The RX 580, as we learned in the review process, isn’t all that different from its origins in the RX 480. The primary difference is in voltage and frequency afforded to the GPU proper, with other changes manifesting in maturation of the process over the past year of manufacturing. This means most optimizations are relegated to power (when idle – not under load) and frequency headroom. Gains on the new cards are not from anything fancy – just driving more power through under load.

Still, we were curious as to whether AMD’s drivers would permit cross-RX series multi-GPU. We decided to throw an MSI RX 580 Gaming X and MSI RX 480 Gaming X into a configuration to get things close, then see what’d happen.

The short of it is that this works. There is no explicit inhibitor built in to forbid users from running CrossFire with RX 400 and RX 500 series cards, as long as you’re doing 470/570 or 480/580. The GPU is the same, and frequency will just be matched to the slowest card, for the most part.

We think this will be a common use case, too. It makes sense: If you’re a current owner of an RX 480 and have been considering CrossFire (though we didn’t necessarily recommend it in previous content), the RX 580 will make the most sense for a secondary GPU. Well, primary, really – but you get the idea. The RX 400 series cards will see EOL and cease production in short order, if they haven’t already, which means that prices will stagnate and then skyrocket. That’s just what retailers do. Buying a 580, then, makes far more sense if you’re dying for a CrossFire configuration, and you could even move the 580 to the top slot for best performance in single-GPU scenarios.

And there will be a lot of single-GPU scenarios. In our history over the past few years, there has not been a single time when GamersNexus has recommended either SLI or CrossFire for wide-reaching gaming use cases. It’s phenomenal in very specific, targeted games, but the additional work and compatibility / scaling issues at large just aren’t worth the hassle. It’s worth revisiting, though; we haven’t tested multi-GPU in 2017, and this marks our first go at it.

We’re using our standardized GPU benchmark platform for these tests, which we’ve also recently deployed for GTX 1080 Ti reviews and RX 580 & RX 570 reviews.

Approach

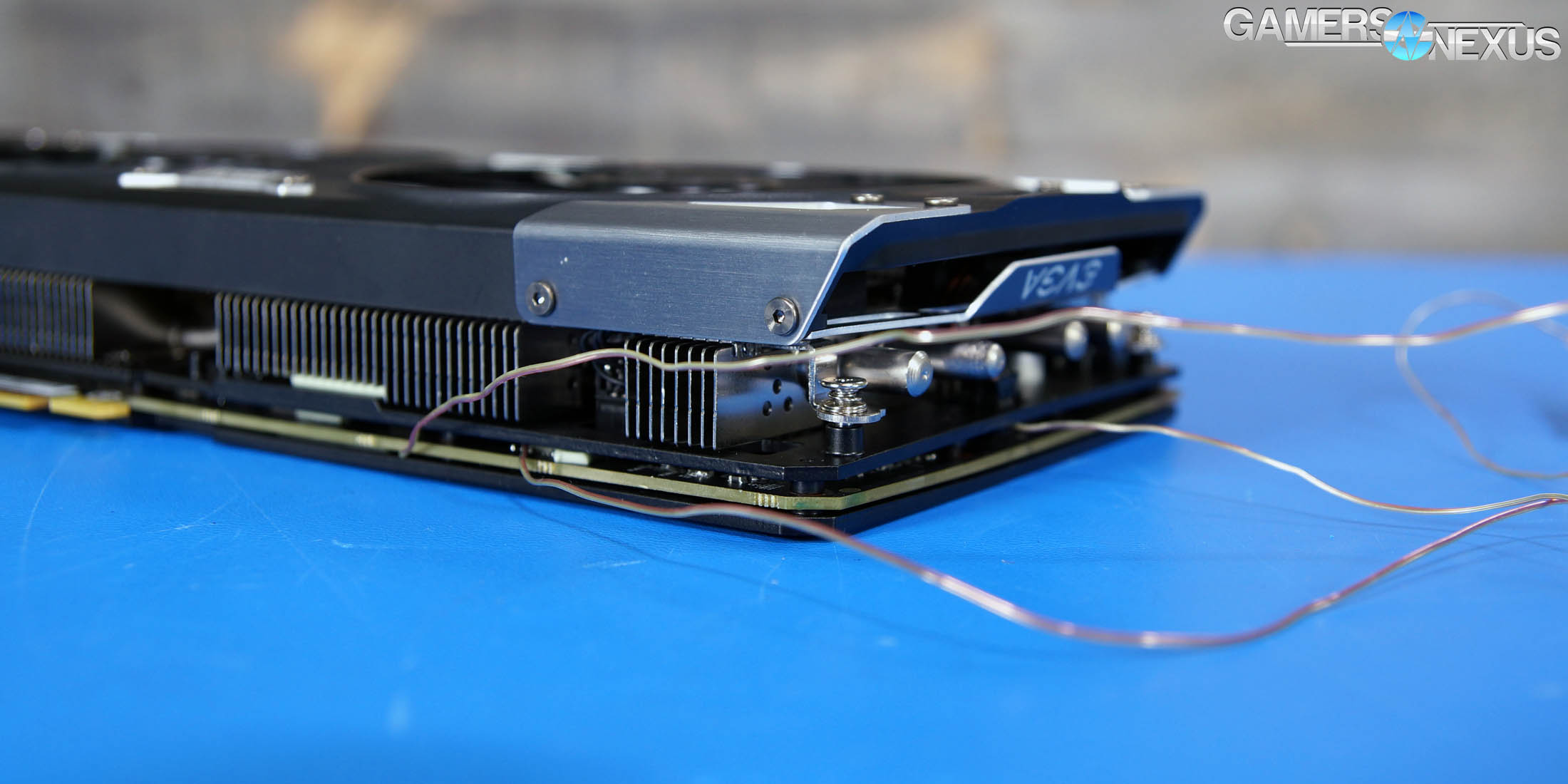

For this test, we’re configuring the RX 580 & 480 Gaming X 8GB cards in CrossFire in our Z270 test bench platform (defined further below). The RX 580 Gaming X takes top slot, but both cards will be limited by the slowest link – that’d be the 480 Gaming X, though not by that much. The main question was whether this would even work, and we quickly learned that the answer is “yes.” The next question was whether scaling was actually worth it, as opposed to instead purchasing a single, more powerful GPU, whether that’s 10-series or future Vega.

GPU Testing Methodology

For our benchmarks today, we’re using a fully rebuilt GPU test bench for 2017. This is our first full set of GPUs for the year, giving us an opportunity to move to an i7-7700K platform that’s clocked higher than our old GPU test bed. For all the excitement that comes with a new GPU test bench and a clean slate to work with, we also lose some information: Our old GPU tests are not comparable to these results because of the completely new testing methodology, new game settings, and new set of games. DOOM, for instance, now has a new test methodology behind it. We’ve moved to Ultra graphics settings with 0xAA and async enabled, also dropping OpenGL entirely in favor of Vulkan + more Dx12 tests.

We’ve also automated a significant portion of our testing at this point, reducing manual workload in favor of greater focus on analytics.

Driver version 378.78 (press-ready drivers for 1080 Ti, provided by nVidia) was used for all nVidia devices. Version 17.10.1030-B8 was used for AMD (press drivers).

A separate bench is used for game performance and for thermal performance.

Thermal Test Bench

Our thermal test methodology is largely parallel to our EVGA VRM final torture test that we published late last year. We use logging software to monitor the NTCs on EVGA’s ICX card, with our own calibrated thermocouples mounted to power components for non-ICX monitoring. Our thermocouples use an adhesive pad that is 1/100th of an inch thick, and does not interfere in any meaningful way with thermal transfer. The pad is a combination of polyimide and polymethylphenylsiloxane, and the thermocouple is a K-type hooked up to a logging meter. Calibration offsets are applied as necessary, with the exact same thermocouples used in the same spots for each test.

Torture testing used Kombustor's 'Furry Donut' testing, 3DMark, and a few games (to determine auto fan speeds under 'real' usage conditions, used later for noise level testing).

Our tests apply self-adhesive, 1/100th-inch thick (read: laser thin, does not cause "air gaps") K-type thermocouples directly to the rear-side of the PCB and to hotspot MOSFETs numbers 2 and 7 when counting from the bottom of the PCB. The thermocouples used are flat and are self-adhesive (from Omega), as recommended by thermal engineers in the industry -- including Bobby Kinstle of Corsair, whom we previously interviewed.

K-type thermocouples have a known error range of approximately ±2.2C. We calibrated our thermocouples by providing them an "ice bath," then providing them a boiling water bath. This provided us the information required to understand and adjust results appropriately.
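As a rough illustration of how an ice bath and a boiling water bath can be turned into a correction, here is a minimal two-point linear calibration sketch. The raw readings are hypothetical, and the 0C / 100C reference points are assumptions (boiling point shifts with altitude and pressure); this is not necessarily the exact adjustment we apply.

```python
def two_point_calibration(raw_ice, raw_boil, ref_ice=0.0, ref_boil=100.0):
    """Build a correction function from two reference measurements (deg C).

    raw_ice / raw_boil are what the thermocouple reported in each bath;
    ref_ice / ref_boil are the assumed true temperatures of those baths.
    """
    if raw_boil == raw_ice:
        raise ValueError("calibration readings must differ")
    gain = (ref_boil - ref_ice) / (raw_boil - raw_ice)
    offset = ref_ice - gain * raw_ice

    def correct(raw):
        # Linear map: raw reading -> corrected temperature
        return gain * raw + offset

    return correct


# Hypothetical bath readings for one thermocouple
correct = two_point_calibration(raw_ice=0.8, raw_boil=101.3)
print(round(correct(65.0), 2))
```

A single fixed offset would also work if the error is the same at both ends; the two-point form just covers the case where it is not.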

Because we have concerns pertaining to thermal conductivity and impact of the thermocouple pad in its placement area, we selected the pads discussed above for uninterrupted performance of the cooler by the test equipment. Electrical conductivity is also a concern, as you don't want bare wire to cause an electrical short on the PCB. Fortunately, these thermocouples are not electrically conductive along the wire or placement pad, with the wire using a PTFE coating with a 30 AWG (~0.0100"⌀). The thermocouples are 914mm long and connect into our dual logging thermocouple readers, which then take second by second measurements of temperature. We also log ambient, and apply an ambient modifier where necessary to adjust test passes so that they are fair.
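One simple way to picture an ambient modifier is as a shift of each logged sample by how far ambient drifted from a reference value. The sketch below assumes a 1:1 linear relationship and a hypothetical 21C reference; both are assumptions made for illustration, not a description of our exact adjustment.

```python
def normalize_to_ambient(readings_c, ambient_c, reference_ambient_c=21.0):
    """Shift each temperature sample by the ambient deviation at that moment.

    readings_c and ambient_c are per-second logs of equal length (deg C).
    reference_ambient_c is a hypothetical common baseline for all passes.
    """
    if len(readings_c) != len(ambient_c):
        raise ValueError("logs must have the same number of samples")
    return [
        temp - (ambient - reference_ambient_c)
        for temp, ambient in zip(readings_c, ambient_c)
    ]


# Hypothetical samples: ambient ran 2C warm during the first second
print(normalize_to_ambient([80.0, 81.0], [23.0, 21.0]))
```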

The response time of our thermocouples is 0.15s, with an accompanying resolution of 0.1C. The laminates are fiberglass-reinforced polymer layers, with junction insulation composed of polyimide and fiberglass. The thermocouples are rated for just under 200C, which is enough for any VRM testing (and if we go over that, something will probably blow, anyway).

To avoid EMI, we mostly guess-and-check placement of the thermocouples. EMI is caused by power plane PCBs and inductors. We were able to avoid electromagnetic interference by routing the thermocouple wiring right, toward the less populated half of the board, and then down. The cables exit the board near the PCI-e slot and avoid crossing inductors. This resulted in no observable/measurable EMI with regard to temperature readings.

We decided to deploy AIDA64 and GPU-Z to measure direct temperatures of the GPU and the CPU (becomes relevant during torture testing, when we dump the CPU radiator's heat straight into the VRM fan). In addition to this, fan speeds, VID, vCore, and other aspects of power management were logged. We then use EVGA's custom Precision build to log the thermistor readings second by second, matched against and validated by our own thermocouples.

The primary test platform is detailed below:

| GN Test Bench 2015 | Name | Courtesy Of | Cost |
| --- | --- | --- | --- |
| Video Card | This is what we're testing | - | - |
| CPU | Intel i7-5930K CPU 3.8GHz | iBUYPOWER | $580 |
| Memory | Corsair Dominator 32GB 3200MHz | Corsair | $210 |
| Motherboard | EVGA X99 Classified | GamersNexus | $365 |
| Power Supply | NZXT 1200W HALE90 V2 | NZXT | $300 |
| SSD | OCZ ARC100 Crucial 1TB | Kingston Tech. | $130 |
| Case | Top Deck Tech Station | GamersNexus | $250 |
| CPU Cooler | Asetek 570LC | Asetek | - |

Note also that we swap test benches for the GPU thermal testing, using instead our "red" bench with three case fans -- only one is connected (directed at CPU area) -- and an elevated standoff for the 120mm fat radiator cooler from Asetek (for the CPU) with Gentle Typhoon fan at max RPM. This is elevated out of airflow pathways for the GPU, and is irrelevant to testing -- but we're detailing it for our own notes in the future.

Game Bench

| GN Test Bench 2017 | Name | Courtesy Of | Cost |
| --- | --- | --- | --- |
| Video Card | This is what we're testing | - | - |
| CPU | Intel i7-7700K 4.5GHz locked | GamersNexus | $330 |
| Memory | GSkill Trident Z 3200MHz C14 | Gskill | - |
| Motherboard | Gigabyte Aorus Gaming 7 Z270X | Gigabyte | $240 |
| Power Supply | NZXT 1200W HALE90 V2 | NZXT | $300 |
| SSD | Plextor M7V Crucial 1TB | GamersNexus | - |
| Case | Top Deck Tech Station | GamersNexus | $250 |
| CPU Cooler | Asetek 570LC | Asetek | - |

BIOS settings include C-states completely disabled with the CPU locked to 4.5GHz at 1.32 vCore. Memory is at XMP1.

We communicated with both AMD and nVidia about the new titles on the bench, and gave each company the opportunity to ‘vote’ for a title they’d like to see us add. We figure this will help even out some of the game biases that exist. AMD doesn’t make a big showing today, but will soon. We are testing:

- Ghost Recon: Wildlands (built-in bench, Very High; recommended by nVidia)

- Sniper Elite 4 (High, Async, Dx12; recommended by AMD)

- For Honor (Extreme, manual bench as built-in is unrealistically abusive)

- Ashes of the Singularity (GPU-focused, High, Dx12)

- DOOM (Vulkan, Ultra, 0xAA, Async)

Synthetics:

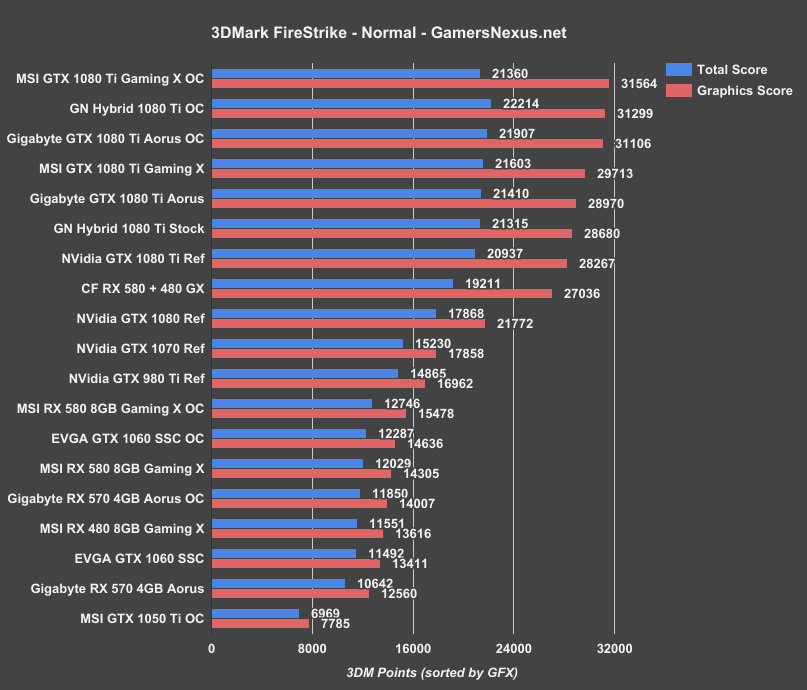

- 3DMark FireStrike

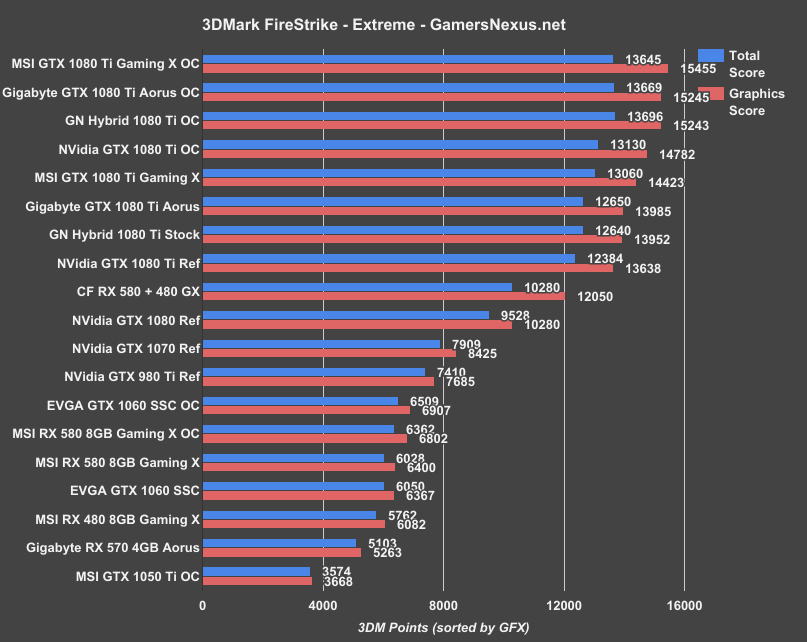

- 3DMark FireStrike Extreme

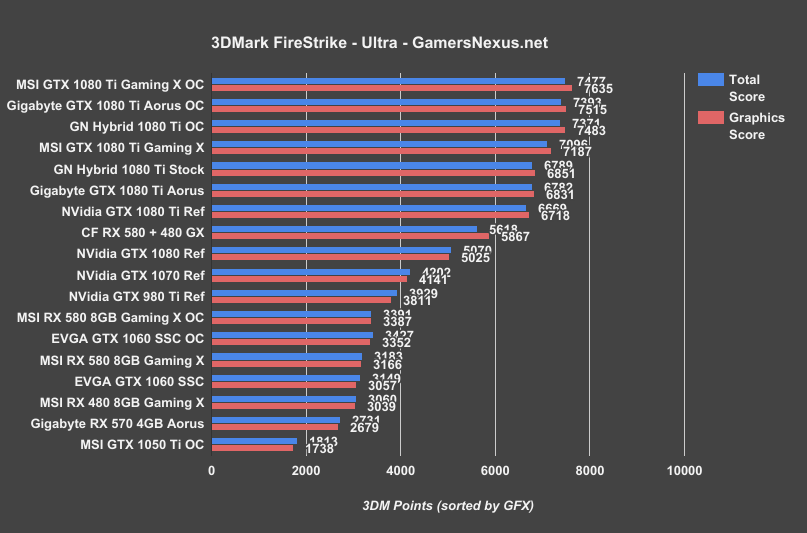

- 3DMark FireStrike Ultra

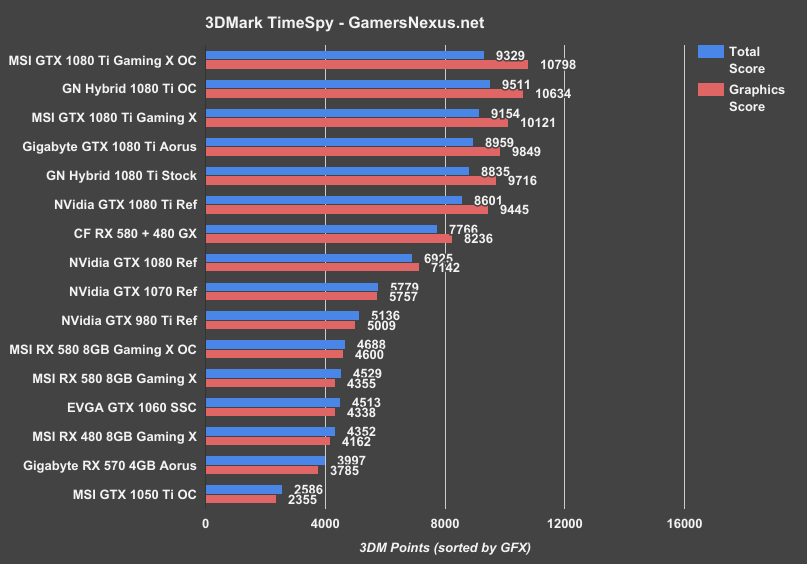

- 3DMark TimeSpy

For measurement tools, we’re using PresentMon for Dx12/Vulkan titles and FRAPS for Dx11 titles. OnPresent is the preferred output for us, which is then fed through our own script to calculate 1% low and 0.1% low metrics (defined here).
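To make the 1% low and 0.1% low idea concrete, here is a minimal sketch of how such metrics can be derived from a list of frametimes in milliseconds. It assumes the "low" values are the average framerate across the slowest 1% and 0.1% of frames; that definition and the function name are assumptions for illustration, and this is not our actual script.

```python
def summarize_frametimes(frametimes_ms):
    """Return average FPS plus 1% and 0.1% low FPS from frametimes (ms)."""
    if not frametimes_ms:
        raise ValueError("need at least one frametime")

    def fps_of(samples):
        # Frames rendered divided by total time spent rendering them
        return 1000.0 * len(samples) / sum(samples)

    slowest_first = sorted(frametimes_ms, reverse=True)

    def low_fps(divisor):
        # Slowest 1/divisor of all frames, always at least one frame
        count = max(1, len(slowest_first) // divisor)
        return fps_of(slowest_first[:count])

    return {
        "avg": fps_of(frametimes_ms),
        "low_1pct": low_fps(100),
        "low_0_1pct": low_fps(1000),
    }


# Hypothetical capture: mostly 10ms frames with a handful of 20ms spikes
stats = summarize_frametimes([10.0] * 990 + [20.0] * 10)
print({name: round(value, 1) for name, value in stats.items()})
```

Averaging the slowest slice, rather than reporting the single worst frame, keeps one outlier from dominating the number while still exposing stutter that an average FPS figure hides.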

Power testing is taken at the wall. One case fan and both SSDs are connected, and the system is otherwise left in the "Game Bench" configuration.


CrossFire RX 580 & RX 480 Power Draw

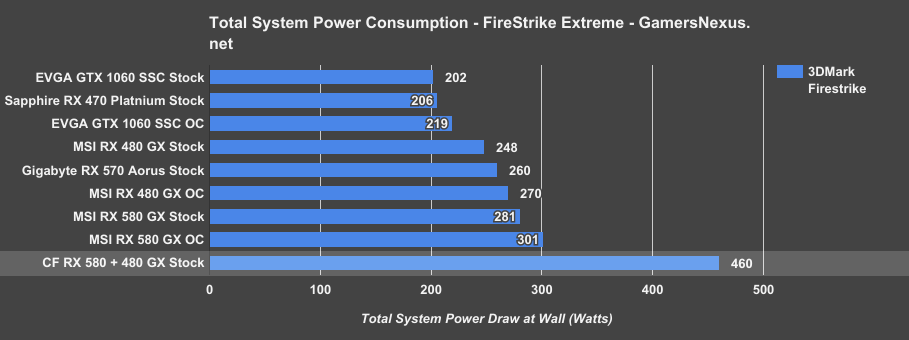

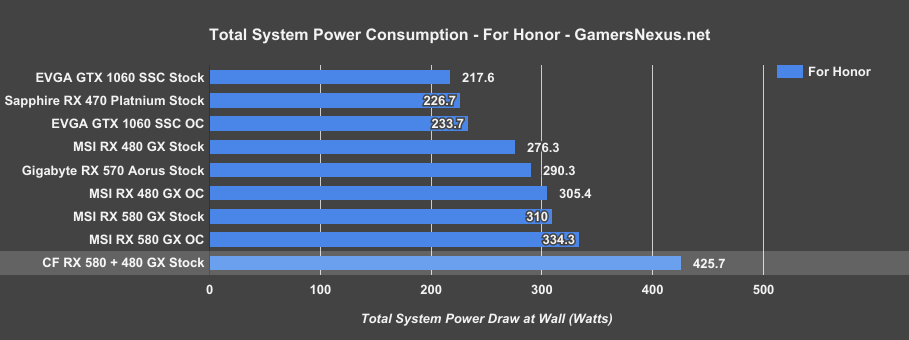

We’ll start this test by measuring total system power draw from the wall, just to get an idea of how much the consumption scales with multiple cards.

With 3DMark FireStrike Extreme, the CrossFire RX 580 and RX 480 Gaming X system is drawing 460W from the wall. For comparison, the RX 480 Gaming X stock system drew 248W, with the 580 stock system at 281W. The CrossFire config is drawing about 64% more power than the single RX 580 Gaming X, or about 85% more power than the single RX 480 Gaming X.

With For Honor, our system power draw is at around 426W load. This profile has some power management issues, judging by fluctuations on the wall meter and by some tearing at 1440p and 4K, with fierce micro-stutter at 4K. Regardless, our power draw is about 37% more than the RX 580 Gaming X stock system, which draws 310W. We’re 54% higher in power draw than the RX 480 stock GPU.
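The percentages above are plain relative increases over each single-card system. A minimal sketch of the arithmetic, using the wall-draw figures quoted in this section (the helper name is ours, for illustration only):

```python
def percent_more_power(multi_gpu_watts, single_gpu_watts):
    """Relative increase in wall draw of one system over another, in percent."""
    return (multi_gpu_watts - single_gpu_watts) / single_gpu_watts * 100.0


# Total system wall draw, in watts, as reported above
firestrike_cf, firestrike_580, firestrike_480 = 460, 281, 248
for_honor_cf, for_honor_580 = 426, 310

print(round(percent_more_power(firestrike_cf, firestrike_580)))  # vs. RX 580
print(round(percent_more_power(firestrike_cf, firestrike_480)))  # vs. RX 480
print(round(percent_more_power(for_honor_cf, for_honor_580)))    # vs. RX 580
```

Keep in mind these are total system numbers, so the CPU and the rest of the platform are included in both the numerator and the baseline.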

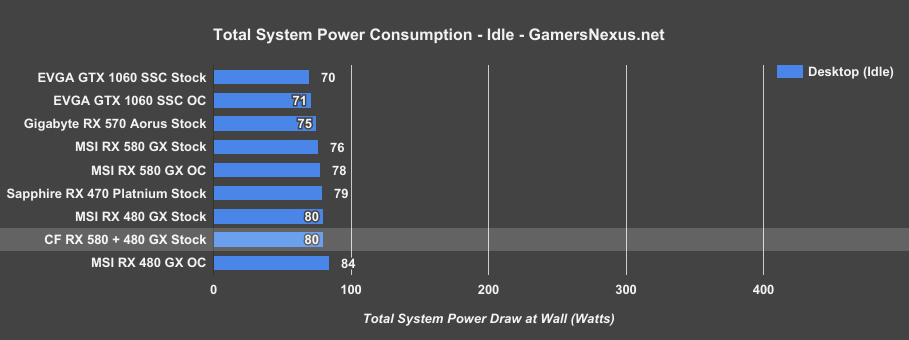

Idle power draw isn’t really affected in a hugely meaningful way. We’ve got a couple extra watts for LEDs and polling, but CrossFire doesn’t really engage on the desktop. We’re looking at around 80W idle wall draw.

3DMark FireStrike – CrossFire RX 580 & RX 480

3DMark TimeSpy – CrossFire RX 580 & RX 480


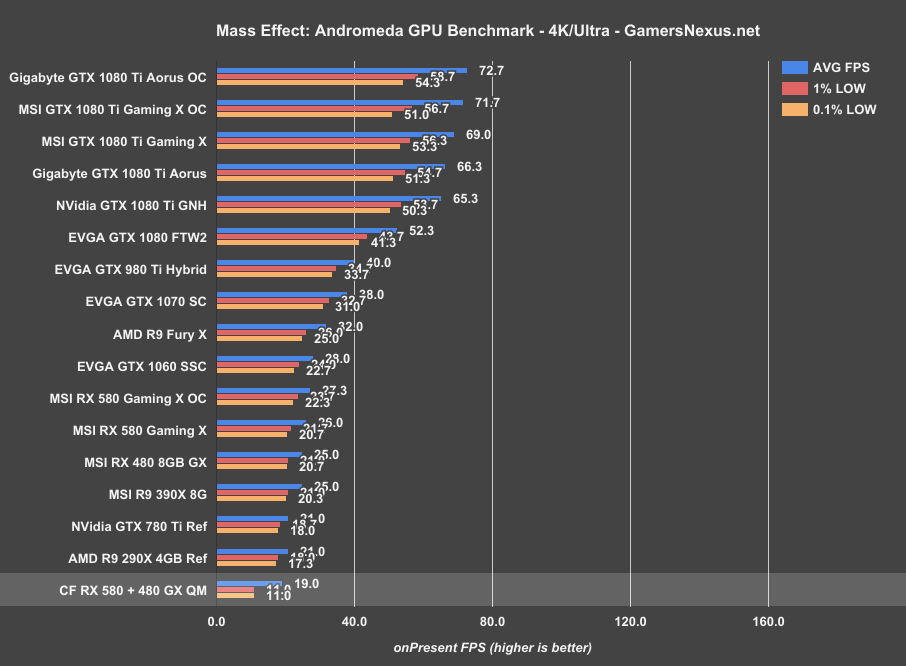

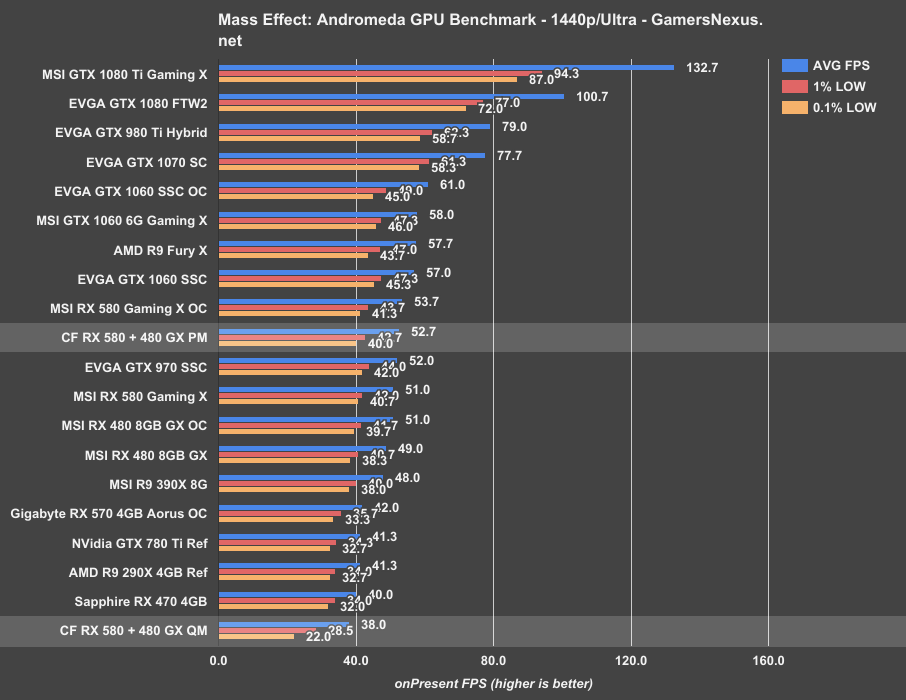

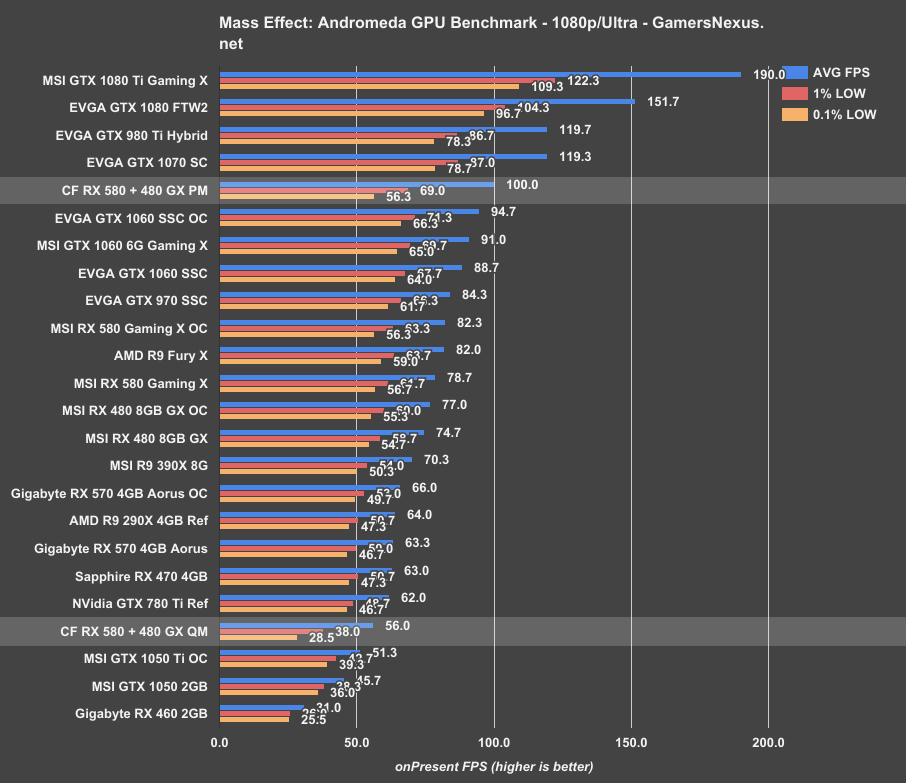

Mass Effect: Andromeda CrossFire Benchmark – 580 + 480 CF

We’re starting with Mass Effect: Andromeda for CrossFire. There’s a big challenge with Mass Effect that seems to be overlooked when considering multi-GPU: Despite folks proclaiming Andromeda’s multi-GPU support, there’s been limited discussion around the reason why – it’s sort of cheating. There is an option that enables by default when going multi-GPU, and it lowers graphics settings to reduce microstutter and improve framerate. The game doesn’t actually tell us which settings it’s lowering, it just does it – and that means that benchmarking in “performance mode” for multi-GPU does not produce comparable numbers to the single card benchmarks, since those tests will be more intensive.

We’ve actually got a few screenshots of performance mode versus quality mode for multi-GPU, where quality mode produces the same image quality as a single card, but with intense micro-stutter at higher resolutions or just generally bad framerates.

In these screenshots, you can see the differences between two scenes with Performance versus Quality mode. This is mostly evident in the change to ambient occlusion quality and local reflections, along with changes to depth shading and refractions. Places like door frames and shiny floors show the biggest change.

Here’s the problem: If you’re testing with performance mode enabled, it’ll produce the best performance for CrossFire – but it’s also cheating, because the single RX 580 or single RX 480 were producing different frames than the CrossFire solution. Quality mode, which is unchanged, is nigh unplayable. We’ll put both numbers on the charts anyway.

4K doesn’t look good for quality mode. We experienced intense, game-breaking micro-stutter that doesn’t even show-up in the already dismal framerate numbers. We’re at 19FPS average, behind both the single RX 480 and single RX 580. This isn’t the first time we’ve seen negative scaling in a game, and it’s pronounced here. You’d be way better off disabling CrossFire.

Quality Mode, producing the same frames as every other card, puts us at 38FPS AVG at 1440p – the worst on the chart, behind even the RX 470. There is intense negative scaling with CrossFire in this title; at least, until we switch to Performance Mode to cheat graphics quality lower. The game now pegs the RX 580 and RX 480 CF configuration at 54FPS AVG, tied with an overclocked RX 580 single-card config. We’re ahead of the RX 580 Gaming X single GPU stock by about 1-2FPS. Even with this mode, you’re far better off disabling this particular CrossFire configuration and running a single card. The graphics will be better and the framerate will be mostly equivalent. It makes no sense to use CrossFire here. Scaling is about 3% in average FPS, if that.

1080p looks about the same for Quality Mode, planting the CrossFire config below the RX 470 in average framerate, and far below it in frametime consistency. Scaling is -29% here. Switching to Performance Mode puts us at 100FPS AVG, with 1% lows at 69 and 0.1% lows at 56FPS. That’s finally showing some real scaling over a single RX 580 Gaming X, which operated at 78.7FPS AVG – but again, the single card was under heavier workload, so it’s debatable how much you can count that as true scaling if the image quality is not identical.

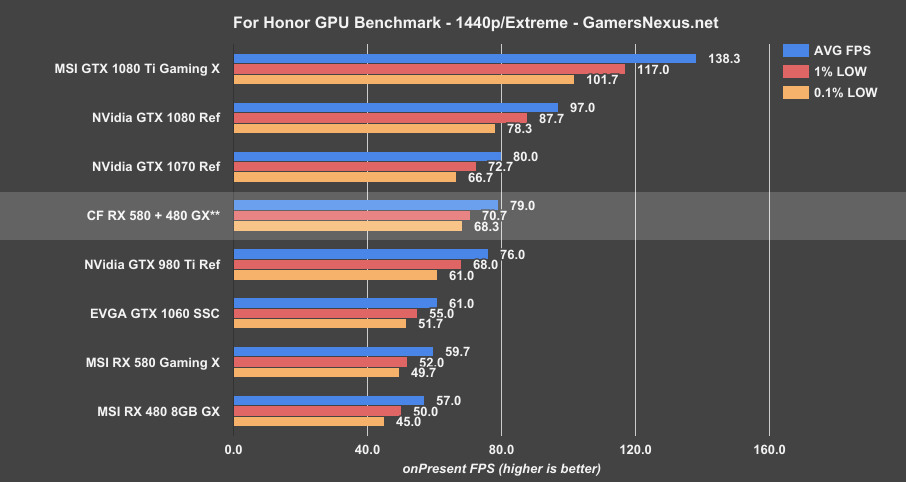

For Honor CrossFire: RX 580 + RX 480

For Honor is another game that has some interesting challenges: CrossFire performed better for us at lower resolutions, like 1440p and 1080p, but worse at 4K. 4K exhibited fierce micro-stuttering that would necessitate disabling CrossFire or lowering resolution, as it was utterly unplayable, despite not showing up as much in benchmark numbers. We’ll skip 4K results for that reason.

Moving instead to a more playable 1440p, we’re getting 79FPS AVG with 71 and 68FPS lows on the mixed CrossFire configuration, showing positive scaling over the single RX 580 Gaming X. The performance gain is about 32%, with an insignificant amount of tearing as a side effect. Scaling over a single RX 480 is about 39%. This puts the configuration between the 980 Ti and GTX 1070 reference card.

At 1080p, scaling is again positive, with a 120FPS AVG throughput and lows at or above 100FPS. The RX 580 Gaming X single card performs around 93FPS AVG, showing that the mixed CrossFire cards scale over a single 580 Gaming X by about 29%. We’re still below a single GTX 1070 reference card.

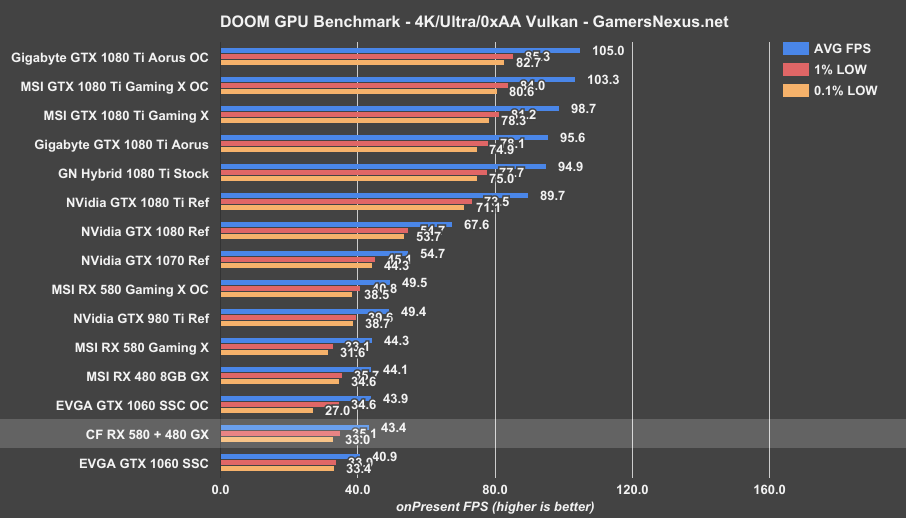

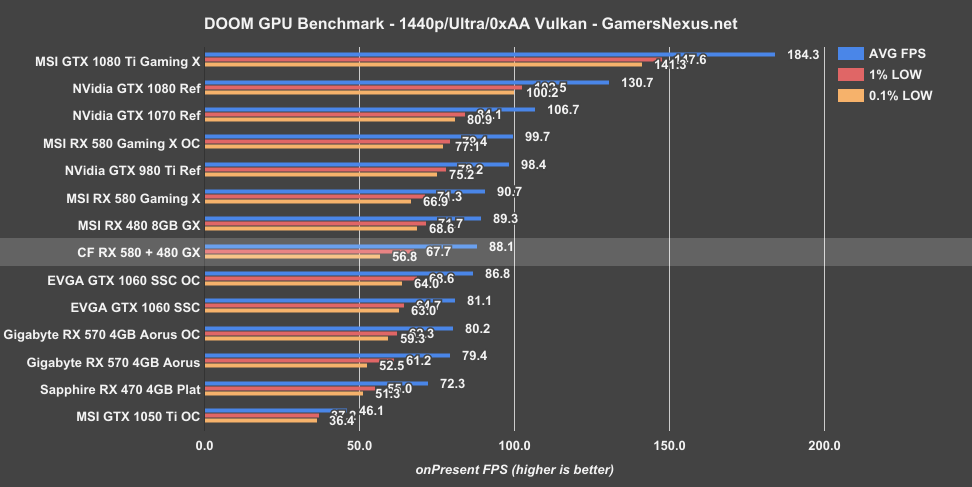

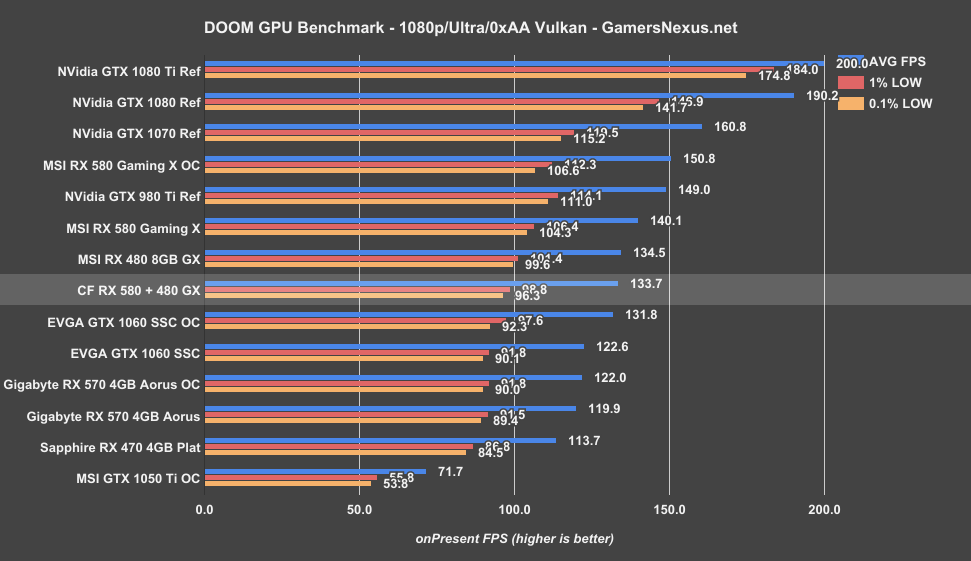

DOOM (Vulkan) CrossFire Benchmark: RX 580 + RX 480

DOOM is up next on the list, using Vulkan as the API of choice.

Vulkan, just like OpenGL, has never really delivered for multi-GPU users in DOOM. We’re not seeing any scaling here. At 4K, the RX 580 and CrossFire configuration are basically the same. That carries over to 1440p, where there’s slight overhead without any scaling to speak of. CrossFire devices – even if you were to use the same exact device – just don’t scale in this game. 1080p is no different. Slight overhead, worst case.

Ashes of the Singularity CrossFire Benchmark: RX 580 + RX 480

We enable the “multi-GPU” toggle for this test. Scaling is positive in averages – north of 40% -- but we saw poor frametime consistency in AOTS.

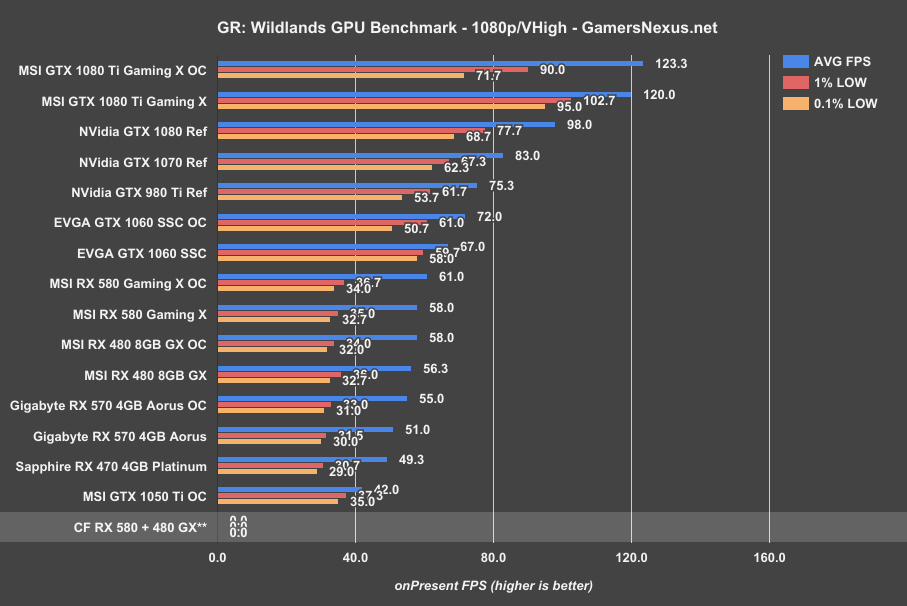

Ghost Recon: Wildlands CrossFire Benchmark

Ghost Recon: Wildlands is a little bit worse than lack of scaling – we just couldn’t even get the benchmark to start. The game hard crashed at all resolutions with our Very High test settings, earning the CrossFire RX 580 + 480 a solid DNF – they did not finish any tests with this game.

Sniper Elite 4 CrossFire Benchmark – 2x Scaling

Sniper Elite offers some redemption, though. At 4K with Dx12 and Async compute enabled, we’re seeing the CrossFire RX 580 and 480 Gaming X cards place at 79FPS AVG, with lows at 63 and 60. The RX 580 Gaming X single card operated at about 40FPS AVG, with lows linearly lower than the CrossFire config. That’s scaling of nearly 2x – we almost never see that in any games. Sniper Elite is one of the few titles that can make a case for multi-GPU, but it is surrounded by a games market that is otherwise mixed in multi-GPU readiness.

The 79FPS AVG of our mixed CrossFire configuration plants the cards ahead of a reference 1080, and about 8% behind the 1080 Ti Gaming X. Impressive performance. Rare, but impressive in this instance of Sniper 4.

Unfortunately, it’s not just a low-level API thing: We didn’t see any scaling in DOOM with Vulkan, and Ashes of the Singularity posted scaling of about 46.5% in averages, with poor consistency overall. This has more to do with developers than with throwing some magical API toggle that makes multi-GPU good.
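For reference, the scaling figures in these benchmarks come from comparing the CrossFire average FPS against the single RX 580 Gaming X average in the same test. Here is a small sketch using only averages quoted in this article; the dictionary layout and function name are illustrative assumptions.

```python
def scaling_percent(crossfire_fps, single_fps):
    """Percent gain of the CrossFire config over a single card (negative = loss)."""
    return (crossfire_fps / single_fps - 1.0) * 100.0


# (single RX 580 Gaming X AVG FPS, CrossFire AVG FPS), taken from the text
results = {
    "Sniper Elite 4, 4K": (40.0, 79.0),
    "For Honor, 1080p": (93.0, 120.0),
    # Performance Mode lowers settings, so this is not a like-for-like result
    "Mass Effect: Andromeda, 1080p (Performance Mode)": (78.7, 100.0),
}

for title, (single_fps, crossfire_fps) in results.items():
    gain = scaling_percent(crossfire_fps, single_fps)
    print(f"{title}: {gain:.1f}% scaling")
```

Perfect two-card scaling would be 100% by this measure, which is why the Sniper Elite 4 result stands out against everything else on the list.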

Conclusion: CrossFire RX 580 + RX 480 Worth It?

An RX 580 and RX 480 will work in CrossFire, so that’s the first part of this. It will run if you really want it to. We don’t recommend it, though, just like we’ve never recommended SLI or CrossFire for gaming in the past. Maybe for very specific use cases, sure; if Sniper Elite is the only game you want to play, it’s a fantastic investment.

Unfortunately, Sniper Elite is surrounded by peers who can’t handle multi-GPU well. Mass Effect cheats multi-GPU into working by lowering graphics settings to prevent microstutter, which results in worse picture quality and identical performance to a single RX 580 card. You’d be better off running single-GPU, here. The same is true for Ghost Recon. For Honor scales OK at lighter workloads, but introduces intense microstutter at 4K. Ashes has frametime issues. DOOM (Vulkan/OpenGL) just doesn’t show scaling with multi-GPU.

This is the same case as it’s always been, in our coverage: You’d be better off buying a single, more powerful GPU than going multi-GPU, unless playing very specific games where it’s advantageous to have two cards. If there’s one game you’re playing a lot, look up CF benchmarks and figure out if it’ll benefit. Otherwise, the hassle isn’t worth it. Vega sounds good, in this regard, as does a GTX 10-series video card (1070, 1080, and 1080 Ti all mostly do better than CF X80 configurations).

It may not even be worth the investment if you already own one card – like the 480 – just depending on what games are most heavily played, and there’s no betting on developers to amp-up multi-GPU support anytime soon.

The config works, though. So that’s interesting.

Our main takeaway is that Sniper Elite 4 has incredible scaling. Good on them.

Editor-in-Chief: Steve Burke

Video Producer: Andrew Coleman