A frame's arrival on the display is predicated on an unseen pipeline of command processing within the GPU. The game's engine calls the shots and dictates what's happening instant-to-instant, and the GPU is tasked with drawing the triangles and geometry, applying textures, rendering lighting, running post-processing effects, and dispatching the packaged frame to the display.

The process repeats dozens of times per second – ideally 60 or higher, as in 60 FPS – and is only feasible through the joint efforts of GPU vendors (IHVs) and engine, tools, and game developers (ISVs). The canonical view of game graphics rendering can be thought of as starting with the geometry pipeline, where the 3-dimensional model is created. Eventually, lighting gets applied to the scene, textures and post-processing are applied, and the scene is compiled and “shipped” for the gamer's viewing. We'll walk through the GPU rendering and game graphics pipeline in this “how it works” article, with detailed information provided by nVidia Director of Technical Marketing Tom Petersen.

GPU Rendering Pipeline – Geometry

(Above: Tessellation applied to spheres)

The geometry pipe starts the process. The GPU begins assembling 3D models – that'd be characters, buildings, and other object assets – with polys and triangles, building the geometry that comprises the object. You've heard us talk about poly count before, like with Chris Roberts of the Star Citizen team, and this is precisely where that comes into play. We've also used terms like “geometric complexity,” which refers to objects and models which are densely-populated with polygons and sculpted detail.

(Above: An example of the camera's perception of 3D space)

The GPU begins assembling these independent models, each of which has its own coordinate system. Translation, rotation, and scaling are applied next (as in Photoshop, each of these transforms the subject in some way). This process positions vertices within the 3D world space, marking one of the first steps of assembling the finalized object placement in the frame. The GPU hasn't begun applying lighting or shading just yet and is still 100% focused on finalizing that geometry creation and placement. Once the vertices are dimensionally positioned, transforms are applied to vertices and geometric data, paving the way to the next process in the pipeline.
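To make the translate, rotate, and scale step concrete, here's a minimal sketch of moving one model's vertices from their own coordinate system into world space. The function name, the choice of rotating only about the vertical axis, and the sample numbers are our own assumptions for illustration; real engines normally fold all of this into a single matrix multiply per vertex.

```python
import math

def model_to_world(vertex, scale, yaw_degrees, translation):
    """Scale, rotate about the Y axis, then translate one (x, y, z) vertex.

    Hypothetical helper for illustration only.
    """
    x, y, z = vertex
    # Scale within the model's own coordinate system.
    x, y, z = x * scale, y * scale, z * scale
    # Rotate about the vertical (Y) axis.
    a = math.radians(yaw_degrees)
    rx = x * math.cos(a) + z * math.sin(a)
    rz = -x * math.sin(a) + z * math.cos(a)
    # Translate to the model's position in the world.
    tx, ty, tz = translation
    return (rx + tx, y + ty, rz + tz)

# A single triangle defined in model space.
triangle = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0)]
# Double its size, turn it 90 degrees, and place it at (10, 0, 5).
world = [model_to_world(v, 2.0, 90.0, (10.0, 0.0, 5.0)) for v in triangle]
```

Every vertex goes through the same math independently of its neighbors, which is part of why this stage suits parallel hardware.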

GPU Rendering Pipeline – Projection

In the above video, Petersen continues from geometric processing to lighting:

“After you've [created the geometry], you apply different geometric- or vertex-oriented transforms. You're going to do things like per-vertex lighting; what you're doing is making that world more elaborate, more descriptive of the model.

“Now, you've created this world – you have to figure out how to get it onto the screen. That's done using something called 'projection.' All of this is still happening in the geometry pipeline, but projection is the very last stage of vertices where we're sort of taking a camera and virtually pointing it at a position in the world, and then dealing with things like perspective. All we're doing is math – translating the geometry effectively to a different coordinate space that is from the screen's perspective.”
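The “math” Petersen describes can be sketched with a simple perspective projection. This is a simplified illustration, not how any particular GPU or API implements it: we assume the camera sits at the origin looking down the positive Z axis, and the function name and parameters are hypothetical.

```python
import math

def project_to_screen(point, fov_y_degrees, width, height):
    """Project a camera-space (x, y, z) point to pixel coordinates.

    Assumes the camera is at the origin looking down +Z.
    """
    x, y, z = point
    if z <= 0:
        return None  # Behind the camera; nothing to project.
    # Focal scale derived from the vertical field of view.
    f = 1.0 / math.tan(math.radians(fov_y_degrees) / 2.0)
    aspect = width / height
    # Dividing by z is what produces perspective: distant points
    # crowd toward the center of the image.
    ndc_x = (f / aspect) * x / z
    ndc_y = f * y / z
    # Map the -1..1 range onto the pixel grid (Y grows downward).
    px = (ndc_x + 1.0) * 0.5 * width
    py = (1.0 - ndc_y) * 0.5 * height
    return (px, py)

center = project_to_screen((0.0, 0.0, 5.0), 90.0, 1920, 1080)
near = project_to_screen((1.0, 0.0, 2.0), 90.0, 1920, 1080)
far = project_to_screen((1.0, 0.0, 20.0), 90.0, 1920, 1080)
```

The `near` and `far` points share the same sideways offset from the camera, but the far one lands much closer to the middle of the screen.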

The next function is culling of unnecessary data. Z-culling, for example, looks at the Z-axis (depth) of the scene and determines which data will go unused. If there's a lamp post that's partially obscured by a foreground object, like a car, the unseen elements of that lamp post will be “culled” from the pipe (Z-culling) to eliminate unnecessary workload on the GPU. The same is true for geometry that falls outside the range of the Z-buffer or the camera frustum (both explained here).
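The lamp post example can be pictured as a per-pixel depth comparison. The sketch below is our own simplification: the fragment tuples and names are made up, and real GPUs do this in hardware, typically rejecting hidden work before it's ever shaded rather than counting it afterward.

```python
def resolve_visibility(fragments, width, height):
    """Keep, per pixel, only the fragment nearest the camera (smallest z).

    `fragments` is a list of (name, x, y, z) tuples; a hypothetical
    format chosen for this illustration.
    """
    depth = [[float("inf")] * width for _ in range(height)]
    owner = [[None] * width for _ in range(height)]
    culled = 0
    for name, x, y, z in fragments:
        if z < depth[y][x]:
            if owner[y][x] is not None:
                culled += 1  # The earlier fragment is now hidden.
            depth[y][x] = z
            owner[y][x] = name
        else:
            culled += 1  # Something nearer already covers this pixel.
    return owner, culled

fragments = [
    ("lamp post", 0, 0, 10.0),
    ("lamp post", 1, 0, 10.0),
    ("car", 1, 0, 4.0),  # The car sits in front of part of the lamp post.
]
owner, culled = resolve_visibility(fragments, 2, 1)
```

Here the lamp post survives at the first pixel, while the car wins the second pixel and one lamp post fragment is discarded.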

(Above: Clay rendering progression, clockwise, from geometry to full shading and post-FX. Source: Thinkchromatic.com)

Of this process, Petersen explains:

“You do things called 'clipping,' which is going to scissor-out the geometry that you really want to render. The last stage is converting this geometry to pixels. The process of pixelizing or rasterizing is... imagine you're going to sample across pixels on your screen now. There's another look-up that happens where you're saying, 'I want to go after the first pixel on the upper left; I'm going to project into my little, now-clipped geometry and figure out which primitives – pixels or lines or dots – are affecting that pixel.' By looking at which triangles are affecting a pixel, you can run a shader program. The shader program is tied to the geometry that's modifying that pixel.
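The “which triangle affects this pixel” look-up can be sketched with edge functions, one common way to test whether a pixel center falls inside a 2D triangle. This is an illustrative software version with names of our own choosing; it isn't taken from the interview, and hardware rasterizers are far more sophisticated.

```python
def edge(a, b, p):
    """Signed test for which side of edge a->b the point p lies on."""
    return (b[0] - a[0]) * (p[1] - a[1]) - (b[1] - a[1]) * (p[0] - a[0])

def covers(tri, p):
    """True if point p is inside (or on the border of) the triangle."""
    a, b, c = tri
    w0, w1, w2 = edge(a, b, p), edge(b, c, p), edge(c, a, p)
    # Inside when all three tests agree in sign (either winding order).
    return (w0 >= 0 and w1 >= 0 and w2 >= 0) or (w0 <= 0 and w1 <= 0 and w2 <= 0)

def rasterize(tri, width, height, shader):
    """Run `shader` for every pixel whose center the triangle covers."""
    frame = [[None] * width for _ in range(height)]
    for y in range(height):
        for x in range(width):
            if covers(tri, (x + 0.5, y + 0.5)):
                frame[y][x] = shader(x, y)
    return frame

tri = ((0.0, 0.0), (4.0, 0.0), (0.0, 4.0))
# A stand-in "shader" that just marks covered pixels.
frame = rasterize(tri, 4, 4, lambda x, y: "#")
rows = ["".join(cell or "." for cell in row) for row in frame]
# rows == ["####", "###.", "##..", "#..."]
```

The `shader` callback stands in for the pixel shader program: it only runs where the triangle actually touches a pixel.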

“Summarizing – you kind of create this 3D world, apply FX like colorization and lighting at the global level, then you project it to your screen, but you're still in the geometric pipeline. Then you convert it to pixels, and while converting it to pixels, you apply elaborate effects – things like shadows, complex textures, and beautifulization – that's all happening in the pixel shader. At the end of the day, it's all about the transformation from 3D geometry in the geometry pipeline, and then – in the pixel shader pipeline – converting it to individual dots.”

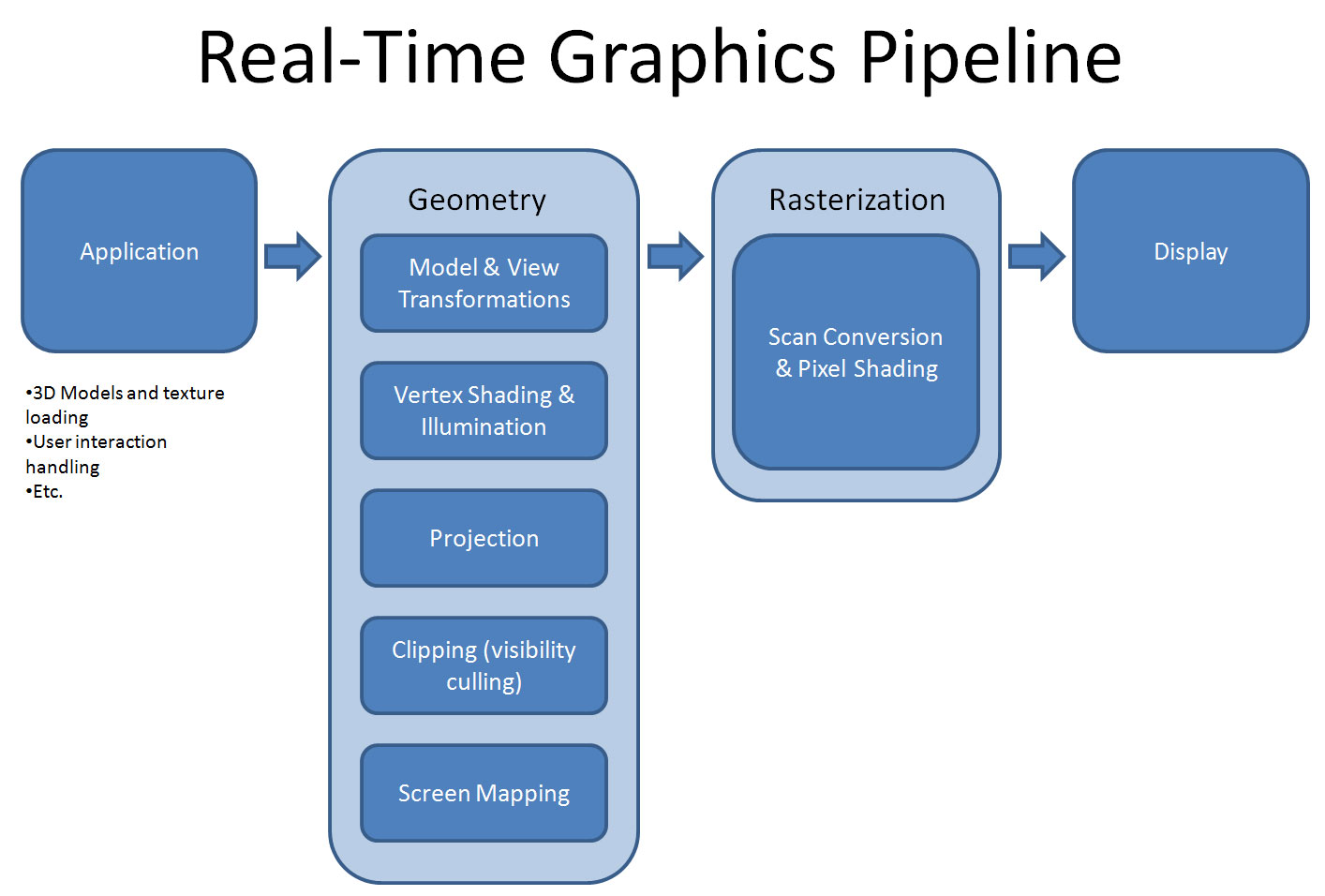

Canonical View of the Pipeline

(Above: Simplified GPU rendering pipeline. Source: CG Channel)

The move to increasingly programmable GPUs has changed the canonical view of the pipeline, largely thanks to increased developer effort in managing shader programs and pre-GPU graphics management. Tessellation and transforms are performed in-step with work done by shader programs, which eventually lead into the geometry shader for deformations. Read our previous Epic Games technical interview to learn about deforms and tessellation.

All of this is cycled through the same SM on the hardware, which is now driven by developer-made software tools. The geometry pipeline concludes with fixed-function hardware that manages projection, transforms, and clipping, and finishes with an array that's indexed and rasterized into.

Petersen noted that this is all different from “how it used to be”:

“That's very different, by the way, from where it used to be, which was every transform had fixed functions. Initially, it was all just hardware and a lot of it was done on the CPU. Then the idea of a vertex processor or geometry processor kind of emerged, but it was fixed function again. Over time, somebody said, 'you know, if we could have a program do that – wouldn't that be cool? It wouldn't have to be so flat.'

“Our pipeline has become far more programmable over the years and effects have matured as programmability has enabled them.”

Examples of Graphics Elements that Use VRAM

Textures are the easiest example of a game graphics element that consumes VRAM. This is demonstrated readily in our Black Ops III graphics optimization guide (or GTA V optimization guide). Textures are stored as two-dimensional arrays that are flattened in memory, then applied to objects. When the artist creates a texture map, that map is created in two-dimensional space (Photoshop and Allegorithmic are common tools) and assigned to the object.

Textures are mapped on a per-pixel level to the geometry, so color is individually determined for each pixel eventually projected to the screen. This is all mapped to video memory (whether that's GDDR5, GDDR5X, or HBM) so that the GPU can locally process data as it's requested by the game.
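As a rough sketch of what “flattened in memory” and per-pixel mapping mean, the example below stores a tiny 2x2 texture as a one-dimensional list and looks up a color from a pair of 0-to-1 texture coordinates. The function names are hypothetical, the sampling is the simplest nearest-texel kind, and the size estimate assumes uncompressed 4-byte texels with no mipmaps, which real games usually improve on with compression.

```python
def texel_index(x, y, width):
    """Position of texel (x, y) inside the flattened, row-by-row array."""
    return y * width + x

def sample_nearest(texels, width, height, u, v):
    """Return the texel nearest to coordinates (u, v), each in 0..1."""
    x = min(int(u * width), width - 1)
    y = min(int(v * height), height - 1)
    return texels[texel_index(x, y, width)]

def texture_bytes(width, height, bytes_per_texel=4):
    """Uncompressed footprint; assumes 4 bytes per texel, no mipmaps."""
    return width * height * bytes_per_texel

WHITE, BLACK = (255, 255, 255), (0, 0, 0)
# A 2x2 checkerboard, stored row by row in a single flat list.
checker = [WHITE, BLACK,
           BLACK, WHITE]

top_right = sample_nearest(checker, 2, 2, 0.75, 0.25)
footprint = texture_bytes(4096, 4096)
```

Under those assumptions, a single 4096x4096 texture works out to 67,108,864 bytes (64 MiB), which gives a sense of how quickly high-resolution textures fill video memory.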

We asked Petersen about challenges in this process:

“You can think about the real challenge of this pipeline [being that] you want it all to flow. You don't really calculate intermediate data structures and store them in memory. You want the whole thing to just be [that] a vertex comes in, it gets transformed, and then a vertex goes out, it gets transformed. This whole thing is basically designed to be a one-in and one-out across vastly parallel structures.”
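The “one-in and one-out” idea can be loosely illustrated with chained Python generators, where each vertex passes through every stage without an intermediate list ever being built. This is only an analogy of our own making: the stage names are hypothetical, and it runs one vertex at a time where a GPU would process huge numbers of them in parallel.

```python
def transform(vertices, offset):
    """Stage 1: move each vertex as it arrives; nothing is stored."""
    for x, y, z in vertices:
        yield (x + offset[0], y + offset[1], z + offset[2])

def project(vertices, focal_length):
    """Stage 2: perspective-divide each vertex as it arrives."""
    for x, y, z in vertices:
        yield (focal_length * x / z, focal_length * y / z)

source = iter([(0.0, 0.0, 1.0), (1.0, 1.0, 1.0), (2.0, 0.0, 3.0)])
# Chain the stages; no work happens until a result is requested.
stream = project(transform(source, (0.0, 0.0, 1.0)), 1.0)

first = next(stream)   # Pulls exactly one vertex through both stages.
rest = list(stream)    # Drains the remaining vertices.
```

Requesting `first` pulls a single vertex through both stages before the second vertex is even read from the source.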

To learn more about the pipeline, watch our above video interview with Tom Petersen. We'd also recommend our interview with Allegorithmic on GPU limitations and graphics creation. Our interview with Epic Games talks tessellation, and a previous interview with Crytek talks physically-based rendering.

We previously filmed one of these interviews with AMD. You can find that content here.

Editorial: Steve “Lelldorianx” Burke

Film: Keegan “HornetSting” Gallick

Video Editing: Andrew “ColossalCake” Coleman