At PAX Prime, thanks to the folks at Valve and HTC, we got another first-hand look at what may be the best option in personal VR to date: the Vive.

Our first encounter with the Valve/HTC Vive was at GDC 2015, the headset’s first showcase, where we were limited in both information and recording permission. HTC and nVidia brought the Vive to PAX Prime this year, and HTC brought us into its conference room for another lengthy, hands-on demonstration. We took the opportunity to talk tech with the HTC team, learning all about how Valve and HTC’s VR solution works, the VR pipeline, latencies and resolutions, wireless throughput limitations, and more. The discussion was highly technical, which is right up our alley, and greatly informed us on the VR process.

See the Vive in use in the video below, where we compare our individual experiences and talk tech:

The Vive uses two 1080x1200 OLED screens packed behind specially crafted lenses, a top-level design similar to that of the Rift and other competitors. The displays refresh at 90Hz, as do the Rift's. The unit consists of a wired head-mounted display (HMD), two “lighthouses” for active environment scanning, and a pair of wireless, hand-held controllers to aid interaction with the VR environment.
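For a rough sense of what those panel specs ask of the PC, the arithmetic can be sketched as follows. The figures are the resolution and refresh rate quoted above; the function name is ours, purely for illustration, and is not part of any Vive or SteamVR API.

```python
# Rough render budget implied by the panel specs quoted above.
# frame_budget is a hypothetical helper name used only for this illustration.

def frame_budget(width=1080, height=1200, panels=2, refresh_hz=90):
    """Return (pixels per frame, pixels per second, milliseconds per frame)."""
    pixels_per_frame = width * height * panels
    pixels_per_second = pixels_per_frame * refresh_hz
    ms_per_frame = 1000.0 / refresh_hz
    return pixels_per_frame, pixels_per_second, ms_per_frame


if __name__ == "__main__":
    per_frame, per_second, ms = frame_budget()
    print(f"{per_frame:,} pixels per frame across both panels")
    print(f"{per_second:,} pixels per second")
    print(f"{ms:.2f} ms available per frame")
```

In other words, at 90Hz the PC has roughly 11 ms to finish both eye views for every frame.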

So, what we knew before going into our demo session was that there were several VR solutions and they shared many similar features. What we wanted to learn was exactly how this rendition, the Vive, works. Here’s what we learned.

The PC-VR Pipeline

It all starts with the PC. The SteamVR API has to be installed in order for the system to function. It's our understanding that this API is available for Windows, Linux, and Mac operating systems. The system we used in the demo was a Windows box.

The API connects the game software to the hardware so that the appropriate signals are sent to the headset and controllers. From there, the signals travel across a combo cable made up of a power supply cable, an HDMI cable, and a USB cable. This cable trails across the floor to the user and, in our demo, had about 3 meters of slack: enough for ample movement around the room, but not so much that it could be easily tripped over.

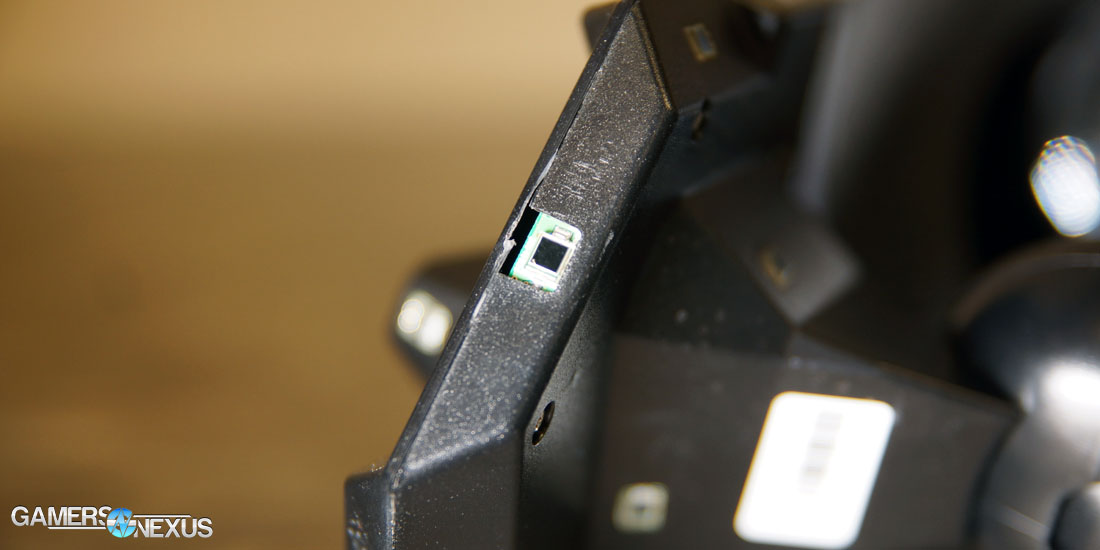

The cables then travel up the back of the user, over the head, and plug into the top of the headset. In our demo, there was a slight modification to this pattern: the USB cable actually plugged into a USB hub attached to the top of the headset. The purpose of the hub was to connect the two USB wireless dongles used for hand-held controller communication (one USB RF dongle per controller). Note: This hub and the dongles are supposed to go away in the production version, but we couldn't get a direct answer on how that was going to happen.

The last physical connection in the system is a 3.5mm stereo jack that is also on the top-front of the headset. The jack is designed to connect the audio signal, which arrived via the HDMI cable, to a gaming style headset. If we stopped at this point, we would have a functional VR headset with usable controls and immersive audio, but that is not why this system was designed. The goal for this system is to be able to move in a VR world and manipulate VR objects.

Laser Beams & Math

This is where the really cool mathematics and laser beams come into play to create a 5m x 5m playspace. In order to determine user location, both the controllers and headset are equipped with large arrays of photodiodes.

These diodes use a laser field, created by what Valve calls Lighthouse Base Stations (LBS), and mathematics to continuously feed information to the PC about our position on the floor. The mechanical engineer working with us, Levi Miller of Valve, provided a useful analogy for how this system works:

The lighthouse stations are like satellites in a GPS system. They are constantly sending information in the form of IR beams that are laced around the room by rotational motors that move them up and down, left and right. The other part of the "GPS" would be the controllers and the headset, which receive the signals and do the locational computation – much like the actual GPS unit on a mobile phone or vehicle. That’s the gist of how the system works.
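To make the GPS analogy a little more concrete, here is a heavily simplified sketch of the kind of math involved. It rests on assumptions of ours that Valve did not confirm: that a photodiode can time how long after a sync pulse a sweeping beam reaches it, that the beam turns at a constant rate (we borrow the 60Hz figure quoted later for the base stations), and that both bearings are measured in a shared floor coordinate frame. It also flattens the problem to 2D with two base stations at known positions, whereas the real system works in 3D from many photodiodes. All function names are hypothetical.

```python
import math


def sweep_angle(seconds_since_sync, rotation_hz=60.0):
    """Angle in radians the beam has turned since the (assumed) sync pulse."""
    return 2.0 * math.pi * rotation_hz * seconds_since_sync


def locate_2d(station_a, angle_a, station_b, angle_b):
    """Intersect two bearing rays, one from each base station, on the floor plane."""
    ax, ay = station_a
    bx, by = station_b
    dax, day = math.cos(angle_a), math.sin(angle_a)
    dbx, dby = math.cos(angle_b), math.sin(angle_b)
    # 2D cross product of the two ray directions; zero means parallel rays.
    denom = dax * dby - day * dbx
    if abs(denom) < 1e-12:
        raise ValueError("bearings are parallel; no unique position")
    # Distance along ray A at which it meets ray B.
    t = ((bx - ax) * dby - (by - ay) * dbx) / denom
    return ax + t * dax, ay + t * day


if __name__ == "__main__":
    # Two stations on one edge of a 5m-wide space (positions are made up).
    angle_a = sweep_angle(1.0 / 480.0)  # one eighth of a turn, 45 degrees
    angle_b = sweep_angle(1.0 / 160.0)  # three eighths of a turn, 135 degrees
    x, y = locate_2d((0.0, 0.0), angle_a, (5.0, 0.0), angle_b)
    print(f"estimated position: ({x:.2f}, {y:.2f}) m")
```

With the made-up timings in the usage example, the two bearings cross 2.5 m along and 2.5 m out from the edge holding the stations.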

Wireless Limits & Expanding Playspace

After using the Vive VR system, every user we spoke to had the same thought: "Can we make the playable area bigger?" We explored the idea with the Valve rep and were told that, theoretically, the answer is "yes." He referred to the idea of expansion as "tiling," stating that a user could place LBSs throughout an area as long as the edges of their patterns overlapped. There are, however, limitations. Remember that we had a three-part cable attached to our setup. In order to expand the usable area, the cable would have to be extended as well. Signal degradation begins to occur for high-bandwidth applications on HDMI around 15 meters (see: hdmi.org). Without some rigged ceiling setup and crazy cabling with amplifiers, that becomes a tough solution.
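The "tiling" condition described above, that neighboring patterns must overlap at their edges, can be sketched as a simple check. This is only an illustration under our own assumptions: each base-station pattern is modeled as an axis-aligned rectangle on the floor, and the helper names are hypothetical. Real coverage patterns are unlikely to be neat rectangles.

```python
from collections import deque


def overlaps(a, b):
    """True if two rectangles (x_min, y_min, x_max, y_max) share some area."""
    return a[0] < b[2] and b[0] < a[2] and a[1] < b[3] and b[1] < a[3]


def tiles_form_one_space(tiles):
    """True if every tile can be reached from the first through overlapping tiles."""
    if not tiles:
        return False
    seen = {0}
    queue = deque([0])
    while queue:
        i = queue.popleft()
        for j in range(len(tiles)):
            if j not in seen and overlaps(tiles[i], tiles[j]):
                seen.add(j)
                queue.append(j)
    return len(seen) == len(tiles)


if __name__ == "__main__":
    # Two 5m x 5m patterns sharing a 0.5m strip, then two with a 1m gap.
    print(tiles_form_one_space([(0, 0, 5, 5), (4.5, 0, 9.5, 5)]))
    print(tiles_form_one_space([(0, 0, 5, 5), (6, 0, 11, 5)]))
```

The first layout passes because the patterns share a strip of floor; the second fails because tracking would drop out in the gap.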

The next logical solution would be to make the entire headset and controller setup fully wireless, but here we run into bandwidth issues. HDMI 2.0 advertises a bandwidth of 18Gbps for video, and the more widespread version (1.4) offers 10.2Gbps. Our current wireless standard (802.11ac) comes nowhere near matching that: at most, we can theoretically squeeze out close to 7Gbps. There are wireless technologies in development, like 802.11ay, that promise the necessary bandwidth (something like 100Gbps), but we have to wait and see on that sort of solution.
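A back-of-the-envelope comparison shows how tight the numbers are. The sketch below estimates the raw, uncompressed video rate for two 1080x1200 panels at 90Hz and compares it with the link rates quoted above. The 24 bits per pixel, the absence of blanking, audio, or protocol overhead, and the `efficiency` factor are all assumptions of ours for illustration, not measured figures.

```python
# Link rates in Gbps as quoted in the article. The wireless figures are
# theoretical peaks, not measured throughput.
LINK_GBPS = {
    "HDMI 2.0": 18.0,
    "HDMI 1.4": 10.2,
    "802.11ac (theoretical)": 7.0,
    "802.11ay (in development)": 100.0,
}


def raw_video_gbps(width=1080, height=1200, panels=2, refresh_hz=90,
                   bits_per_pixel=24):
    """Uncompressed video rate in Gbps, ignoring blanking and overhead."""
    return width * height * panels * refresh_hz * bits_per_pixel / 1e9


def headroom(link_gbps, required_gbps, efficiency=1.0):
    """Ratio of usable link rate to required rate; below 1.0 means it cannot keep up."""
    return link_gbps * efficiency / required_gbps


if __name__ == "__main__":
    required = raw_video_gbps()
    print(f"raw video stream: {required:.2f} Gbps")
    for name, rate in LINK_GBPS.items():
        # efficiency=0.5 is a placeholder guess at real-world throughput.
        print(f"{name}: {headroom(rate, required):.2f}x at peak, "
              f"{headroom(rate, required, efficiency=0.5):.2f}x at half of peak")
```

Under these assumptions the raw stream comes to about 5.6Gbps. Even the theoretical 802.11ac peak clears that only narrowly, and real wireless links deliver well under their theoretical peak, so any realistic efficiency factor leaves 802.11ac short.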

As a final solution, others have suggested strapping a full PC to the user's back. It would have to be a relatively high-powered device to produce the graphics output, and those are usually bulkier than the average laptop. Even then, you would have heat and battery issues to overcome. It appears that, at this point in time, we are just going to have to be content with a 5m x 5m space and keep urging the developers to give us more.

Another idea of interest is using surround sound in the VR area instead of an audio headset. This could have two benefits. First, it would reduce the amount of equipment the user is wearing and make gameplay feel more natural. Second, it would allow the user to stay aware of the real world around them in case something more urgent comes up.

More HTC Vive Display, GPU, & Render Tech

If you’re still reading, you probably want to know as much as possible about how this system works, so we'll go ahead and give you everything else we've got.

One of the most impressive parts of the headset is the set of lenses between your eyes and the OLED screens. To the naked eye, the lenses appear to be sets of concentric circles, but they are actually more complex.

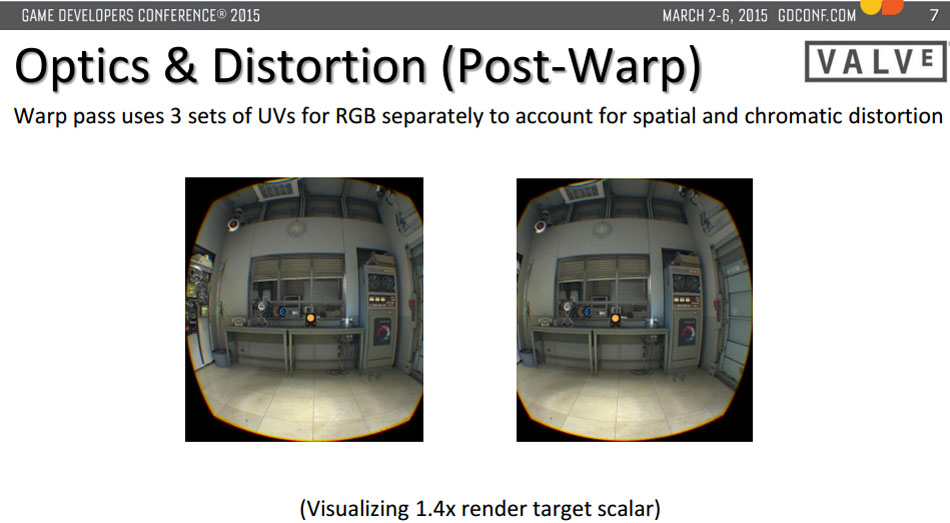

We were told that Valve employs a full-time optics engineer to design just this sort of component, and that this particular one was difficult. After speaking with nVidia’s Tom Petersen, we learned that these lenses must interpolate close-to-eye images in a fashion that creates perceived depth. The GPU renders a regular frame for each screen, and then renders a distorted version of those images so that the lenses, which are similar to Fresnel-type lenses, can deliver the picture to your eyes in a way that they can make sense of comfortably.
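The distortion pass described above is commonly modeled in VR rendering as a radial polynomial warp, and a minimal sketch of that general technique looks like this. We were not told what function the Vive actually uses, so treat the model, the coefficients `k1` and `k2`, and the function name as placeholders rather than Valve's or HTC's method.

```python
def predistort(x, y, k1=0.22, k2=0.24):
    """Map an output pixel position to the position to sample in the regular frame.

    Coordinates are normalized so the lens center is (0, 0). The coefficients
    are illustrative placeholders, not values from the Vive.
    """
    r2 = x * x + y * y
    scale = 1.0 + k1 * r2 + k2 * r2 * r2
    return x * scale, y * scale


if __name__ == "__main__":
    for point in [(0.0, 0.0), (0.5, 0.0), (1.0, 0.0)]:
        sx, sy = predistort(*point)
        print(f"output {point} samples the regular frame at ({sx:.3f}, {sy:.3f})")
```

Because each output pixel samples from farther out the closer it sits to the edge, the displayed image ends up squeezed toward the center (a barrel shape), which is the usual way to offset the pincushion stretch a magnifying lens adds.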

Another topic that could use a bit more attention is the Lighthouse Base Station design. Thanks to two motors that move the internal components, they have about a 120-degree range that allows clean projection from ceiling-to-floor or floor-to-ceiling. The positioning and projecting from the base stations helps to create a virtual wall for the user so they don't run into the actual wall. The software system that manages this virtual wall is currently being called “Chaperone.” Finally, it may be worth noting that the LBSs have a refresh rate of 60 Hz.
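The virtual wall idea can be illustrated with a short sketch. This is not how Chaperone is implemented, which we were not shown; it simply assumes a rectangular 5m x 5m playspace with one corner at the origin and a made-up warning distance, and all names are hypothetical.

```python
def distance_to_wall(x, y, width=5.0, depth=5.0):
    """Distance in meters from (x, y) to the nearest edge of the playspace."""
    return min(x, width - x, y, depth - y)


def should_show_wall(x, y, warn_distance=0.5, width=5.0, depth=5.0):
    """True when the tracked position is within warn_distance of any edge."""
    return distance_to_wall(x, y, width, depth) <= warn_distance


if __name__ == "__main__":
    for position in [(2.5, 2.5), (4.7, 2.5)]:
        near = should_show_wall(*position)
        print(f"{position}: {'show virtual wall' if near else 'clear'}")
```

A user standing in the middle of the space sees nothing, while one who has drifted to within the warning distance of an edge would get the virtual wall.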

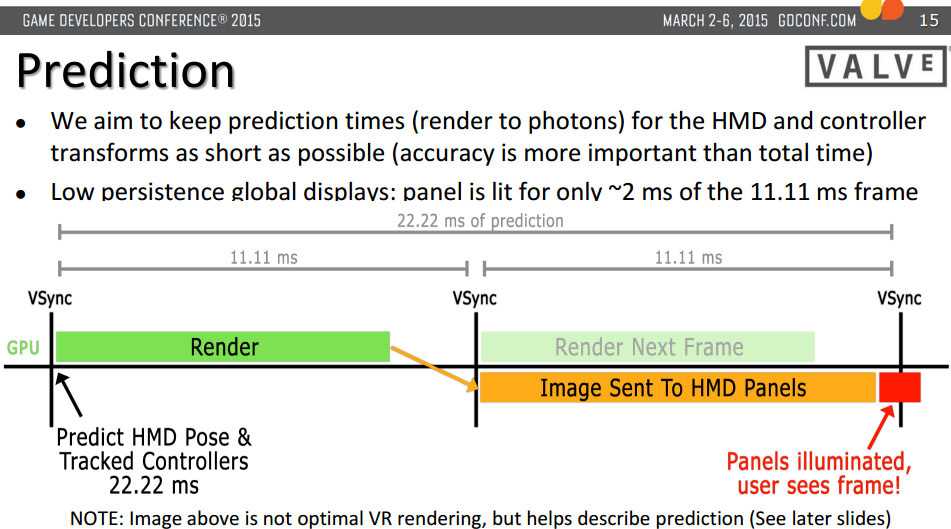

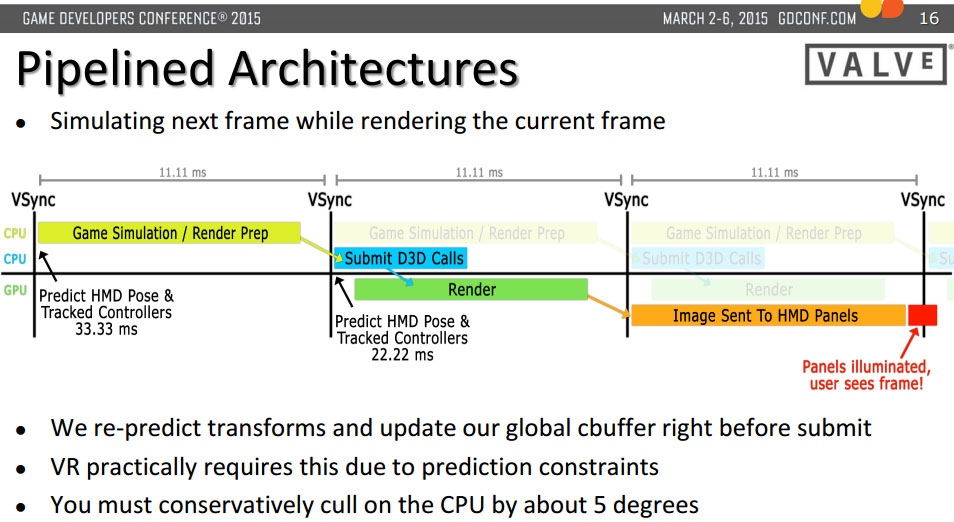

We are all interested in a smooth gaming experience, and one of the biggest challenges for VR has been reducing lag. Valve/HTC wouldn't tell us everything we wanted to know about how they achieved such quick response on their current system, but they did emphasize that this is the third tracking system they have developed. They also mentioned that optimizing the software stack substantially reduced latency.

One more interesting tidbit: We wondered why Valve, a very capable company, partnered with HTC on this venture – so we asked about it. It turns out that it's not easy to convince screen manufacturers to rearrange their assembly lines to produce a thousand screens with unusual specs for a limited run on just a single day. It is also difficult to manufacture hardware when the core of your business is software. Enter HTC. With HTC's help, Valve had better access to the materials they needed in a timelier manner, which significantly aided in reducing development time.

If you have more questions about the hardware and how the system works, please post in the comments below. We'll do our best to answer. For user impressions, check out Editor-in-Chief Steve Burke’s GDC hands-on.

- Patrick Stone.