“Team Red” appears to have been invigorated lately, inspired by unknown forces to “take software very seriously” and improve timely driver roll-outs. The company, which went about half a year without a WHQL driver from 2H14-1H15, has recently stepped up its game-ready driver releases near launch dates, refocused on software, and is marketing its GPU strengths.

The newest video card from AMD bears the R300 series mark, from which we previously reviewed the R9 380 & R9 390 GPUs. AMD's R9 380X 4GB GPU costs $230 MSRP, but retails closer to $240 through board partners, and hosts ~14% more stream processors (2048 vs. 1792) than the championed R9 380 graphics card (~$200 after MIRs). That places the R9 380X in direct competition with nVidia's GTX 960 4GB, priced at roughly $230, and its 2GB alternative at $210.

Today, we're reviewing the Sapphire Nitro version of AMD's R9 380X graphics card, including benchmarks from Battlefront, Black Ops III, Fallout 4, Assassin's Creed Syndicate, and more. The natural head-to-head pits the R9 380X 4GB against the GTX 960 4GB, which we cover in depth below. We'll go into thermals, power consumption, and overclocking on the last page.

Sapphire R9 380X 4GB Video Card Review [Video]

AMD R9 380X Specs & Sapphire Nitro R9 380X

| GamersNexus.net | AMD R9 380X | AMD R9 380 | AMD R9 390 | AMD R9 390X |
|---|---|---|---|---|
| Process | 28nm | 28nm | 28nm | 28nm |
| Stream Processors | 2048 | 1792 | 2560 | 2816 |
| Boosted Clock | 970MHz | 970MHz | 1000MHz | 1050MHz |
| Compute | 3.97TFLOPs | 3.48TFLOPs | 5.1TFLOPs | 5.9TFLOPs |
| TMUs | 128 | 112 | 160 | 176 |
| Texture Fill-Rate | 124.16GT/s | 108.64GT/s | 160GT/s | 184.8GT/s |
| ROPs | 32 | 32 | 64 | 64 |
| Z/Stencil | 128 | 128 | 256 | 256 |
| Memory Configuration | 4GB GDDR5 | 2 & 4GB GDDR5 | 8GB GDDR5 | 8GB GDDR5 |
| Memory Interface | 256-bit | 256-bit | 512-bit | 512-bit |
| Memory Speed | 5.7Gbps | 5.5-5.7Gbps | 6Gbps | 6Gbps |
| Memory Bandwidth | 182.4GB/s | 182.4GB/s | 384GB/s | 384GB/s |
| Power | 2x6-pin | 2x6-pin | 1x8-pin, 1x6-pin | 1x8-pin, 1x6-pin |
| TDP | 190W | 190W | 275W | 275W |
| API Support | DX12, Vulkan, Mantle | DX12, Vulkan, Mantle | DX12, Vulkan, Mantle | DX12, Vulkan, Mantle |
| Launch Price | $230-$240 | $200 | $330 | $430 |
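The Compute and Texture Fill-Rate rows are derived from other rows rather than being independent specs. As a rough sketch, assuming the usual peak-rate conventions (two FP32 operations per stream processor per clock via fused multiply-add, one texel per TMU per clock), this Python snippet recomputes them from the table's stream processor counts, TMU counts, and boost clocks. The `GpuSpec` class is our own illustration, not any vendor tool.

```python
from dataclasses import dataclass


@dataclass
class GpuSpec:
    name: str
    stream_processors: int
    tmus: int
    boost_mhz: float

    def fp32_tflops(self) -> float:
        # SPs x 2 FLOPs per clock (one fused multiply-add) x clock in MHz -> TFLOPs
        return self.stream_processors * 2 * self.boost_mhz / 1e6

    def texture_fill_gtexels(self) -> float:
        # TMUs x 1 texel per clock x clock in MHz -> GT/s
        return self.tmus * self.boost_mhz / 1e3


specs = [
    GpuSpec("R9 380X", 2048, 128, 970),
    GpuSpec("R9 380", 1792, 112, 970),
    GpuSpec("R9 390", 2560, 160, 1000),
    GpuSpec("R9 390X", 2816, 176, 1050),
]

for gpu in specs:
    print(f"{gpu.name}: {gpu.fp32_tflops():.2f} TFLOPs, "
          f"{gpu.texture_fill_gtexels():.2f} GT/s")
```

These are theoretical peaks, not measured performance.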

Note: Our R9 380X comes from Sapphire, which has overclocked the GPU core clock to 1040MHz from the reference 970MHz. The memory clock is also pre-overclocked, to 1500MHz against the 1425MHz reference. Only a 4GB model of the 380X exists; there is no 2GB version.

The R9 380X's TDP is 190W, equivalent to the reference TDP of the R9 380; we benchmark total system power draw toward the end of this review. The memory interface is a 256-bit bus running at a 5.7Gbps reference speed, for a memory bandwidth of 182.4GB/s. Factory-overclocked cards will slightly increase these numbers.
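Sapphire's 1500MHz memory clock is why its card beats these reference numbers. A minimal sketch, assuming GDDR5's effective per-pin data rate is four times the memory clock reported by tools like GPU-Z (which matches the reference pairing of 1425MHz and 5.7Gbps); the function names are hypothetical:

```python
def gddr5_data_rate_gbps(mem_clock_mhz: float) -> float:
    """Effective per-pin data rate in Gbps, assuming 4 transfers per reported clock."""
    return mem_clock_mhz * 4 / 1000


def bandwidth_gb_s(bus_width_bits: int, data_rate_gbps: float) -> float:
    """Peak bandwidth: bus pins x Gbps per pin / 8 bits per byte."""
    return bus_width_bits * data_rate_gbps / 8


reference = bandwidth_gb_s(256, gddr5_data_rate_gbps(1425))  # reference R9 380X
sapphire = bandwidth_gb_s(256, gddr5_data_rate_gbps(1500))   # Sapphire Nitro factory OC
print(f"reference: {reference:.1f} GB/s, Sapphire: {sapphire:.1f} GB/s "
      f"(+{(sapphire / reference - 1) * 100:.1f}%)")
```

By that arithmetic, Sapphire's factory memory overclock works out to roughly 5% more peak bandwidth than reference.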

TMUs are the other main increase over the R9 380, alongside the higher stream processor count and generally higher clockrates from AIBs. The R9 380X is outfitted with 128 texture mapping units (+16 over the 380's 112 TMUs), but the ROP count remains the same: 32 for each.

The same 28nm fab process we've come to know is still used in the R9 380X.

AMD R300 Series Architecture

AMD is using the same GCN architecture we've seen a few times now, so there's no big news here. The one point worth revisiting is AMD's commitment to DirectX 12 and new APIs, like OpenGL Next (“Vulkan”). We recently discussed this with Chris Roberts of Cloud Imperium Games, for followers of Star Citizen.

In its 380X press materials, AMD brought tessellation and tiled resource management to the forefront of discussion. With DirectX 12's takeover imminent, the GCN architecture has the potential to make a stronger showing by way of its Asynchronous Compute Engines (ACEs), designed for DirectX 12's asynchronous shaders. Asynchronous shaders overhaul the pipeline by splitting sizable workloads into multiple smaller parts. These are then processed asynchronously, as the name suggests, so graphics and compute work can run concurrently instead of waiting on one another, ensuring that no single task holds up the processing of others. Larger tasks can be worked on in the background while smaller ones are handled as resources free up.
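To make the idea concrete, here is a deliberately idealized toy model. It is our own simplification, not how AMD's ACEs actually schedule work: a graphics pass keeps only a fraction of the shader units busy, and compute work either waits until the pass finishes (serial) or fills the idle units during it (async). Work is measured in hypothetical "full-GPU milliseconds."

```python
def serial_frame_ms(graphics_ms: float, compute_ms: float) -> float:
    """Compute work runs only after the graphics pass finishes."""
    return graphics_ms + compute_ms


def async_frame_ms(graphics_ms: float, graphics_utilization: float,
                   compute_ms: float) -> float:
    """Compute work fills shader units the graphics pass leaves idle.

    graphics_utilization is the fraction of units the graphics pass keeps
    busy (0 < u <= 1). Compute work that doesn't fit into the idle capacity
    runs afterward at full occupancy.
    """
    idle_capacity = (1.0 - graphics_utilization) * graphics_ms
    leftover = max(0.0, compute_ms - idle_capacity)
    return graphics_ms + leftover


# Hypothetical frame: a 10 ms graphics pass using 70% of the units, plus 2 ms of compute
print(serial_frame_ms(10, 2))       # 12
print(async_frame_ms(10, 0.7, 2))   # 10.0 (compute hides in the idle units)
```

The gain in this model depends entirely on how much capacity the graphics pass leaves idle; a pass that already saturates the GPU (utilization of 1.0) gets no benefit.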

Support for DX12's asynchronous processing isn't new to the 380X, but it is a strength to which AMD has aptly called attention.

As stated in the initial R300 series launch, some overall changes have been made to the architecture to push this line beyond “rebrand” and into “refresh” territory. Primarily, those changes are summed up in the form of thermal envelope reduction and a slightly bolstered clockrate over the R200 series equivalents. This makes for reasonable gains in performance when cards are coupled with stable drivers, something we didn't have for the R9 390 at launch. We'll talk about AMD's driver status further down.

Sapphire R9 380X Cooler Design

Sapphire's R9 380X uses the company's “Nitro” cooler design, introduced as a “gamer-targeted” brand alongside the R300 series. In Sapphire's vision, Nitro cards hit market close to MSRP and appeal most to gamers who don't care to do much tweaking or overclocking. That's not to say the cards can't overclock (we'll test that later), but they aren't targeted at overclockers.

The Sapphire R9 380X uses four copper heatpipes routed through an aluminum finned sink, fairly standard, and is fitted with two ~90mm fans for dissipation. The two fans have a sort of “cheap plastic” feel to them, but the cooler overall is relatively high-quality. This is something we discussed in the R9 380/390 Nitro reviews.

Sapphire has mounted a backplate on its 380X (with the text right-side up, even) and uses a futuristic white/black/gray color scheme. We like the overall styling of the backplate, but aesthetics generally aren't this website's focus; we'll leave the rest to you, told through the photos in this post.

Test Methodology

We tested using both of our GPU test benches, as we are in the process of merging into a single GPU test bench. Both are detailed in the tables below. Our thanks to supporting hardware vendors for supplying some of the test components.

The latest AMD Catalyst drivers (15.11.1) were used for testing, except for the Fury X and R9 380/390, which were on loan and whose results have not been updated since ~15.7. NVidia's 359.00 drivers were used for testing the latest games. Game settings were manually controlled for the DUT (device under test). All games were run at presets defined in their respective charts. We disable brand-supported technologies in games, like The Witcher 3's HairWorks and HBAO. GRID: Autosport saw custom settings with all lighting enabled. All other game settings are defined in respective game benchmarks, which we publish separately from GPU reviews. Our test courses, in the event manual testing is executed, are also uploaded within that content. This allows others to replicate our results by studying our bench courses.

Each game was tested for 30 seconds in an identical scenario, then repeated three times for parity.
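One way to fold repeated passes into a single reported number, while catching a pass that went wrong, is sketched below. The `combine_passes` name and the 3% spread threshold are hypothetical choices for illustration, not a documented part of this methodology.

```python
from statistics import mean


def combine_passes(avg_fps_per_pass: list[float],
                   max_spread_pct: float = 3.0) -> tuple[float, float]:
    """Return (mean FPS, spread %) across passes; reject noisy sets."""
    m = mean(avg_fps_per_pass)
    # Spread: gap between the best and worst pass, relative to the mean
    spread_pct = (max(avg_fps_per_pass) - min(avg_fps_per_pass)) / m * 100
    if spread_pct > max_spread_pct:
        raise ValueError(f"passes disagree by {spread_pct:.1f}%; rerun the test")
    return m, spread_pct


# Three hypothetical 30-second passes of the same scene
print(combine_passes([54.2, 53.8, 54.0]))
```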

Z97 bench:

| GN Test Bench 2015 | Name | Courtesy Of | Cost |
|---|---|---|---|
| Video Card | This is what we're testing! | - | - |
| CPU | Intel i7-4790K CPU | CyberPower | $340 |
| Memory | 32GB 2133MHz HyperX Savage RAM | Kingston Tech. | $300 |
| Motherboard | Gigabyte Z97X Gaming G1 | GamersNexus | $285 |
| Power Supply | NZXT 1200W HALE90 V2 | NZXT | $300 |
| SSD | HyperX Predator PCI-e SSD | Kingston Tech. | TBD |
| Case | Top Deck Tech Station | GamersNexus | $250 |
| CPU Cooler | Be Quiet! Dark Rock 3 | Be Quiet! | ~$60 |

X99 Bench:

| GN Test Bench 2015 | Name | Courtesy Of | Cost |
|---|---|---|---|
| Video Card | This is what we're testing! | - | - |
| CPU | Intel i7-5930K CPU | iBUYPOWER | $580 |
| Memory | Kingston 16GB DDR4 Predator | Kingston Tech. | $245 |
| Motherboard | EVGA X99 Classified | GamersNexus | $365 |
| Power Supply | NZXT 1200W HALE90 V2 | NZXT | $300 |
| SSD | HyperX Savage SSD | Kingston Tech. | $130 |
| Case | Top Deck Tech Station | GamersNexus | $250 |
| CPU Cooler | NZXT Kraken X41 CLC | NZXT | $110 |

Average FPS, 1% low, and 0.1% low times are measured. We do not measure maximum or minimum FPS results, as we consider these numbers to be pure outliers. Instead, we take an average of the lowest 1% of results (1% low) to show real-world, noticeable dips; we then take an average of the lowest 0.1% of results for severe spikes. Anti-aliasing was disabled in all tests except GRID: Autosport, which looks significantly better with its default 4xMSAA, and Black Ops III. HairWorks was disabled where present. Manufacturer-specific technologies were used when present (CHS, PCSS).
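The 1% and 0.1% low metrics can be sketched in a few lines of Python. This assumes the raw data is a list of per-frame frametimes in milliseconds, converts each frame to an instantaneous FPS value, and averages the slowest 1% or 0.1% of frames; the rounding rule (floor, with at least one frame) is our assumption, not a documented detail of this methodology.

```python
import math


def fps_metrics(frametimes_ms: list[float]) -> dict[str, float]:
    """Average FPS plus the 1% and 0.1% lows from per-frame frametimes (ms)."""
    # Instantaneous FPS per frame, sorted slowest first
    fps = sorted(1000.0 / ft for ft in frametimes_ms)

    def low_avg(fraction: float) -> float:
        # Average of the slowest `fraction` of frames (at least one frame)
        n = max(1, math.floor(len(fps) * fraction))
        return sum(fps[:n]) / n

    # Average FPS = frames rendered / total seconds elapsed
    avg = len(frametimes_ms) / (sum(frametimes_ms) / 1000.0)
    return {"avg": avg, "1% low": low_avg(0.01), "0.1% low": low_avg(0.001)}


# Hypothetical capture: 995 frames at 10 ms plus 5 hitches at 50 ms
metrics = fps_metrics([10.0] * 995 + [50.0] * 5)
print(metrics)
```

Average FPS here is total frames divided by total time. Simply averaging the per-frame FPS values would overstate the result whenever frametimes vary, since the arithmetic mean of FPS is never lower than frames-over-time.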

- Battlefront test methodology can be found here.

- Black Ops III test methodology is here.

- The Witcher 3 test methodology is here.

- Fallout 4 test methodology is here.

The Assassin's Creed: Syndicate methodology will go live shortly after this post, but to recap, we tested these settings:

- 1080p / ultra high: MSAA2X + FXAA enabled. SSAO (not HBAO+) and “High” shadows configured to ensure fair benchmarks.

- 1080p / ultra custom: FXAA instead of MSAA. SSAO. “High” shadows.

- 1440p / ultra custom: as above.

- 4K / ultra custom: as above.

Overclocking was performed incrementally using MSI Afterburner, and GPU-Z was used to verify that each overclock actually applied. Each overclock was applied and tested for five minutes and, if it passed, incremented to the next step. Once a failure was provoked or instability found -- either through flickering / artifacts or through a driver failure -- we stepped down the OC and ran a 30-minute endurance test using 3DMark's FireStrike Extreme on loop (GFX test 2).
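The stepping procedure maps onto a simple search loop. In this sketch, `passes_short_test` and `passes_endurance_test` are hypothetical callbacks standing in for the manual checks (watching for artifacts or driver crashes during short runs, then the FireStrike Extreme endurance loop); the 25MHz step and 10MHz back-off are illustrative values only.

```python
from typing import Callable


def find_stable_offset(
    passes_short_test: Callable[[int], bool],
    passes_endurance_test: Callable[[int], bool],
    step_mhz: int = 25,
    back_off_mhz: int = 10,
    max_offset_mhz: int = 300,
) -> int:
    """Return the highest core offset (MHz) that survives both test stages."""
    offset = 0
    # Climb in fixed steps until the first short-test failure or the slider limit
    while offset + step_mhz <= max_offset_mhz and passes_short_test(offset + step_mhz):
        offset += step_mhz
    # From the last passing offset, back off until the endurance run also passes
    while offset > 0 and not passes_endurance_test(offset):
        offset -= back_off_mhz
    return max(offset, 0)


# Hypothetical card: short runs pass up to +105MHz, endurance only up to +85MHz
print(find_stable_offset(lambda o: o <= 105, lambda o: o <= 85))
```

Smaller steps trade more test time for a closer approach to the card's true limit.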

Thermals and power draw were both measured using our secondary test bench, which we reserve for this purpose. Thermals are measured using AIDA64, and an in-house automated script ensures identical start and end times for each test. 3DMark FireStrike Extreme is run on loop for 25 minutes and logged. Parity is checked with GPU-Z.

Thermals, power, and overclocking were all conducted on the Z97 bench above.

Video Cards Tested

- MSI R9 390X Gaming 8GB ($430)

- Sapphire R9 390 ($340)

- Sapphire R9 380 ($220)

- PowerColor R9 290X 4GB (Deprecated)

- XFX R9 285 2GB ($206)

- AMD R9 270X 2GB Reference (Deprecated)

- ASUS R7 250X 1GB ($117)

- NVidia GTX 980 Ti 6GB Reference (& SLI) ($660)

- MSI GTX 980 4GB Gaming ($515)

- EVGA GTX 970 SSC 4GB ($315)

- EVGA GTX 960 SSC 4GB ($225)

- ASUS GTX 960 Strix 2GB ($200)

- ASUS GTX 950 Strix 2GB ($170)

- EVGA GTX 750 Ti 2GB ($125)

- GTX 770 (Deprecated)

AMD Driver Stabilization

Shortly after the Fury X launched and our review went live alongside everyone else's, AMD seemed to get more serious about driver support. The company has picked up the pace on game-specific drivers (though it's still a little slow at times, as with Fallout 4) and just recently announced a plan to deliver six WHQL drivers per year. That announcement, alongside the Radeon Software update, shows a renewed commitment to software support.

As we ran the cards through the bench in this review, we encountered zero visible issues (flickering, black screens, crashes) attributable to AMD's drivers or software. This is huge, as that's the first time I've written those words in a recent AMD GPU review. The drivers are stabilizing. Speed needs to be worked on a bit still – AMD should push harder for day-one (or day-zero, really) readiness – but it's getting close to that target.

We commend AMD for finally getting its priorities straight and working on its software-driver distribution.

X99 Benchmarks – Newer Games, but No R9 380 or R9 390

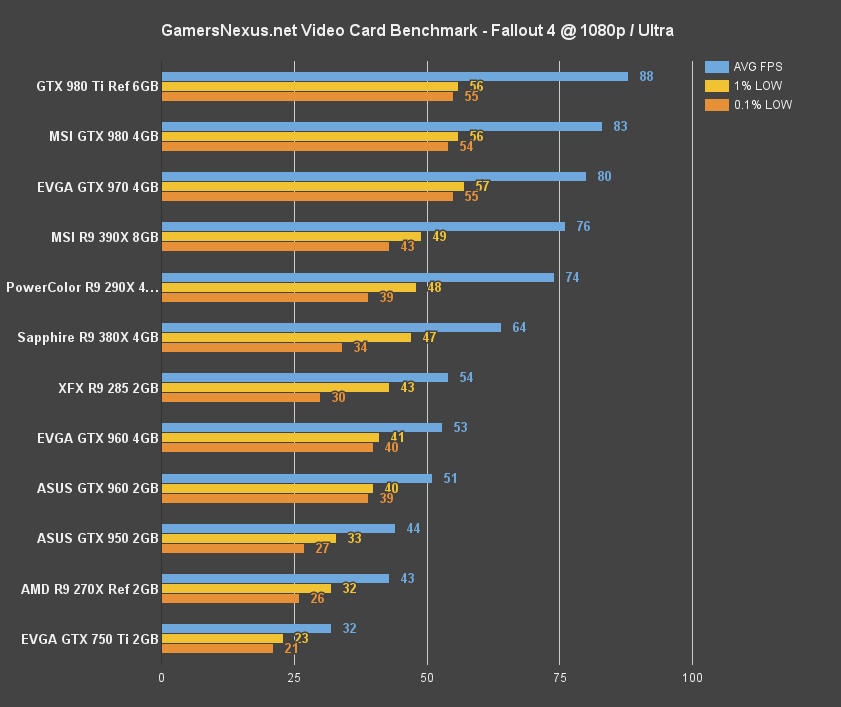

This was discussed in the test methodology, but it's worth repeating here: we're transitioning between two test benches right now, but we wanted to bring you all the latest game benchmarks. That includes Assassin's Creed: Syndicate (launched today) and Fallout 4, both tested on our X99 platform. The rest of the games were tested on the Z97 platform.

Fallout 4 Benchmark - AMD R9 380X vs. GTX 960, GTX 970

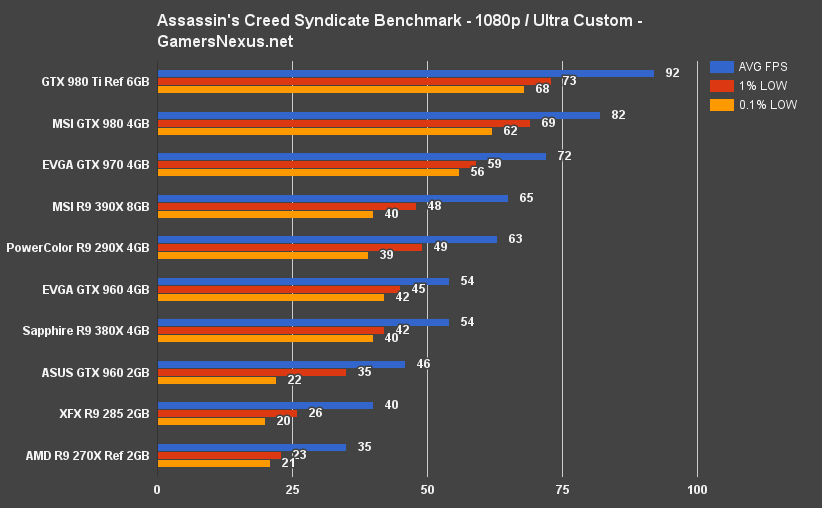

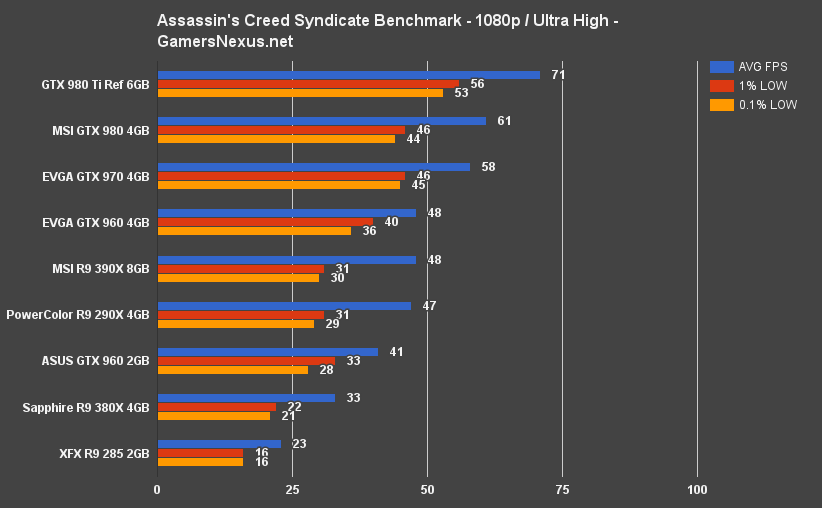

Assassin's Creed Syndicate Benchmark - AMD R9 380X vs. GTX 960, GTX 970

See the methodology page to understand what our settings were and why we made the listed changes.

In AC: Syndicate, 1080p at “Ultra Custom” pushes the 380X ($230) to about 54FPS average, with reasonable 1% and 0.1% lows at 42 and 40FPS, respectively. The GTX 960 4GB ($230), the 380X's direct price competitor, sits at 53FPS AVG and 46/42FPS lows. It's a tight race that ends with AMD about 1.87% ahead: outside of margin of error, but not by much.
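Percentage gaps depend on which card is the baseline, and the FPS figures quoted in the text are rounded, so recomputing from them won't always match chart-derived percentages exactly. This small helper shows both framings for the AC: Syndicate 1080p averages; the function names are our own.

```python
def pct_ahead(a_fps: float, b_fps: float) -> float:
    """How far card A leads card B, as a percentage of B."""
    return (a_fps - b_fps) / b_fps * 100


def pct_behind(a_fps: float, b_fps: float) -> float:
    """How far card B trails card A, as a percentage of A."""
    return (a_fps - b_fps) / a_fps * 100


# AC: Syndicate 1080p "Ultra Custom", rounded averages from the text
r9_380x, gtx_960_4gb = 54, 53
print(f"380X ahead by {pct_ahead(r9_380x, gtx_960_4gb):.2f}%")   # 1.89%
print(f"960 behind by {pct_behind(r9_380x, gtx_960_4gb):.2f}%")  # 1.85%
```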

Assassin's Creed Syndicate, as you'll find in our next article – publishing almost immediately after this one – is interesting primarily for its disparity shown between 2GB and 4GB cards. The GTX 960 4GB and GTX 960 2GB are devices we've previously shown to output largely disparate performance in the Assassin's Creed series, but we also noted that some games simply don't care about the extra 2GB VRAM. For the most part, the cards perform nearly identically. A few shining stars, like Assassin's Creed Syndicate and Unity, show a massive performance delta between the two (16%, in this case). The 2GB GTX 960, then, gets thoroughly trounced by the 4GB R9 380X and 4GB GTX 960.

The R9 380X retains a lead through 1440p, but it's sort of irrelevant – 38FPS and ~27FPS lows don't exactly make an enjoyable experience.

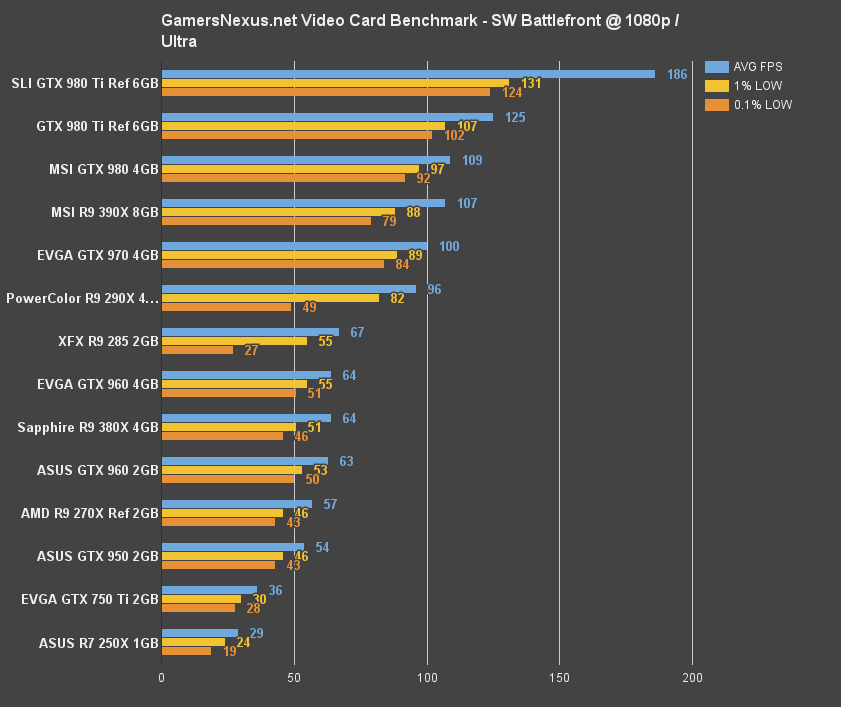

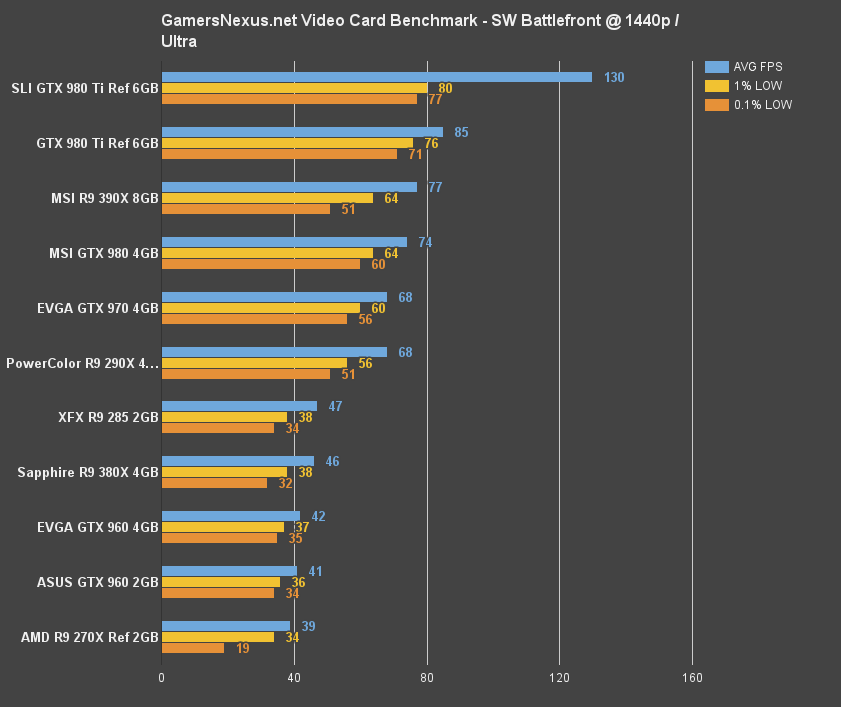

Star Wars Battlefront Benchmark - AMD R9 380X vs. GTX 960 2GB, 4GB, etc.

The GTX 960 cards seem about tied with the R9 380X at 1080p Ultra in Star Wars Battlefront, with all three devices pulling a decent framerate for the multiplayer FPS.

Z97 Benchmarks

This is the platform we're transitioning away from, though we still use it for some of the “core” benchmarks.

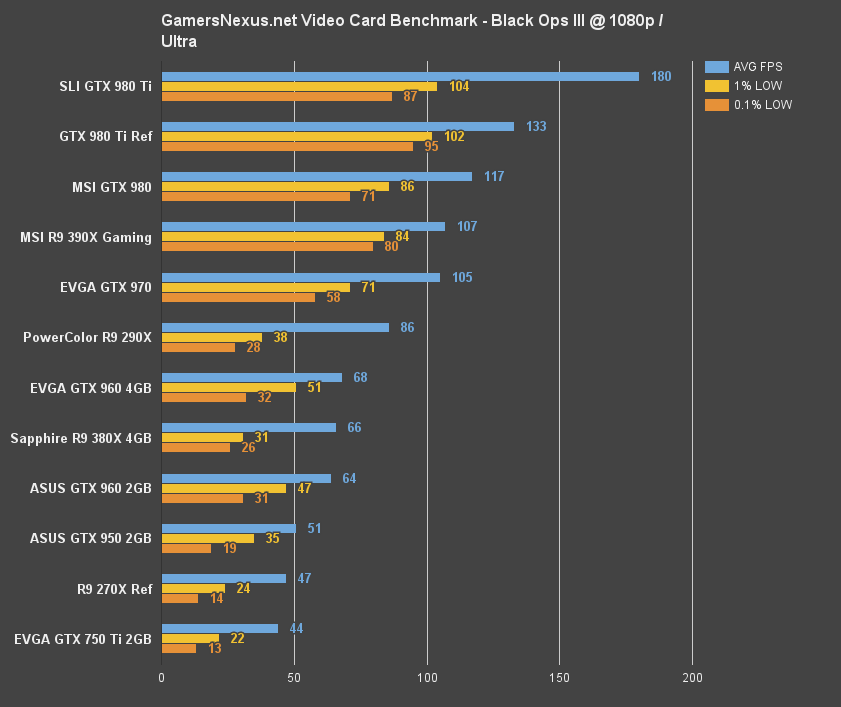

COD: Black Ops III Benchmark - AMD R9 380X vs. 390X, GTX 960, GTX 970

First off, check our Black Ops III optimization guide to fully understand all of the graphics settings.

At 1080p / ultra, the R9 380X sits at a playable 66FPS average, though with somewhat harsh lows. It's flanked by the GTX 960 cards, with the 4GB model outperforming it by 2FPS AVG (2.99%) and the 2GB model underperforming it by 2FPS (~3%).

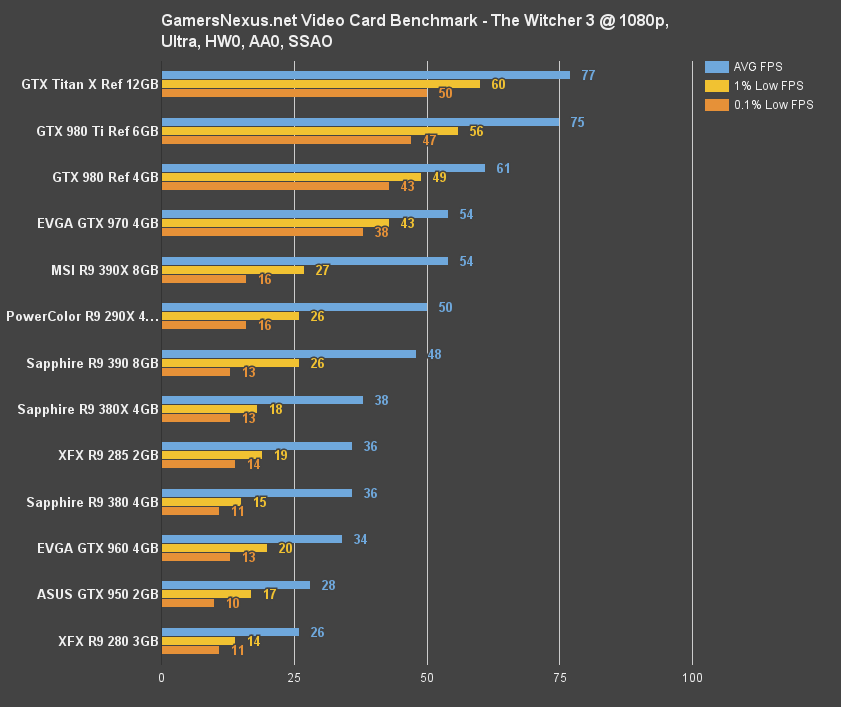

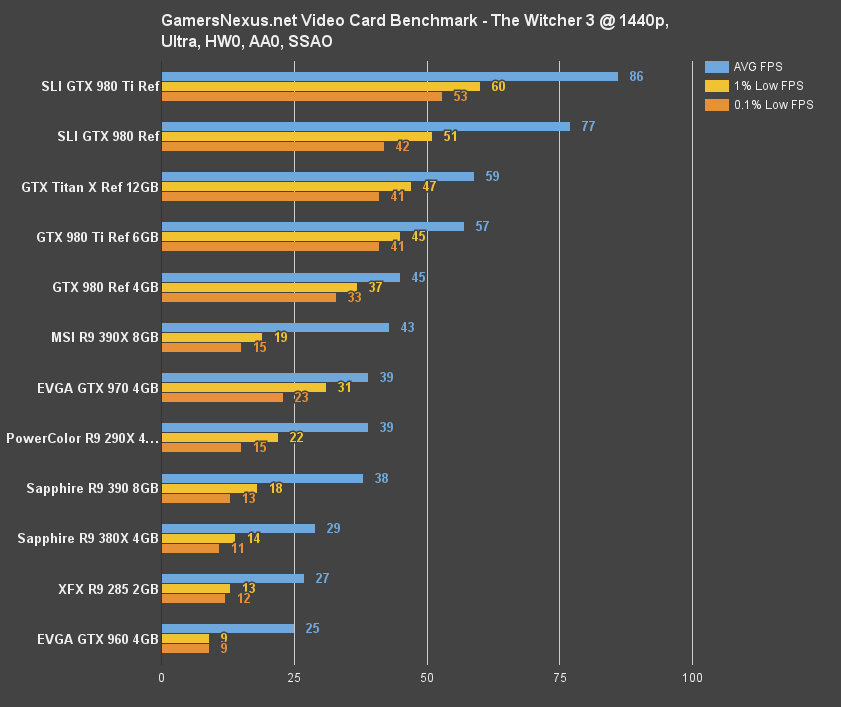

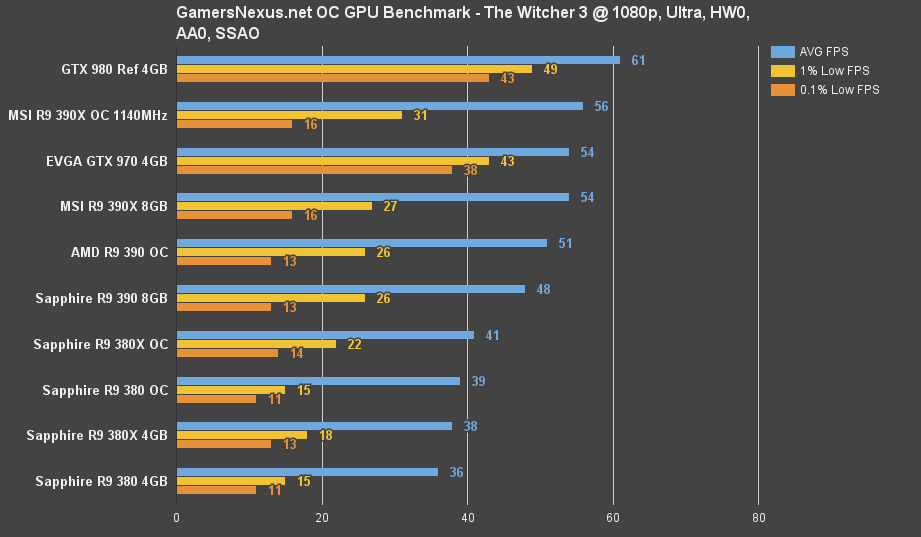

The Witcher 3 Benchmark - AMD R9 380X vs. R9 390, R9 380, GTX 960, GTX 970

Running the Witcher 3 at 1080p / ultra, with modifications mentioned in the methodology, the R9 380X pushes to within 23% of the R9 390 ($320), but only sits 2FPS (5.4%) above the R9 380 ($200). The GTX 960 rests at 34FPS. Lower settings are required for playability, though the 380X sits in the lead here.

1440p performance is no good, for the most part. You're generally not going to be using the 380X for 1440p or 4K gaming at high settings; it's just too much for the 1080p-focused card to handle.

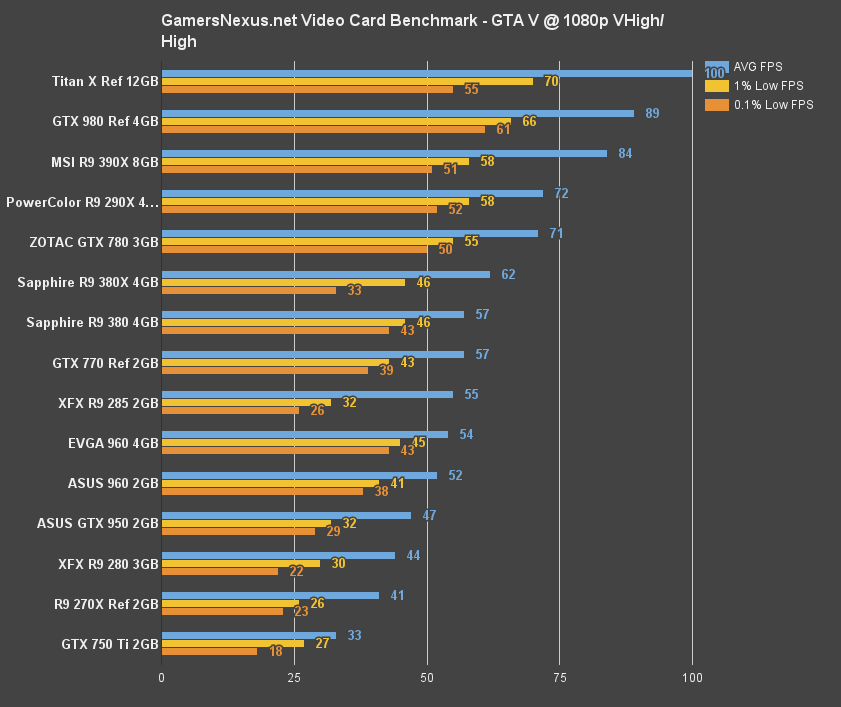

GTA V Benchmark - AMD R9 380X vs. R9 390, R9 380, GTX 960, GTX 970

GTA V shows one of the biggest gaps between the R9 380X and the GTX 960 4GB. With a 63FPS vs. 54FPS ranking (380X vs. 960, respectively), a ~13% margin appears that heavily favors AMD. The 380, at 57FPS, is about 8.4% behind the R9 380X.

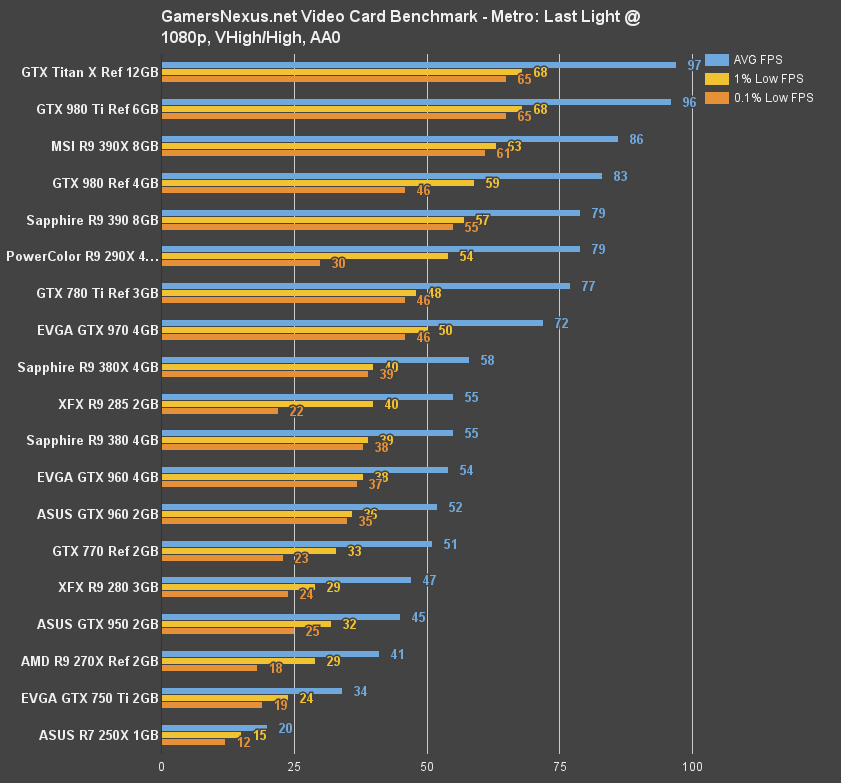

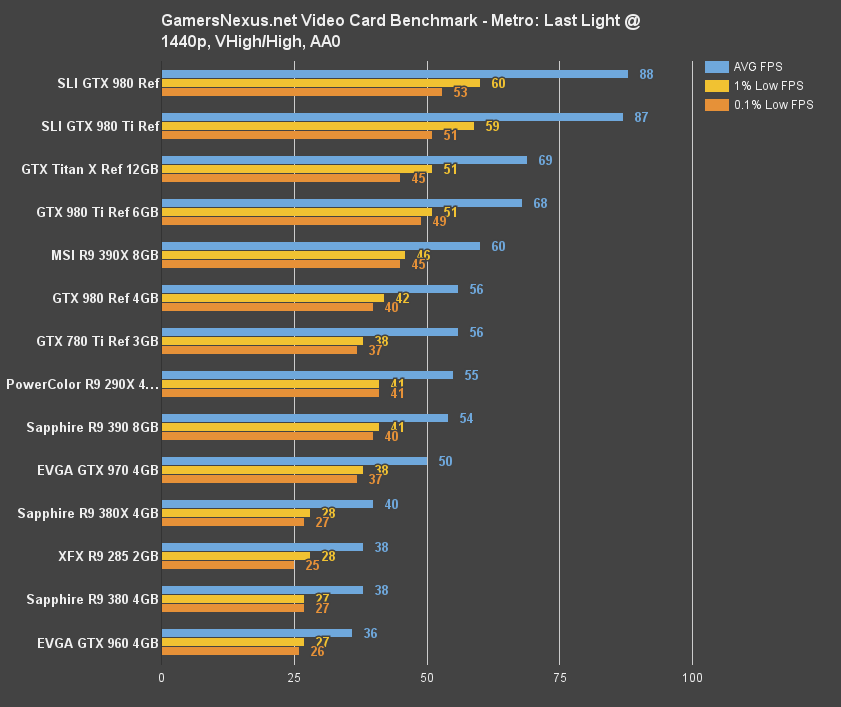

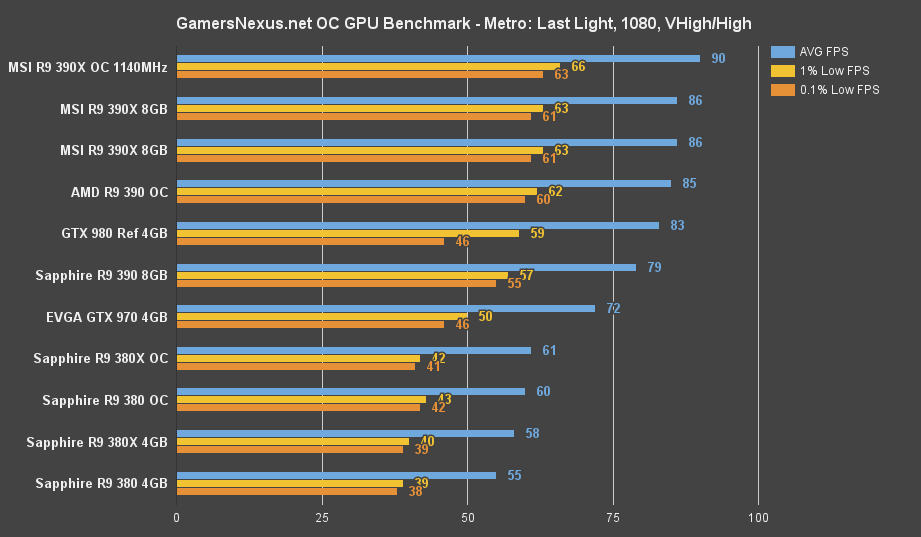

Metro: Last Light Benchmark - AMD R9 380X vs. R9 390, R9 380, GTX 960, GTX 970

The 380X maintains its ~5% lead over the R9 380 – not really an impressive jump for ~$30-$40, but that's enough to keep the 380X ahead of the GTX 960 cards. Metro: Last Light is getting a little long in the tooth these days, but still offers an extremely reliable benchmark with near-perfect reproduction of results.

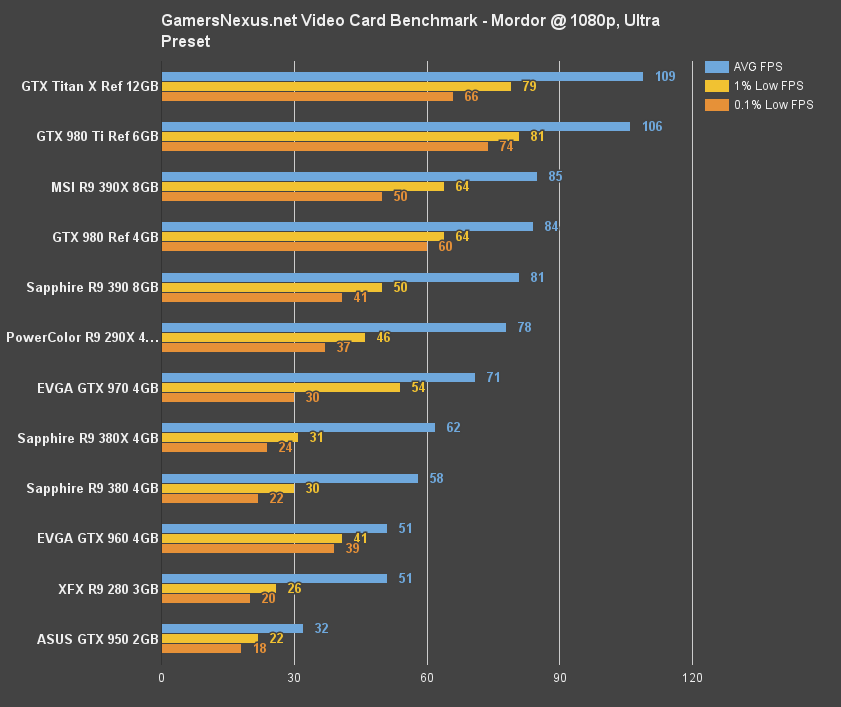

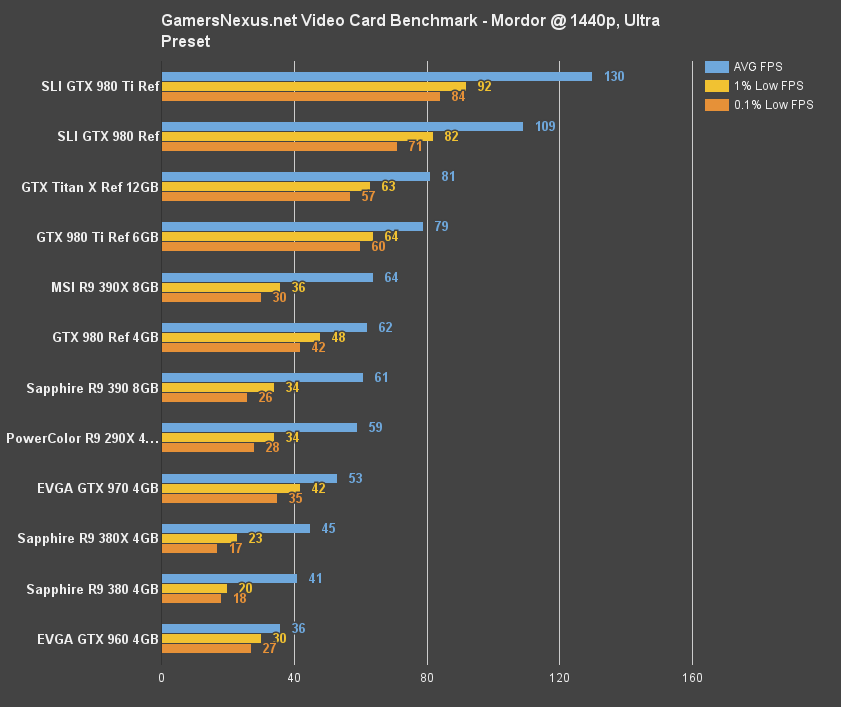

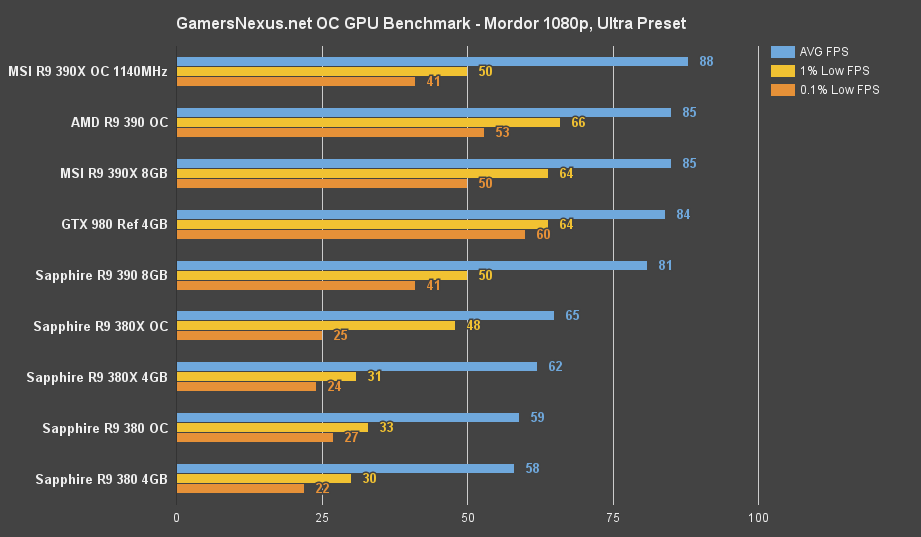

Shadow of Mordor Benchmark - AMD R9 380X vs. R9 390, R9 380, GTX 960, GTX 970

Shadow of Mordor plants the 380X ~6.7% ahead of the R9 380, and a staggering 19% ahead of the GTX 960's 51FPS. Note well, though, that the GTX 960 maintains significantly stronger 1% and 0.1% lows that will improve smoothness of frame delivery; AMD has work to do in this department. Granted, 62FPS over 51FPS is a large gap.

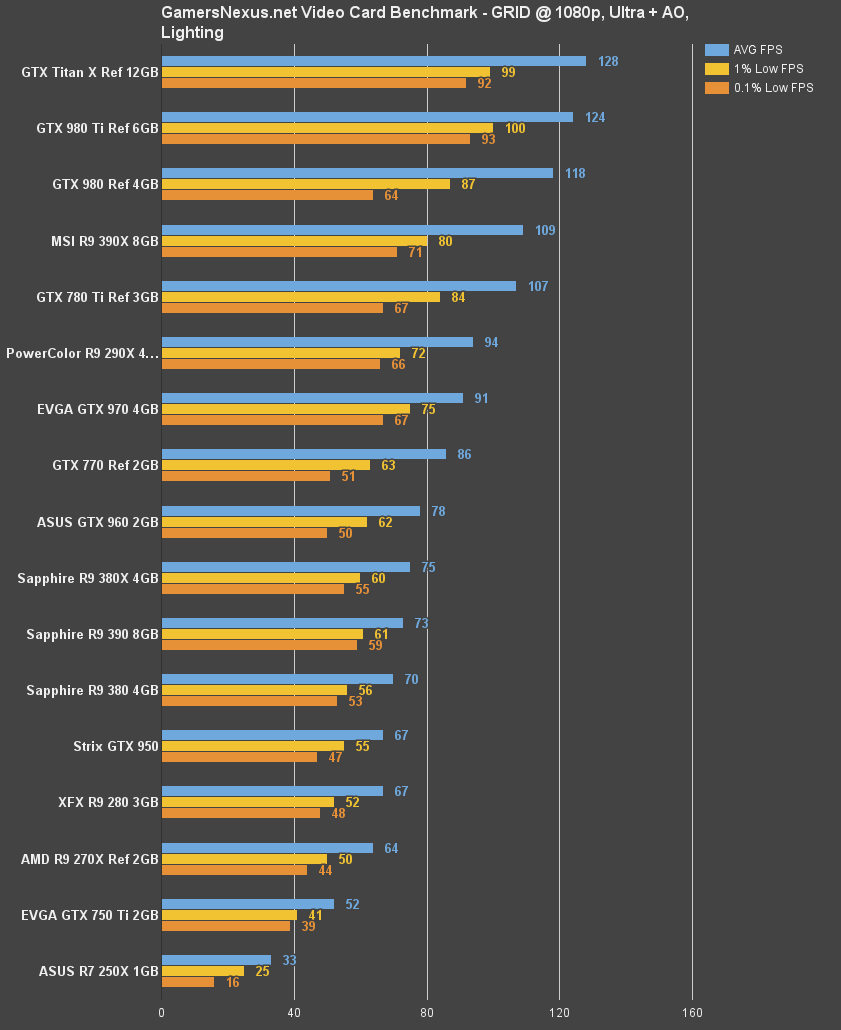

GRID: Autosport - AMD R9 380X vs. R9 390, R9 380, GTX 960, GTX 970

Talk about long in the tooth, but we're still using GRID for now – it's easy to run and reliable.

The R9 380X is 6.9% ahead of the R9 380 here, and 3.9% behind the GTX 960 (only 2GB shown). Blows get traded between the 380X and 960 on some benchmarks.

Let's move on to power, thermals, overclocking, and the conclusion.

AMD R9 380X Temperatures & Power Consumption

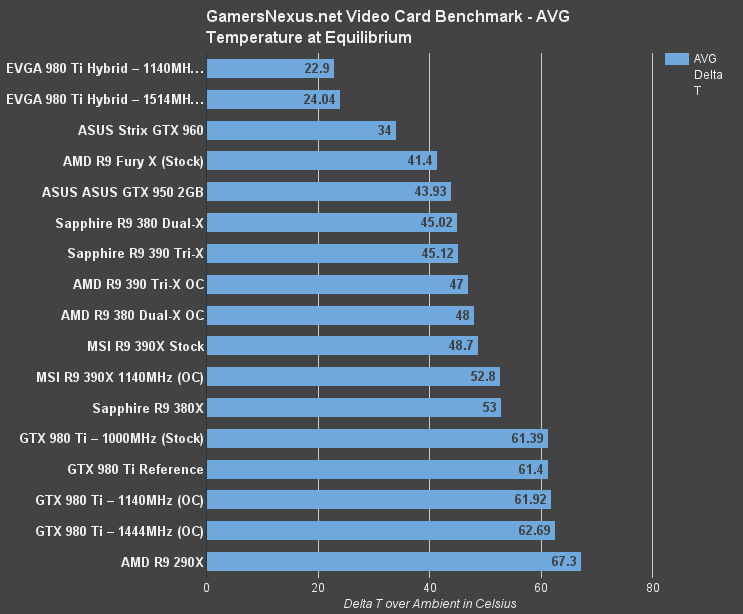

The R9 380X operates at 53C delta T over ambient, measured once equilibrium is achieved. Comparatively, the R9 380 “Dual-X” (also from Sapphire) ran 8C cooler, at 45C. These temperatures are entirely contingent on the AIB, some of which implement far superior cooling to their competition's, so truly comparing cross-brand models would require more cards than we have access to. The GTX 960 will generally run cooler than the R9 380X, if for no other reason than its lower power consumption.
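Delta T over ambient subtracts room temperature from each GPU reading, so results stay comparable across days with different ambient conditions. A minimal sketch, assuming a log of (seconds, GPU temp, ambient temp) rows and approximating "equilibrium" as the average of the final five minutes of a load run; the window length and the synthetic log are hypothetical.

```python
def delta_t_over_ambient(samples: list[tuple[float, float, float]],
                         window_s: float = 300.0) -> float:
    """Mean (GPU temp - ambient) over the final `window_s` seconds of the log.

    samples: (elapsed_seconds, gpu_temp_c, ambient_temp_c), in time order.
    """
    end_time = samples[-1][0]
    tail = [gpu - ambient for t, gpu, ambient in samples
            if t >= end_time - window_s]
    return sum(tail) / len(tail)


# Synthetic 25-minute log sampled every 10 s: the GPU warms for 10 minutes,
# then holds at 74C in a 21C room
log = [(t, 40.0 + min(t, 600) * 34.0 / 600, 21.0) for t in range(0, 1501, 10)]
print(f"{delta_t_over_ambient(log):.1f}C over ambient")
```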

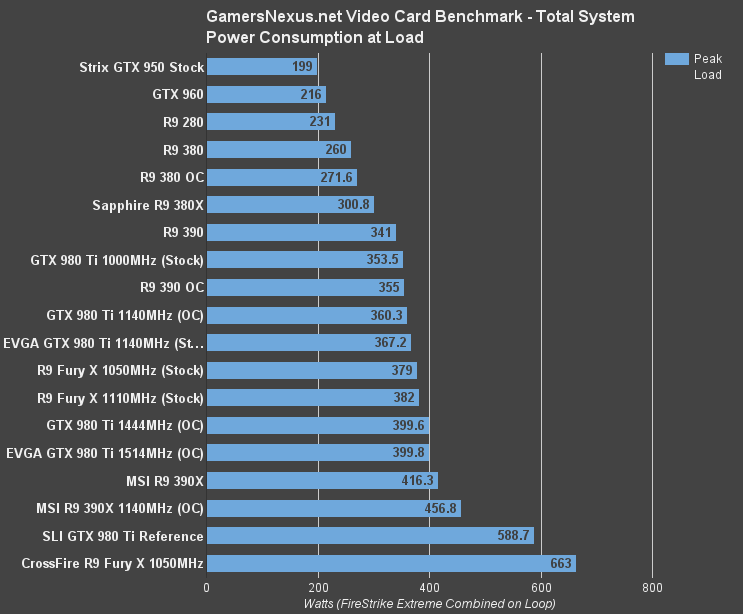

Total system power consumption lands the R9 380X configuration at 300.8W, about 40W below the R9 390, ~29W above the overclocked R9 380, and 40W above the stock R9 380. The ASUS GTX 960 we tested draws about 216W, a marked 84.8W less.
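These are whole-system readings (CPU, motherboard, and so on included), so a percentage computed from them describes the system, not the graphics card alone. A quick sketch using the two totals quoted above; `power_delta` is a hypothetical helper.

```python
def power_delta(system_w: float, baseline_w: float) -> tuple[float, float]:
    """Return (watts difference, % difference) relative to baseline_w."""
    diff = system_w - baseline_w
    return diff, diff / baseline_w * 100


diff_w, diff_pct = power_delta(216.0, 300.8)  # ASUS GTX 960 system vs. Sapphire R9 380X system
print(f"GTX 960 system: {diff_w:+.1f}W ({diff_pct:+.1f}%) vs. R9 380X system")
```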

AMD R9 380X Overclocking Results

For overclocking, we first set the power percent target to its maximum value, then adjust voltage. We avoid maxing out voltage where possible, as it eats into the total TDP available to the card's clocks. We then slowly increment clockrate, watching for visual artifacting or catastrophic failures throughout the process. Each increment is left running for only a few minutes before we move to the next step. Eventually we hit a driver failure, at which point the clockrate is backed down, then endurance tested for 25 minutes using 3DMark Firestrike Extreme on loop.

With the R9 380X, we presently have no voltage control, and so left voltage untouched. The R9 380X allows 120% of base power to be supplied to the GPU for overclocking, utilized alongside the clock stepping shown below:

AMD R9 380X Overclock Stepping - GamersNexus.net

| CLK Offset (MHz) | Max CLK (MHz) | Mem Offset (MHz) | Mem CLK (MHz) | PWR Offset (%) | Initial Test | Endurance? |
|---|---|---|---|---|---|---|
| 0 | 1040 | 0 | 1500 | 0 | Y | - |
| 50 | 1090 | 0 | 1500 | 20 | Y | - |
| 80 | 1120 | 0 | 1500 | 20 | Y | - |
| 105 | 1145 | 0 | 1500 | 20 | Y | - |
| 105 | 1145 | 50 | 1550 | 20 | Y | - |
| 105 | 1145 | 75 | 1575 | 20 | Y | - |
| 120 | 1200 | 75 | 1575 | 20 | N | - |
| 105 | 1145 | 75 | 1575 | 20 | N | - |
| 90 | 1130 | 50 | 1550 | 20 | Y | N |
| 80 | 1120 | 50 | 1550 | 20 | Y | Y |
| 80 | 1120 | 75 | 1575 | 20 | Y | N |
| 85 | 1125 | 50 | 1550 | 20 | Y | Y |

We had to revisit a few CLK Offsets after spotty stability, eventually settling on +85MHz (1125MHz max) core clock and +50MHz memory offset (1550MHz).
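Afterburner offsets are relative to the card's own factory clocks, so the absolute clocks in the table are base plus offset. A quick Python check of the final stable settings, assuming Sapphire's 1040MHz core and 1500MHz memory clocks as the base; `apply_offset` is a hypothetical helper.

```python
FACTORY_CORE_MHZ = 1040  # Sapphire Nitro factory core clock
FACTORY_MEM_MHZ = 1500   # Sapphire Nitro factory memory clock


def apply_offset(base_mhz: int, offset_mhz: int) -> tuple[int, float]:
    """Return (absolute clock in MHz, % gain over base_mhz)."""
    return base_mhz + offset_mhz, offset_mhz / base_mhz * 100


core_mhz, core_gain = apply_offset(FACTORY_CORE_MHZ, 85)  # final stable core offset
mem_mhz, mem_gain = apply_offset(FACTORY_MEM_MHZ, 50)     # final stable memory offset
print(f"core {core_mhz}MHz (+{core_gain:.1f}%), memory {mem_mhz}MHz (+{mem_gain:.1f}%)")
```

Measured against AMD's 970MHz reference clock instead, the same 1125MHz core works out to roughly 16% higher, so the baseline matters when quoting overclock percentages.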

AMD R9 380X Overclocking Benchmarks

The Witcher 3 sees a small FPS gain following the overclock, calculated out as about 7.59%. Not bad, but certainly limited. That gain shrinks to 5% with Metro: Last Light and 4.7% with Shadow of Mordor.

Overclocking, as we said in the Fury X and R9 380/390 reviews, is simply not worth it. You get a ~8% core clock OC, at best, and between 5% and 8% FPS boost. That comes down to a couple FPS, which isn't worth the stability / longevity risk and increased heat output.

Conclusion

AMD's R9 380X is a card we feel confident recommending. The 380X uses known architecture, so it's a bit boring in that department, but the better-late-than-never driver stability improvements make it a serious challenger to the 960. In this arena, the R9 380X wins out in enough games (sometimes by big margins, though generally closer to ~5-10%) that it's not another case of “AMD comes close.” No, with the 380X, AMD actually does beat the competition's pure FPS output in a number of games. Black Ops III shows the 960 4GB slightly ahead, but GTA V and many of the others above favor the 380X.

We've got to give nVidia credit where due, of course: The GTX 960 is still ~85W slimmer than the R9 380X, runs cooler, and does have better tools available to owners with GFE and ShadowPlay. AMD's Raptr Gaming Evolved software is, frankly, abysmal in its functionality, interface, and performance. We remove it on all AMD PCs we build. Speaking personally, I used to vehemently stand against GeForce Experience, too; I saw it as unnecessary bloat, of the mind that “I'll set my own settings like we did back in my time, gorrammit!” I still do that, since I can do a better job than GFE and enjoy the process of settings management, but the suite does have some useful tools for gamers. AMD's Radeon Software suite does look promising in this regard. It's certainly cleaner, but I'm hoping that the Gaming Evolved software gets a rework in the future.

Regardless, supporting non-driver software often feels inconsequential to those seeking pure FPS, power, or overclocking advantages. If you want more OC headroom, nVidia is the way to go; single-digit percentage headroom isn't particularly exciting and hardly feels worth it. If you want lower thermals and power draw, go nVidia. If you want raw FPS out of the box, the 380X looks like the winner at its price-point. It's less impressive in games where the R9 380 is just a few percentage points behind the 380X, but the R9 380 tends to land at $200 to $220, depending on whether you count rebates as a price reduction, so at the upper end of that range, the price gap to the 380X isn't terrible.

There are valid reasons to buy both cards. The 380X does not invalidate the 960, but it does give buyers something to seriously think about. Some games, as we saw in GTA V and Shadow of Mordor, do see an actually-visible impact on framerate that favors the 380X.

AMD did good work on this one.

If you like our content, please consider supporting us through Patreon.

Editorial, Team Lead: Steve “Lelldorianx” Burke.

Additional Validation Testing: Mike “Budekai” Gaglione.

B-roll, Video Editing: Keegan “HornetSting” Gallick.

(Huge thanks to my team for helping me crunch this and the AC Syndicate content).