NVIDIA GeForce GTX 980 Maxwell GPU Benchmark vs. 780 Ti, Others & Architecture Drill-Down


It’s been a months-long journey of GTX 800 rumors, then GTX 900 rumors, broken embargoes, questions, and anticipation. The GTX 750 Ti saw the debut of nVidia’s Maxwell architecture almost seven months ago, making for one of the first times the company has unveiled a low-end product before its architecture flagship. Then things went silent. Time passed, and as mobile 800-series GPUs began shipping, we still hadn’t heard about what would eventually become the GTX 900 series.

Then a box showed up.

“The World’s Most Advanced GPU” was written on the hefty black and green box, a few phone calls were made, and we knew it was time.

Maxwell sees significant architectural changes over its preceding Kepler and Fermi family members and will inevitably lead into Pascal. You’ll see a lot of new buzzwords around the web today now that the embargo has lifted, and we’ll go into detail on what each of these technologies means for the gaming audience. Key advancements include Multi-Frame Sampled Anti-Aliasing (MFAA), Dynamic Super Resolution (DSR), Voxel Global Illumination (VXGI), third-generation delta color compression, and VR Direct.

Today we're reviewing nVidia's GTX 980 and Maxwell architecture, benchmarking for gaming performance / FPS, and looking at the TDP and power features.

A quick note: Although this review was written prior to today, we will be at nVidia’s Santa Monica-based Game24 unveil event on Thursday, 9/18 from 6PM through midnight. Embargo lifted at 7:30PM PST during the event, so there may be additional event-based information published following this review. Subscribe to our Twitter, Facebook, YouTube, and home pages for all of that.

NVIDIA GeForce GTX 980 Benchmark & Specs Explanation Video

NVIDIA GeForce GTX 980 & 970 Video Card Specs

| | GTX 980 | GTX 970 | GTX 780 Ti |
|---|---|---|---|
| GPU | GM204 | GM204 | GK-110 |
| Fab Process | 28nm | 28nm | 28nm |
| Texture Filter Rate (Bilinear) | 144.1GT/s | 109.2GT/s | 210GT/s |
| TjMax | 95C | 95C | 95C |
| Transistor Count | 5.2B | 5.2B | 7.1B |
| ROPs | 64 | 64 | 48 |
| TMUs | 128 | 104 | 240 |
| CUDA Cores | 2048 | 1664 | 2880 |
| BCLK | 1126MHz | 1050MHz | 875MHz |
| Boost CLK | 1216MHz | 1178MHz | 928MHz |
| Single Precision | 5TFLOPs | 4TFLOPs | 5TFLOPs |
| Mem Config | 4GB / 256-bit | 4GB / 256-bit | 3GB / 384-bit |
| Mem Bandwidth | 224GB/s | 224GB/s | 336GB/s |
| Mem Speed | 7Gbps (9Gbps effective - read below) | 7Gbps (9Gbps effective) | 7Gbps |
| Power | 2x6-pin | 2x6-pin | 1x6-pin 1x8-pin |
| TDP | 165W | 145W | 250W |
| Output | DL-DVI HDMI 2.0 3xDisplayPort 1.2 | DL-DVI HDMI 2.0 3xDisplayPort 1.2 | 1xDVI-D 1xDVI-I 1xDisplayPort 1xHDMI |
| MSRP | $550 | $330 | $600 |

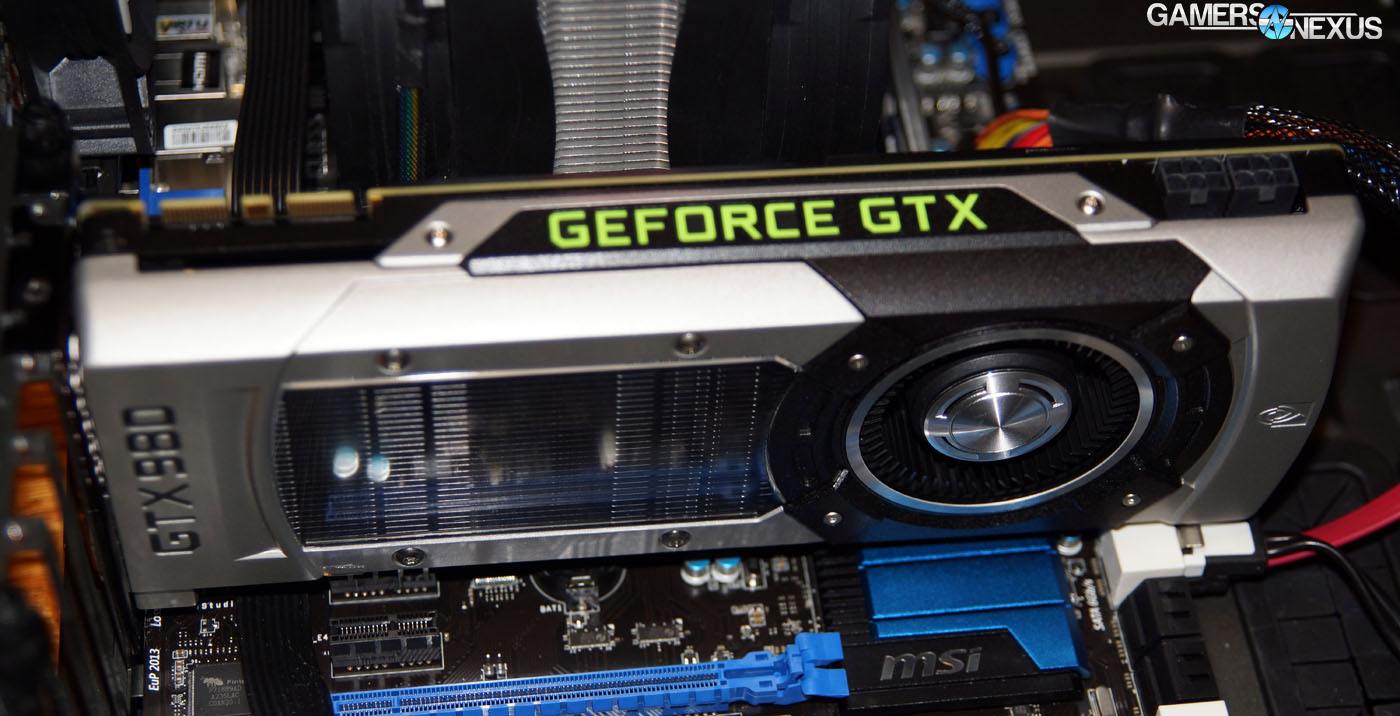

The GTX 980 sports a new GPU – one that nVidia boasts is “easily the most advanced GPU ever made” – in the form of the GM204 semiconductor. During our meetings with various nVidia engineers and product managers, we learned that the GTX 980’s development saw continued focus on mitigating temperatures to allow for greater thermal headroom, aiding in the performance improvement. This is made clear by the 980’s somewhat impressive 165W TDP and the 970’s 145W TDP; for perspective, the previous-generation GTX 780 Ti sits at around 250W TDP, demanding 1 x 6-pin + 1 x 8-pin power connectors from the PSU.

The GTX 970’s TDP in particular is worthy of note: a card with a single 6-pin power connector can draw up to 150W within PCI Express limits (75W from the slot plus 75W from the 6-pin cable), and although nVidia is shipping its reference 970 with two 6-pin connectors for safety in overclocking, there is single-header potential. 5W isn’t enough headroom for overclocking, though if a board partner wanted to build a lower-power GTX 970 for more discreet HTPC / living room applications (with limited or no OC support), the TDP would theoretically allow for a single 6-pin header.
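To make the power arithmetic concrete, here is a minimal sketch. It assumes the commonly cited PCI Express limits (75W from the slot, 75W per 6-pin connector, 150W per 8-pin connector); the dictionary layout and the "hypothetical single 6-pin" entry are ours for illustration, not a shipping product.

```python
# Commonly cited PCI Express power limits (assumed here, in watts).
SLOT_W = 75
CONNECTOR_W = {"6-pin": 75, "8-pin": 150}


def board_power_limit(connectors):
    """Total in-spec power available: slot plus all auxiliary connectors."""
    return SLOT_W + sum(CONNECTOR_W[c] for c in connectors)


# TDP and connector layout taken from the spec table above.
cards = {
    "GTX 980": (165, ["6-pin", "6-pin"]),
    "GTX 970": (145, ["6-pin", "6-pin"]),
    "GTX 780 Ti": (250, ["6-pin", "8-pin"]),
    # Hypothetical board-partner design discussed in the text.
    "GTX 970 (hypothetical single 6-pin)": (145, ["6-pin"]),
}

for name, (tdp, connectors) in cards.items():
    limit = board_power_limit(connectors)
    print(f"{name}: limit {limit}W, TDP {tdp}W, headroom {limit - tdp}W")
```

The hypothetical single-header 970 ends up with 5W of headroom, which is why it would only make sense with limited or no overclocking support.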

Because power draw directly impacts thermals in a GPU (though there are many other factors – like the cooling interface, DRAM, and VRM), we can expect more horsepower at a lower temperature and power bill. The GTX 980 and accompanying GM204 chip deliver about 2x the performance per watt of Kepler. A 165W TDP on a flagship GPU is undoubtedly nVidia's way of kicking AMD where it hurts most.

The GTX 980’s major advancements have been in core performance (each core is approximately 40% more powerful than the CUDA cores used in Kepler devices), TDP, and underlying technology to improve gaming visuals. Note that although the GTX 980 and its accompanying GM204 chip may host fewer cores, they can still output better performance core-for-core in tuned gaming and high-end CUDA environments.
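As a rough sanity check on the per-core claim, the rounded spec-table figures can be divided out. This is only a sketch: it uses peak single-precision throughput from the table, not measured gaming performance, and the `specs` dictionary and helper names are ours.

```python
# Rounded figures from the spec table above.
specs = {
    "GTX 980": {"tflops": 5.0, "cores": 2048, "tdp_w": 165},
    "GTX 780 Ti": {"tflops": 5.0, "cores": 2880, "tdp_w": 250},
}


def gflops_per_core(card):
    """Peak single-precision GFLOPs divided by CUDA core count."""
    return specs[card]["tflops"] * 1000 / specs[card]["cores"]


def gflops_per_watt(card):
    """Peak single-precision GFLOPs divided by TDP."""
    return specs[card]["tflops"] * 1000 / specs[card]["tdp_w"]


core_ratio = gflops_per_core("GTX 980") / gflops_per_core("GTX 780 Ti")
watt_ratio = gflops_per_watt("GTX 980") / gflops_per_watt("GTX 780 Ti")

print(f"Per-core throughput ratio (980 vs. 780 Ti): {core_ratio:.2f}x")
print(f"Spec-sheet GFLOPs per watt ratio: {watt_ratio:.2f}x")
```

On these rounded numbers, the per-core ratio lands close to the roughly 40% figure. The spec-sheet per-watt ratio is a different measure from the performance-per-watt claim, since it ignores real workloads and actual power draw.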

NVIDIA GeForce GTX 980 – Changes to Thermal Design and TDP

We’ve seen SLI creep ahead in marketing focus with each driver update and conference call for about a year now. NVidia wants SLI to grow in adoption among gamers and has been making sweeping driver performance tweaks to enable this, though there have always been concerns about multi-GPU thermals in adjacent configurations.

When in tri-SLI (cards forced adjacent) or dual adjacent SLI using cards equipped with backplates, we’ve seen significantly increased temperatures as a result of the backplate cutting into the airflow channel for the top and middle GPUs. This results in an undesirable thermal differential between the two devices, with the slot-1 device running notably hotter. NVidia has historically avoided the usage of a backplate due to these concerns, but that’s changing with the GTX 980.

The GTX 980 ships stock with a full-coverage backplate to improve PCB strength (reduce flexing) and the overall appearance of the card. To resolve thermal concerns in multi-GPU configurations, the backplate is slotted toward the rear-top of the device (the side opposing the top of the fan); users building with adjacent video cards can use a standard screwdriver to remove the small slotted portion, leaving the majority of the backplate intact while addressing heat concerns for the cornered card. This opens the airflow channel enough to produce what nVidia qualifies as good cooling performance.

We’ll test the efficacy of the backplate design as soon as we’re able to obtain a second GTX 980 for testing.


Maxwell Memory Architecture: Third-Generation Delta Color Compression

This bit sounds pretty fancy.

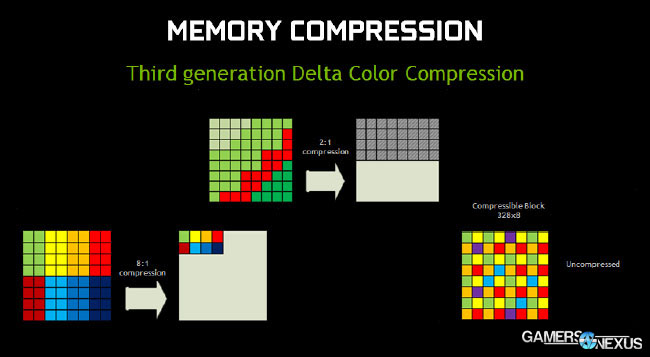

Delta color compression is a specific process used when compressing data transferred to and from the framebuffer (GPU memory). Bandwidth is not “free;” as with all components, it’s critical to performance to ensure data is compressed to make the best use of buses, hopefully in a lossless fashion so that quality is not degraded.

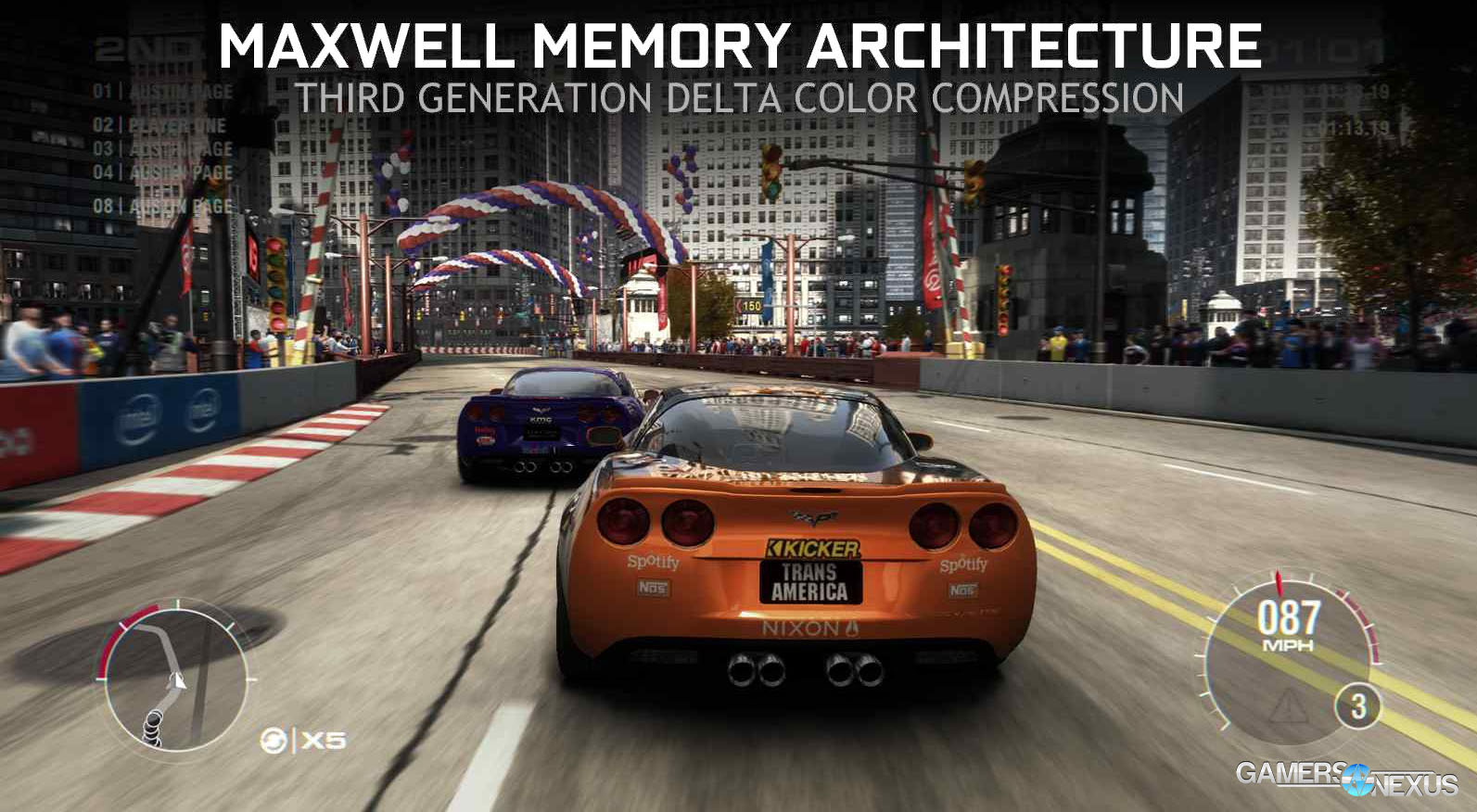

Delta color compression improves memory efficiency through new compression technology. By compressing the data more heavily, less bandwidth is required and more data can be crammed down the pipe, ultimately resulting in a better-looking experience. Let’s take an example from GRID: Autosport, which we’ve benchmarked in the past:

This frame (n) has already been rendered by the GPU. The GPU already knows what’s present in the frame (having just drawn it) and can use this information when analyzing the next frame (n+1) in the gameplay sequence. Instead of drawing the absolute color values all over again in the next frame, we can use color compression to look at the delta (change) between values in each frame. The GPU looks at the next frame in the sequence and, instead of seeing our above image, sees something more like this:

The pink highlight marks the areas where the GPU could store a color delta rather than absolute values. Areas that remain non-highlighted are where that wasn’t possible – either an object was moving too much, visually changed in appearance, or was granular enough to require additional work by the GPU.
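The general idea of storing a delta instead of absolute values can be shown with a toy example. This is a sketch of delta encoding in general, not nVidia's hardware algorithm; the `reference` values stand in for whatever baseline the GPU compares against, and all names and numbers are hypothetical.

```python
def delta_encode(reference, current):
    """Store only the difference between each value and its reference."""
    return [c - r for r, c in zip(reference, current)]


def delta_decode(reference, deltas):
    """Losslessly rebuild the original values from reference + delta."""
    return [r + d for r, d in zip(reference, deltas)]


def bits_needed(values):
    """Signed bits sufficient for the largest magnitude in the list."""
    return max(abs(v) for v in values).bit_length() + 1


# Hypothetical 8-bit color channel values for four pixels.
reference = [200, 201, 203, 203]
current = [200, 202, 203, 205]

deltas = delta_encode(reference, current)
print("Deltas:", deltas)
print("Bits per value as deltas:", bits_needed(deltas), "vs. 8 bits absolute")
print("Round trip is lossless:", delta_decode(reference, deltas) == current)
```

When the values barely change, the deltas are small and fit in far fewer bits than the absolute colors, which is where the bandwidth savings come from. Regions with large changes gain little and are better left uncompressed.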

To recap: Analyzing the color change between successive frames minimizes demand on the GPU by avoiding exact (absolute) color value draws, instead opting for value change from base. NVidia’s whitepaper indicates that this process reduces bandwidth saturation by 17-18% on average, meaning we’ve got more memory bandwidth freed up for other tasks. This also contributes to the effective memory speed: Although specified at 7Gbps GDDR5 memory speed, the GTX 980, 970, and other similarly-equipped Maxwell devices will perform equivalently to 9Gbps effective throughput, from what we’re told by nVidia.

In shorter form, you’d need to run DRAM at 9Gbps on Kepler in order to achieve the same effective bandwidth as 7Gbps on Maxwell.
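The bandwidth figures in the spec table follow from bus width and per-pin data rate. A minimal sketch, with a helper name of our own choosing:

```python
def bandwidth_gb_s(bus_width_bits, data_rate_gbps):
    """Peak memory bandwidth: bytes per transfer times per-pin data rate."""
    return bus_width_bits / 8 * data_rate_gbps


gtx_980_raw = bandwidth_gb_s(256, 7)        # specified 7Gbps GDDR5
gtx_780_ti_raw = bandwidth_gb_s(384, 7)
gtx_980_effective = bandwidth_gb_s(256, 9)  # nVidia's "9Gbps effective" claim

print(f"GTX 980 raw: {gtx_980_raw:.0f}GB/s")
print(f"GTX 780 Ti raw: {gtx_780_ti_raw:.0f}GB/s")
print(f"GTX 980 at 9Gbps effective: {gtx_980_effective:.0f}GB/s")
```

Even at the claimed effective rate, the 256-bit GTX 980 works out below the 780 Ti's raw figure on its wider 384-bit bus, so the compression narrows the gap rather than closing it.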

Maxwell Technology: Multi-Frame Sampled Anti-Aliasing (What Is MFAA?)

MFAA is a new approach to the sampling patterns applied by the GPU when using anti-aliasing. The short of it is that higher anti-aliasing sample values are achievable with a significantly diminished impact to performance when compared to previous AA methodologies. For purposes of this review, nVidia was not able to fully launch MFAA in the GTX 980 driver package and supported games, so we’ll focus on overviewing the technology and showing a few prepared examples.

Performance tests will be conducted as soon as we receive the green light from nVidia regarding stability of MFAA. We expect to have something more detailed online upon returning from Game24 next week.

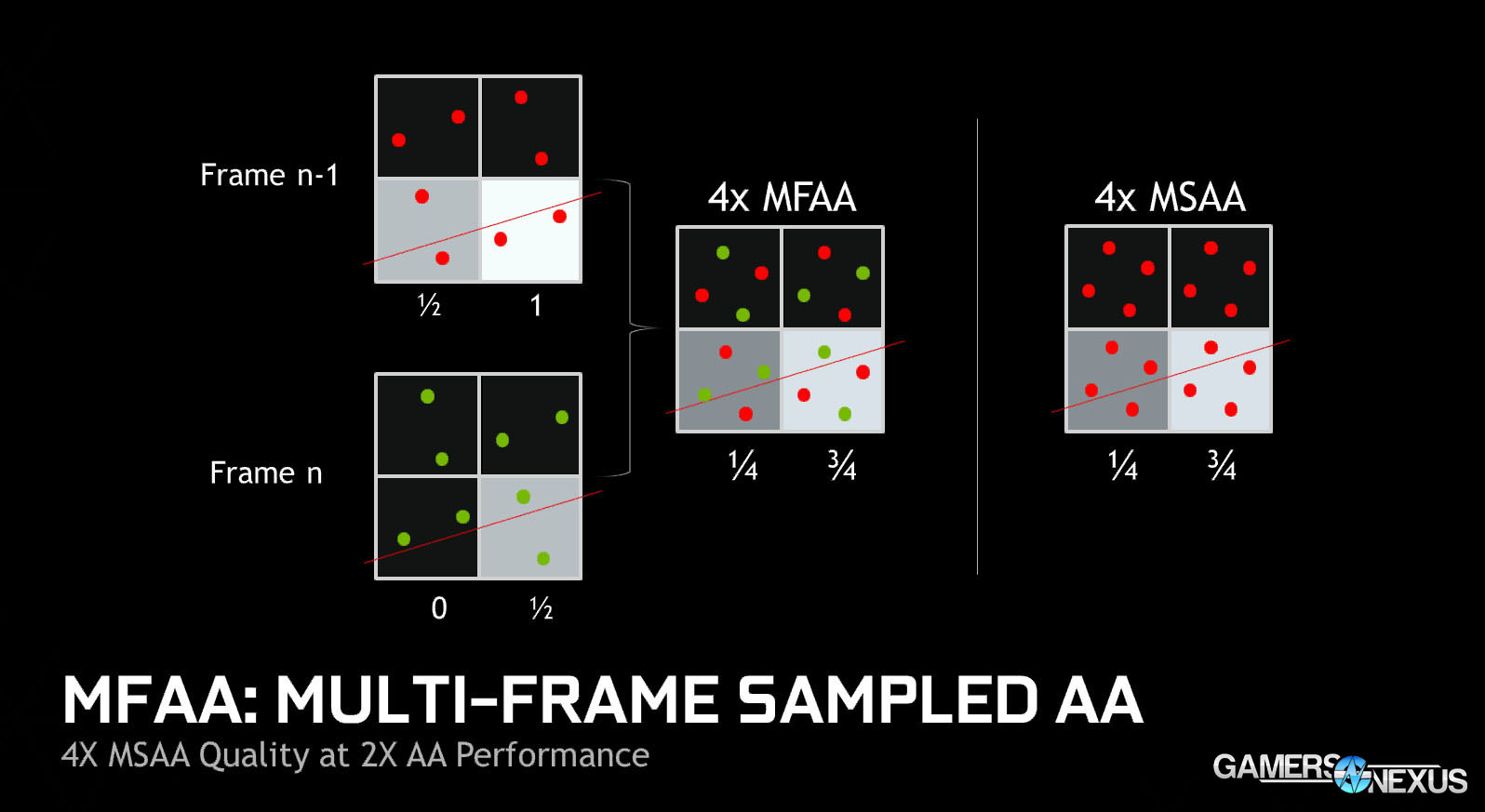

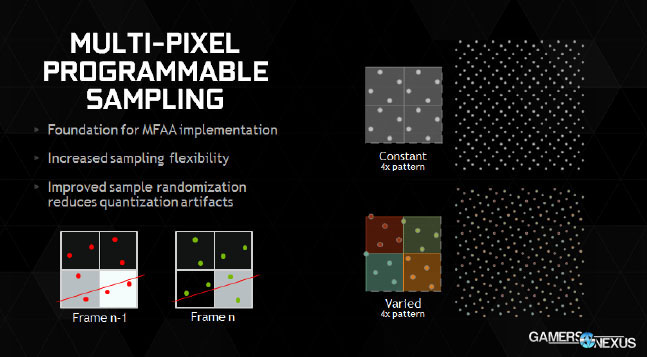

Multi-Frame Sampled Anti-Aliasing varies anti-aliasing sample patterns to reduce load by analyzing the image across multiple frames, rather than collecting all samples on a single frame (which is what MSAA does). This is more applicable to the likes of Battlefield 4, Crysis 3, and similarly high-fidelity titles.

Let’s break this down using the industry-wide MSAA that’s found in most games. With 4xMSAA, you’re taking what can be thought of as four color samples per pixel; 8xMSAA would be eight samples per pixel; 16xMSAA would be sixteen samples per pixel, and so on. It becomes apparent why anti-aliasing is so abusive to the GPU when we consider that each frame has millions of pixels (1920 x 1080 = 2,073,600 pixels). Higher sample values improve accuracy of color when drawing the pixel because we can point at more locations on the object; if there’s a piece of geometry that has some color change going on, sampling the geometry numerous times in the applicable location will ensure a smoother transition between colors when the final frame is drawn. Running with no AA will give us a much harder edge to things, whereas 4x-16x AA helps create a more visually-appealing and smoother image.
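The scale of the sampling work is easy to put into numbers. This sketch follows the simplified "N samples per pixel" model used above; the function name is ours.

```python
def samples_per_frame(width, height, samples_per_pixel):
    """Total sample count for one frame under the simplified model."""
    return width * height * samples_per_pixel


for level in (2, 4, 8, 16):
    total = samples_per_frame(1920, 1080, level)
    print(f"{level}x at 1080p: {total:,} samples per frame")
```

At 4x, a single 1080p frame already involves more than eight million samples, and that work repeats every frame.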

Let’s look at an image:

The above is what we’ll use to explain MFAA. On the right side, we have the relatively dominant MSAA that you’ve all likely seen within game settings at some point. Each square within the larger block is a pixel. The dissecting red line can be thought of as a piece of geometry that is obscuring our view of an object (or the borderline of a piece of geometry that the GPU is drawing).

With 4xMSAA, the GPU is taking four samples per pixel and then determining a color value for that pixel. For the top two pixels, all four samples are collected within a black area; those pixels are drawn as black by the GPU. The bottom left pixel has one sample outside of the geometry being analyzed (think of this as “white” for simplicity) with three samples on the “black” part of the pixel. That pixel – because 3/4 samples are on black and 1/4 is on white – is drawn as an accurate dark gray. The grayscale output in this simplified example could be even more granular with a higher sample value. The bottom right pixel is the opposite story: 3/4 samples land on white (beneath our slicing geometry line) and 1/4 lands in black. This pixel is drawn as mostly white.
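The four-pixel example can be reproduced with a simple average. This is a sketch of the simplified model described above, assuming 8-bit grayscale where 0 is black and 255 is white; a real resolve step may weight samples differently.

```python
BLACK, WHITE = 0, 255


def resolve_pixel(samples):
    """Average the per-sample colors into one final pixel value."""
    return sum(samples) / len(samples)


top_pixel = resolve_pixel([BLACK, BLACK, BLACK, BLACK])
bottom_left = resolve_pixel([BLACK, BLACK, BLACK, WHITE])
bottom_right = resolve_pixel([WHITE, WHITE, WHITE, BLACK])

print("Top pixels:", top_pixel)        # fully black
print("Bottom left:", bottom_left)     # dark gray
print("Bottom right:", bottom_right)   # mostly white
```

With only four samples there are five possible shades per pixel; higher sample counts add intermediate steps, which is what makes the edge look smoother.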

The entire process of collecting four samples per pixel is GPU-intensive, which is why AA is one of the first things to drop in order to gain performance. By sampling millions of pixels per frame, we’re bogging down the GPU with requests that could hamper framerate. The best way to mitigate this impact is to decrease the sampling value – to 2x, for example.

All of this is done on a frame-by-frame basis. Here’s another image:

Focus on the left side this time. Notice that now we have two frames being sampled – frame n and frame n-1. MFAA samples frame n two times per pixel and does the same for frame n-1, but in different locations; you’ll notice that between the two frames, we’re sampling a total of four times and doing so in the same sample locations as 4xMSAA. Maxwell then blends frame n and n-1 together, producing the 4xMFAA set of four pixels. The 4xMFAA and 4xMSAA blocks are essentially the same image, so image quality doesn’t inherently improve just by using MFAA. It will improve indirectly, though: 4xMFAA would allegedly have performance effectively equivalent to 2xMSAA, because the sampling is spaced out over two frames to reduce load on the GPU. This means that users would be able to run higher MFAA values than MSAA values, indirectly improving graphics quality.
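The equivalence between the two approaches can be sketched for a single pixel in a static scene. The sample positions, the toy geometry, and the equal-weight blend are all assumptions for illustration; nVidia's actual patterns and filter are not described here.

```python
# Hypothetical 4x sample positions inside a unit pixel (x, y).
SAMPLE_POSITIONS = [(0.375, 0.125), (0.875, 0.375), (0.125, 0.625), (0.625, 0.875)]


def covered(x, y):
    """Toy geometry: the region of the pixel with y > 0.2 is covered."""
    return 1.0 if y > 0.2 else 0.0


def coverage(positions):
    """Fraction of the given sample positions that hit the geometry."""
    return sum(covered(x, y) for x, y in positions) / len(positions)


# 4xMSAA: all four samples taken in the same frame.
msaa_4x = coverage(SAMPLE_POSITIONS)

# 4xMFAA: two samples per frame, alternating positions, then blended.
frame_n = coverage([SAMPLE_POSITIONS[0], SAMPLE_POSITIONS[3]])
frame_prev = coverage([SAMPLE_POSITIONS[1], SAMPLE_POSITIONS[2]])
mfaa_4x = (frame_n + frame_prev) / 2

print("4xMSAA coverage:", msaa_4x)
print("Frame n:", frame_n, "| Frame n-1:", frame_prev)
print("4xMFAA blended coverage:", mfaa_4x)
```

Each individual frame only pays for two samples and produces a rougher estimate, but the blend matches the four-sample result as long as the scene holds still. Motion between frames is where a real implementation has to do extra work.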

Maxwell Technology: Dynamic Super Resolution (DSR) Scaling

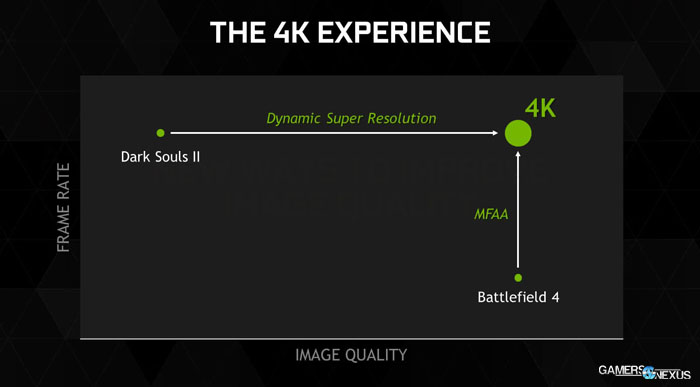

NVidia opened its DSR presentation with a Dark Souls II screenshot, stating simply: “Making it go faster isn’t really going to do you any good,” indicating the already high FPS on the moderate-looking RPG. The game is already ‘capped’ for performance. More frames won’t add much at this point, so nVidia is instead seeking to improve visual quality. NVidia went on to highlight that most of its gamers (~95%!) are still using 1080p or 1440p displays.

Maxwell is capable of what nVidia calls “Dynamic Super Resolution,” which is a specialized and better-performing alternative to super-sampling. DSR renders the game at 4K resolution and then scales the output down to the native display resolution. The result is greater visual clarity and more defined edges / shadows. Here’s a pair of screenshots I took using Trine – the left is DSR (4K scaled), the right is a native 1080 output:

This all sounds an awful lot like super-sampling, though.

DSR renders out the frame at 4K resolution and filters it down onto the 1080p native display. Dynamic Super Resolution increases the sample rate – effectively a 4x sample rate increase – and then writes the 4K frame to the framebuffer. After the frame is written to memory, the GM204 uses a 13-tap Gaussian filter that’s built specifically for this task. The result is a higher image quality that eats performance fairly similarly to what a true 4K display would; there’s not much performance lost during the filtration process – the most notable performance hit is from the increased resolution.
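The render-high-then-filter-down idea can be sketched in a few lines. The layout of nVidia's 13-tap filter isn't described here, so this uses a generic small separable Gaussian kernel as a stand-in; the kernel size, sigma, and test tile are all assumptions.

```python
import math


def gaussian_kernel(radius, sigma):
    """Normalized 1D Gaussian weights covering [-radius, radius]."""
    weights = [math.exp(-(i * i) / (2.0 * sigma * sigma))
               for i in range(-radius, radius + 1)]
    total = sum(weights)
    return [w / total for w in weights]


def blur_rows(image, kernel):
    """Apply the kernel along each row, clamping at the image edges."""
    radius = len(kernel) // 2
    blurred = []
    for row in image:
        last = len(row) - 1
        blurred.append([
            sum(w * row[min(max(x + k - radius, 0), last)]
                for k, w in enumerate(kernel))
            for x in range(len(row))
        ])
    return blurred


def transpose(image):
    return [list(column) for column in zip(*image)]


def downscale_2x(image, kernel):
    """Blur horizontally and vertically, then keep every second pixel."""
    horizontal = blur_rows(image, kernel)
    both = transpose(blur_rows(transpose(horizontal), kernel))
    return [row[::2] for row in both[::2]]


# 4K render target vs. 1080p native output: four rendered pixels per output pixel.
sample_ratio = (3840 * 2160) / (1920 * 1080)
print("Rendered pixels per displayed pixel:", sample_ratio)

kernel = gaussian_kernel(radius=2, sigma=1.0)
tile = [[0, 0, 255, 255, 0, 0, 255, 255] for _ in range(4)]  # tiny grayscale "frame"
small = downscale_2x(tile, kernel)
print("Output size:", len(small[0]), "x", len(small))
```

The filter pass touches each pixel a fixed number of times, so its cost is small next to rendering four times as many pixels in the first place.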

Super-sampling also lacks an automated process. Someone has to hack together a fake resolution to force the GPU into rendering it, and once the display interface has been hacked to produce the higher resolution, a filter scales the image 1:1 to a 1080p display. This isn’t a lossless process. Much of the gain is potentially lost during the downscale and hacked output. NVidia tells us that their process equates to less than a 1% performance hit after the obvious hit from 4K rendering.

In our testing of DSR, it appears to have very real, noticeable visual enhancements to gaming output. We tested the performance hit on the 980 below.

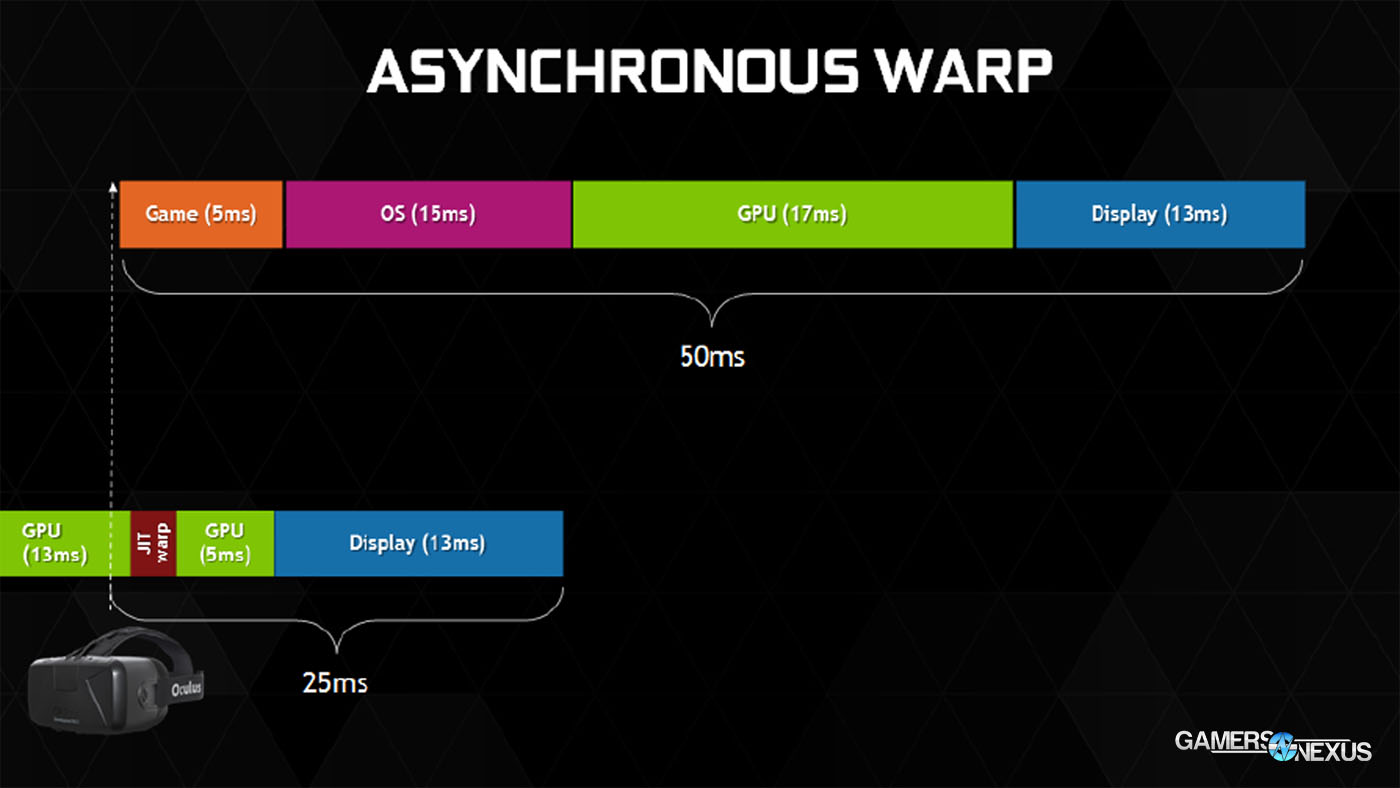

Maxwell Technology: VR Direct

I won’t dive too deeply into this one; we’ll save that for future articles that discuss VR more specifically. The short of VR Direct is that nVidia recognizes virtual reality as a significant force in the market right now. As such, the company has worked to tackle pervasive latency issues on the GPU-side when dealing with motion sensing and real-time movement through a VR space.

The trouble with latency in virtual reality – especially VR with motion sensing – is that it can produce an internally jarring side effect in the brain, eyes, and inner ear when the screen (output) does not match the user movement (input) precisely. This is where virtual reality sickness arises.

Maxwell architecture is now deploying “asynchronous warp” to aid in latency reduction. You can think of the rendered environment as having a sort of slight “buffer” around it; when the user moves his or her head, Maxwell adjusts the frame in-flight without re-rendering the entire frame. This makes it a sort-of pseudo-predictive technology that reduces latency by about 50%, from what nVidia told us (we cannot test VR without the right hardware).
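The "buffer around the frame" idea can be illustrated with a toy calculation. This is only a conceptual sketch of shifting a visible window inside an oversized render; the function, the pixels-per-degree figure, and the resolutions are all hypothetical and do not describe nVidia's implementation.

```python
def warp_window(rendered_width, display_width, yaw_delta_deg, pixels_per_degree):
    """Pick which horizontal slice of an oversized frame to display.

    The frame is rendered wider than the display; a late head movement
    shifts the visible window instead of triggering a full re-render.
    """
    margin = (rendered_width - display_width) // 2
    shift = round(yaw_delta_deg * pixels_per_degree)
    shift = max(-margin, min(margin, shift))  # cannot shift past the buffer
    left = margin + shift
    return left, left + display_width


# Hypothetical numbers: 100px of margin on each side, 10px per degree of yaw.
print(warp_window(2120, 1920, 0, 10))   # no head movement
print(warp_window(2120, 1920, 2, 10))   # small turn: window slides over
print(warp_window(2120, 1920, 50, 10))  # large turn: clamped at the buffer edge
```

The clamp is the important limit: once the head moves further than the buffer allows, only a freshly rendered frame can supply the missing pixels.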


Test Methodology

Our standard test methodology applies here. For thermal benchmarking, we deployed FurMark to place the GPU under 100% load while logging thermals with HW Monitor+. FurMark executed its included 1080p burn-in test for this synthetic thermal benchmarking. In FPS and game benchmark performance testing, we used the following titles:

- Metro: Last Light Benchmark.

- GRID: Autosport Benchmark.

- Battlefield 4 Gameplay.

- Titanfall Gameplay.

- Watch_Dogs Gameplay.

FRAPS was used to log frametime and framerate performance during a 120 second session. FRAFS was used for analysis. Tests were conducted three times for parity.
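For readers who want to reproduce this kind of analysis from a frametime log, here is a minimal sketch. The definition of "1% low" varies between outlets; this version averages the slowest 1% of frames, which is an assumption and not necessarily how FRAFS computes it. The sample data is made up.

```python
from statistics import mean


def average_fps(frametimes_ms):
    """Frames rendered divided by total elapsed time."""
    return 1000.0 * len(frametimes_ms) / sum(frametimes_ms)


def low_fps(frametimes_ms, fraction):
    """Average FPS across the slowest `fraction` of frames (at least one)."""
    worst_first = sorted(frametimes_ms, reverse=True)
    count = max(1, int(len(worst_first) * fraction))
    slowest = worst_first[:count]
    return 1000.0 * len(slowest) / sum(slowest)


# Made-up log: 99 frames at 10ms plus one 50ms stutter.
frametimes = [10.0] * 99 + [50.0]

print(f"Average FPS: {average_fps(frametimes):.1f}")
print(f"1% low FPS: {low_fps(frametimes, 0.01):.1f}")

# Three passes per test, averaged (hypothetical per-pass results).
passes = [96.2, 95.8, 96.5]
print(f"Mean of three passes: {mean(passes):.1f}")
```

A single stutter barely moves the average but dominates the 1% low, which is why both numbers are worth charting.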

NVidia 344.07 beta drivers were used for all tests conducted on nVidia's GPUs. AMD 14.7 drivers were used for the AMD cards.

| GN Test Bench 2013 | Name | Courtesy Of | Cost |
|---|---|---|---|
| Video Card | (This is what we're testing). | NVIDIA. | $550 |
| CPU | Intel i5-3570k CPU; Intel i7-4770K CPU (alternative bench). | GamersNexus; CyberPower | ~$220 |
| Memory | 16GB Kingston HyperX Genesis 10th Anniv. @ 2400MHz | Kingston Tech. | ~$117 |
| Motherboard | MSI Z77A-GD65 OC Board | GamersNexus | ~$160 |
| Power Supply | NZXT HALE90 V2 | NZXT | Pending |
| SSD | Kingston 240GB HyperX 3K SSD | Kingston Tech. | ~$205 |
| Optical Drive | ASUS Optical Drive | GamersNexus | ~$20 |
| Case | Phantom 820 | NZXT | ~$130 |
| CPU Cooler | Thermaltake Frio Advanced | Thermaltake | ~$65 |

The system was kept in a constant thermal environment (21C - 22C at all times) while under test. 4x4GB memory modules were kept overclocked at 2133MHz. All case fans were set to 100% speed and automated fan control settings were disabled for purposes of test consistency and thermal stability.

A 120Hz display was connected for purposes of ensuring frame throttles were a non-issue. The native resolution of the display is 1920x1080. V-Sync was completely disabled for this test.

The video cards tested include:

- AMD Radeon R9 290X 4GB (provided by CyberPower).

- AMD Radeon R9 270X 2GB (we're using reference; provided by AMD).

- AMD Radeon HD 7850 1GB (bought by GamersNexus).

- AMD Radeon R7 250X 1GB (equivalent to HD 7770; provided by AMD).

- NVidia GTX 780 Ti 3GB (provided by nVidia).

- NVidia GTX 780 3GB x 2 (provided by ZOTAC).

- NVidia GTX 770 2GB (we're using reference; provided by nVidia).

- NVidia GTX 750 Ti Superclocked 2GB (provided by nVidia).

Note that although we’d love to do native 4K testing, we don’t presently have test equipment available to do so. We did test DSR at 4K resolution with the 980 (below), though, and this should theoretically only be about 1% slower in performance than a native 4K display.

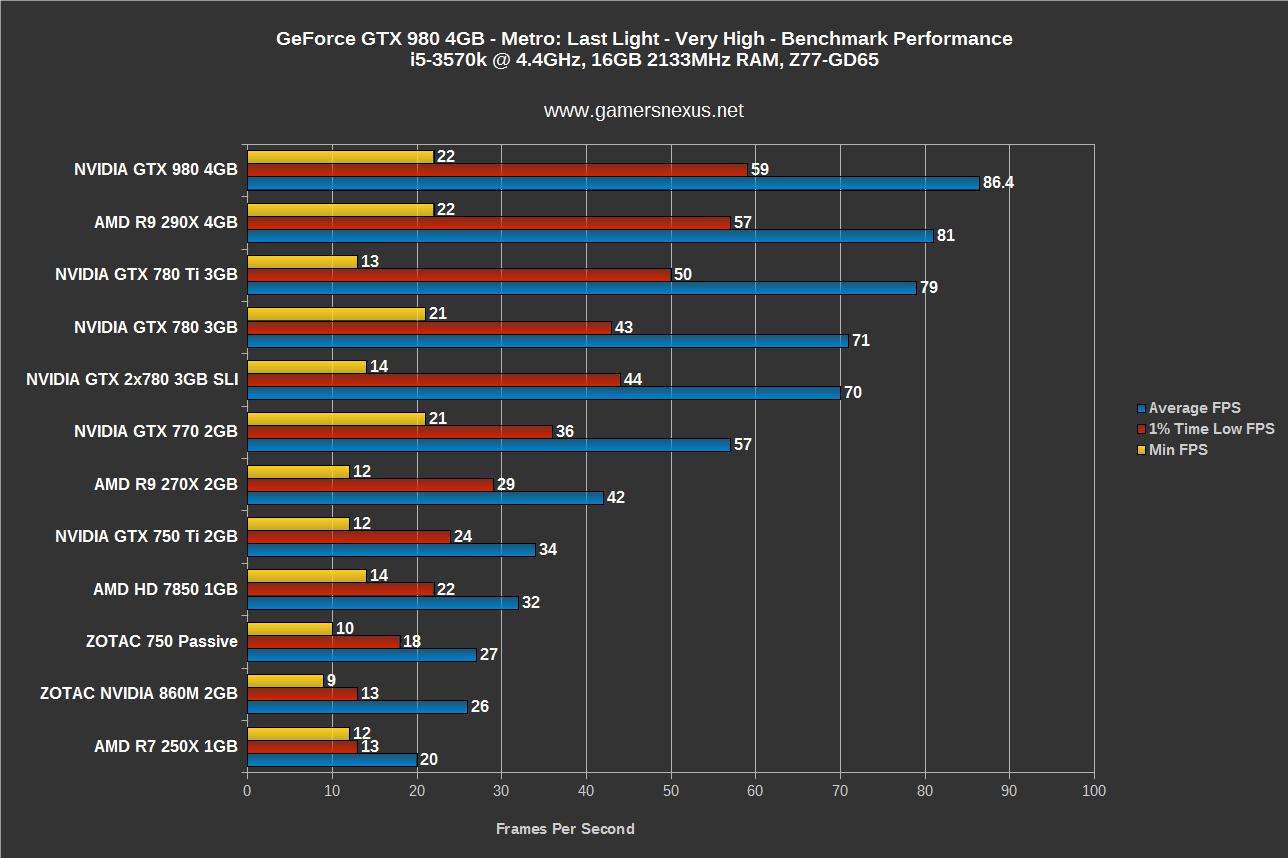

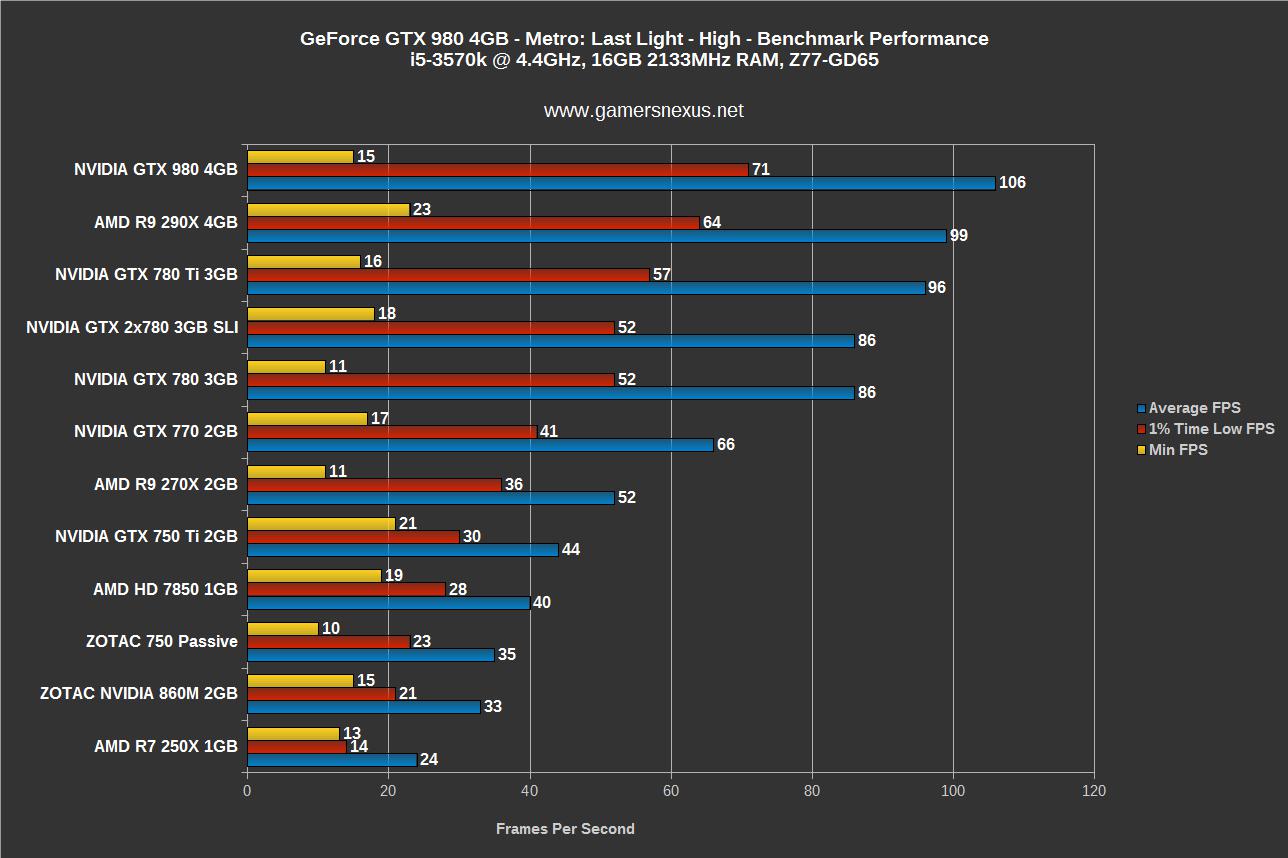

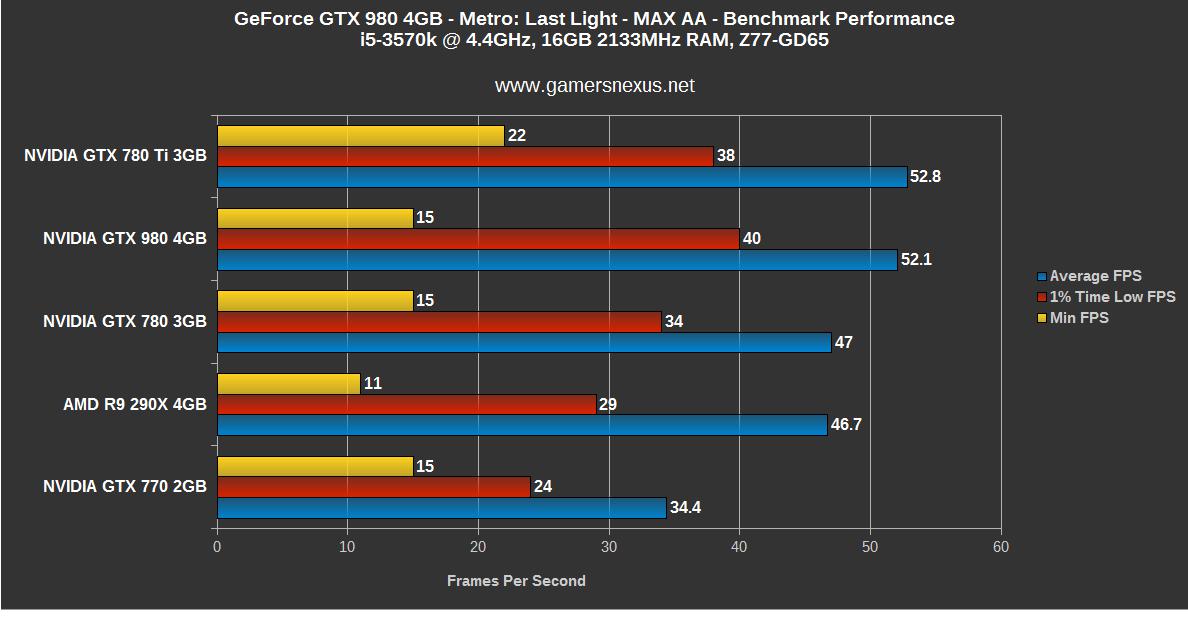

Metro: Last Light FPS Benchmark – GTX 980 vs. 780 Ti, 290X, 770, & More

The first chart shows M: LL on very high settings with high (max) tessellation. AA and AO are not configured as high as in the below chart. The second chart shows lower (high) settings for comparison purposes.

The next chart (right) shows only high-powered GPUs running M: LL at maximum settings with max AA / AO tech enabled. Left shows high settings.

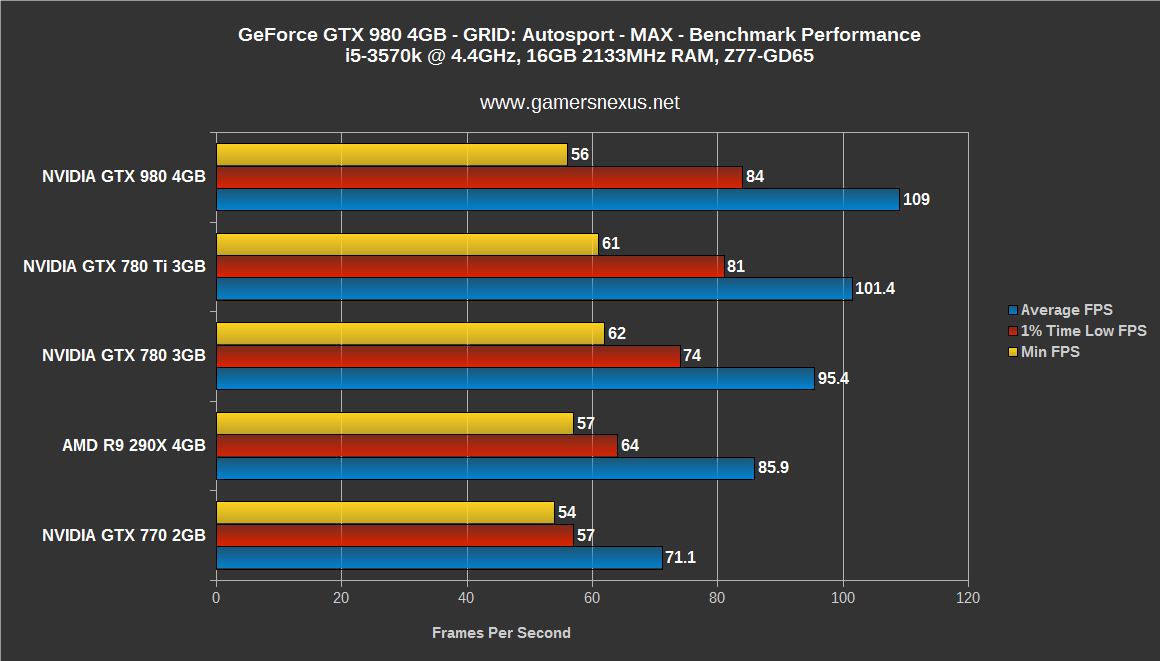

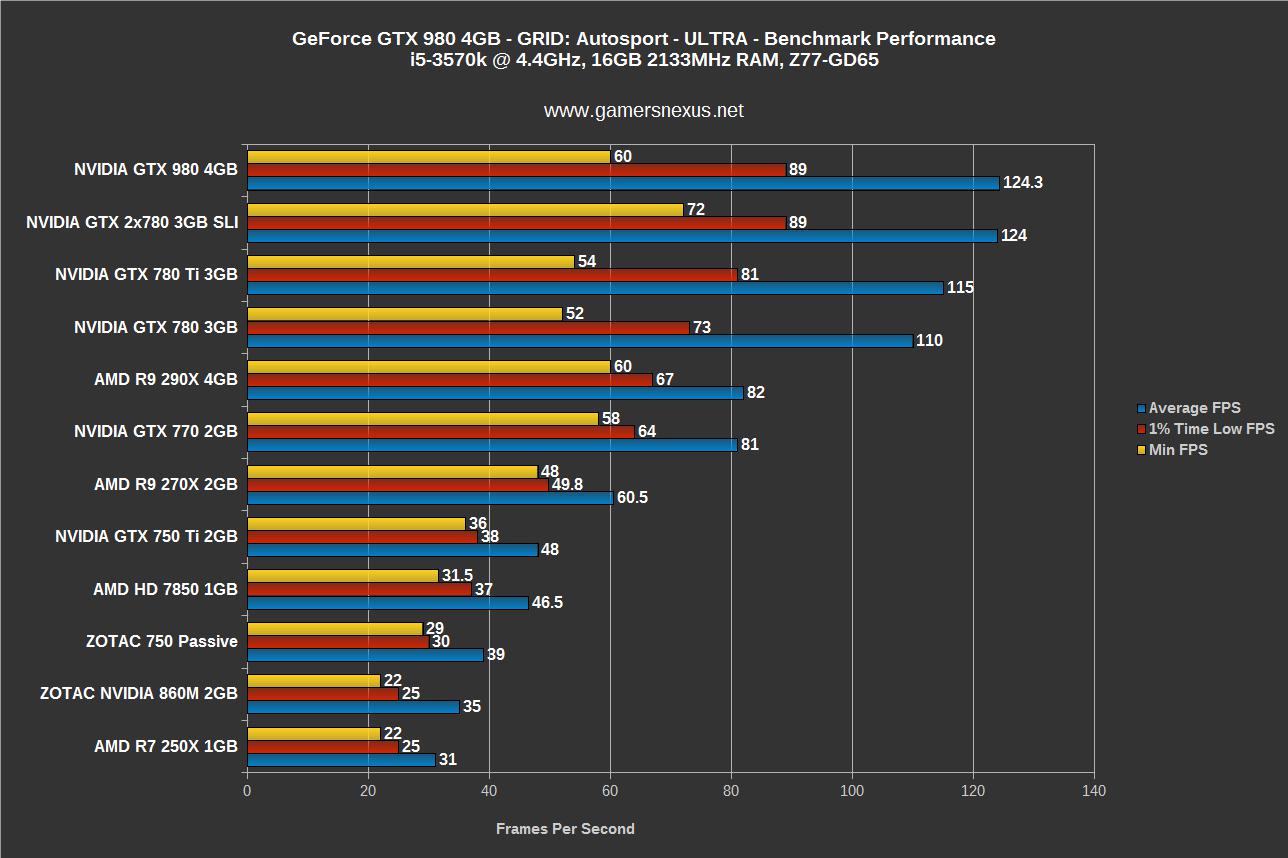

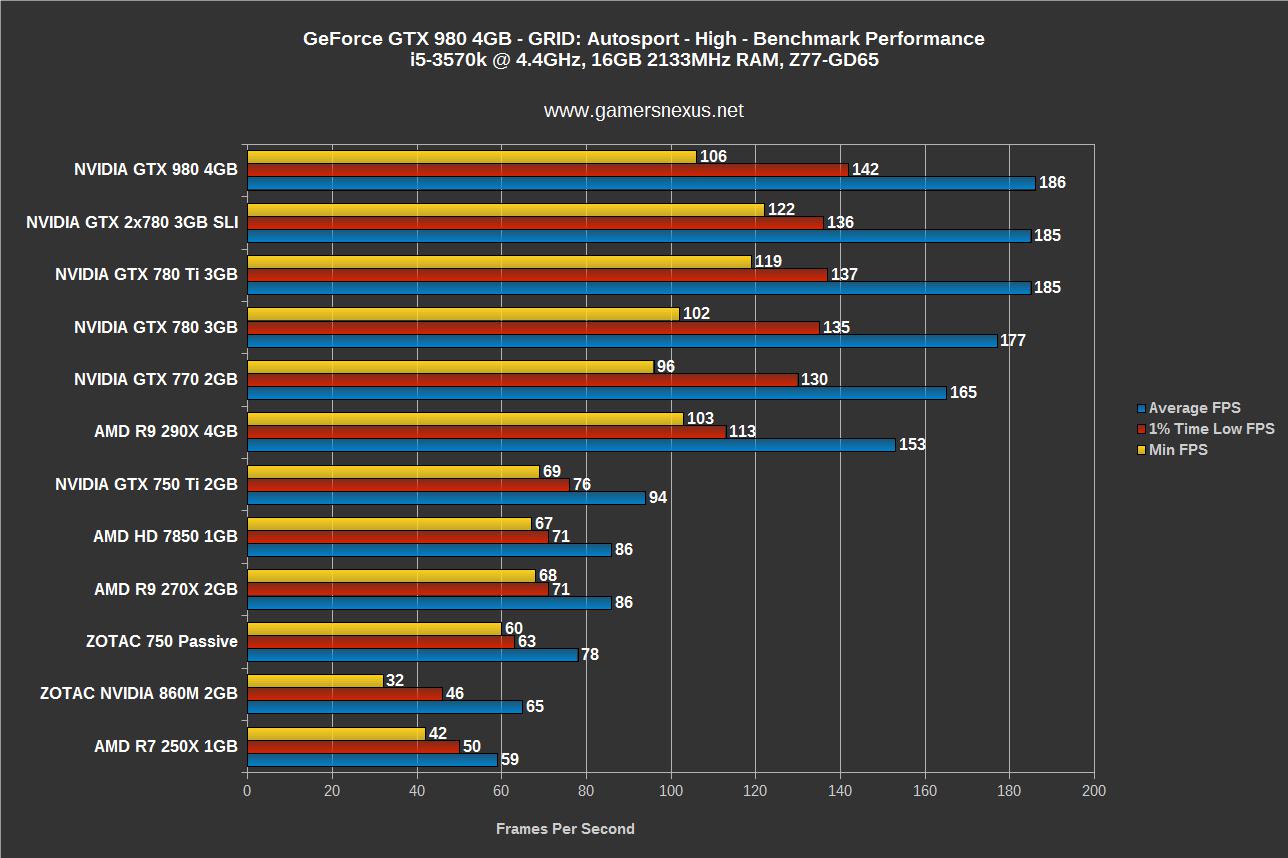

GRID: Autosport FPS Benchmark – GTX 980 vs. 780 Ti, SLI 780s, 290X, 770, & More

The first chart shows maximum settings on the high-end GPUs, including max AA (16x). The next showcases Ultra performance, with the final chart showing only High settings.

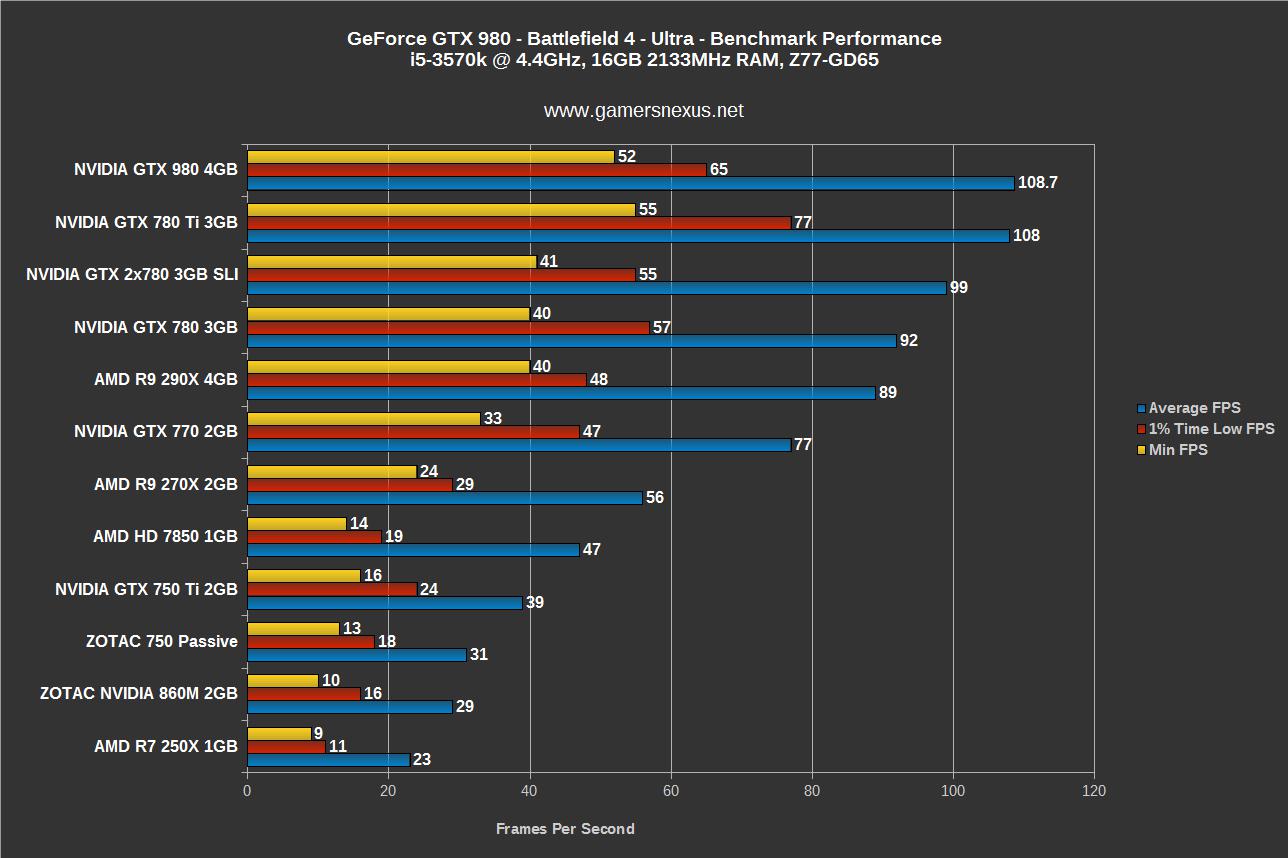

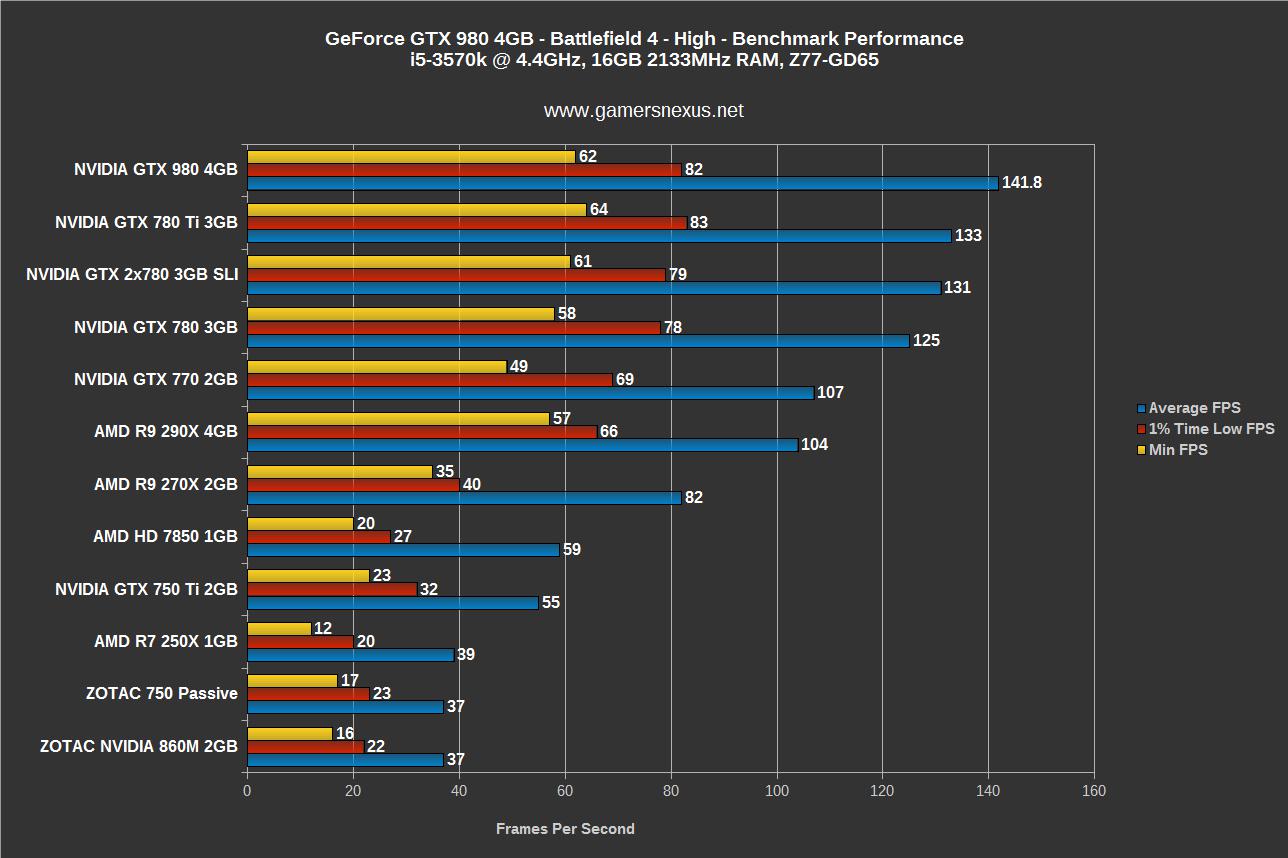

Battlefield 4 FPS Benchmark – GTX 980 vs. 780 Ti, SLI 780s, 290X, 770, & More

With Ultra settings, the 780 Ti and 980 are within margin of error of each other – effectively identical in average FPS. High sees the 980 pulling away pretty hard, which potentially indicates that most of the 980’s throttling is happening with AA enabled; maybe this will be resolved when MFAA goes live.

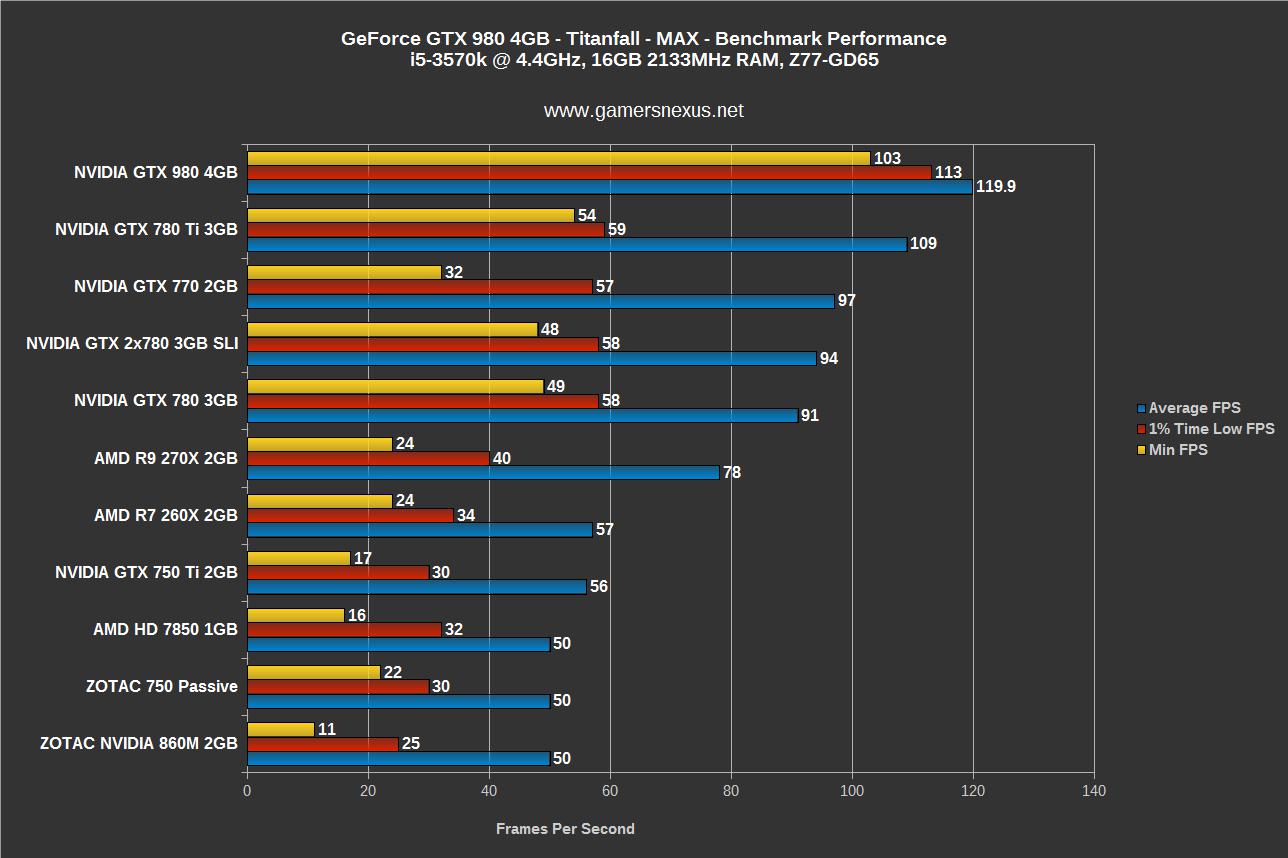

Titanfall FPS Benchmark – GTX 980 vs. 780 Ti, SLI 780s, 770, & More

We benchmarked Titanfall at launch. The game is capped by the monitor’s refresh rate, so 120FPS is the max possible throughput. The 980 easily hits 120FPS and manages to rocket ahead in 1% low and 0.1% (minimum) FPS marks.

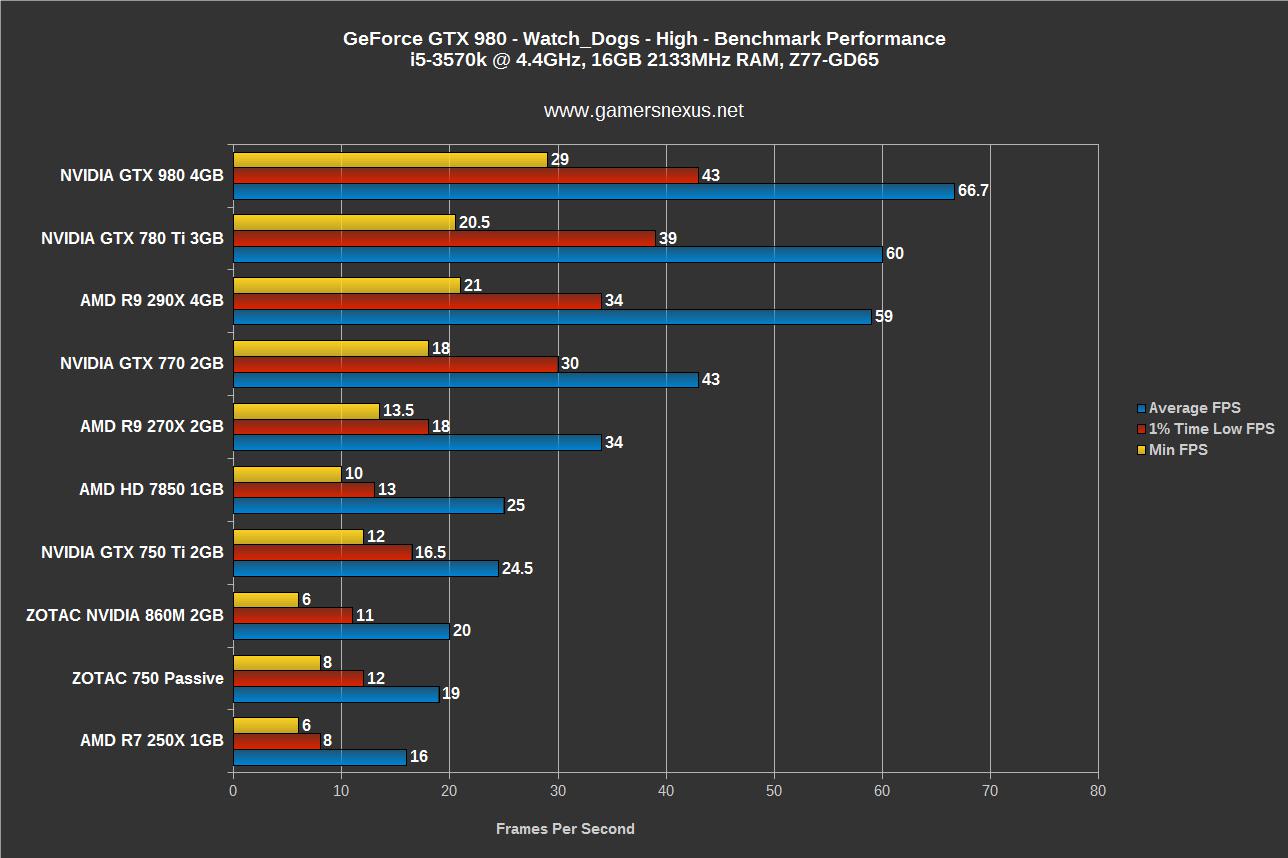

Watch_Dogs FPS Benchmark – GTX 980 vs. 780 Ti, SLI 780s, 290X, 770, & More

We called Watch_Dogs one of the “worst-optimized games we’ve ever benchmarked.” That shows through here. Regardless, the 980 is one of the proud few cards that can retain a playable FPS on “High” settings with the game.

4K Gaming – Dynamic Super Resolution (DSR) – GTX 980 FPS Benchmark

This chart strictly shows the performance of the GTX 980 with DSR (at 4K) enabled. It’s clear that games need to be optimized well for DSR to be a viable setting, especially when looking at how heavily Trine beats the 980 up (the 980 runs Trine at about 100FPS average without DSR). DSR is meant more for games like Divinity, Dark Souls II, and other lower-end games; GRID does surprisingly well and would be playable with tweaks.

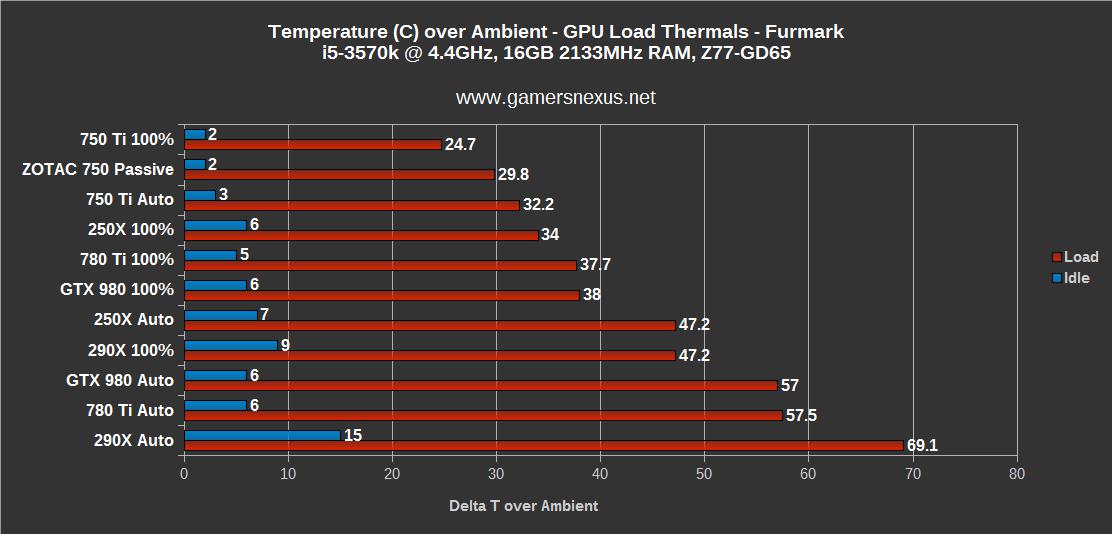

GTX 980 Thermal Performance & Temperatures

The thermals aren’t incredible given the impressively low TDP of the GTX 980, but that’s because it’s still outputting a lot of horsepower in terms of performance. NVidia’s GTX 980 runs just slightly cooler than the 780 Ti. We test thermals with the fans at 100% for absolute performance, then move to “auto” for real-world performance.

Conclusion: NVidia’s GTX 980 is the Best Gaming GPU On the Market.

Hands-down.

We’ve still got a lot of architectural explaining and digging to do on Maxwell (be sure to follow us on Twitter / Facebook / YouTube), but our conclusive game FPS testing definitively proves that nVidia’s GTX 980 is the best-performing sub-Titan video card out there. The MSRP of the GTX 980 hovers at $550 – damningly low if you’re AMD – and the GTX 970 brings up the mid-range at $330, with the GTX 760 shifting in price to $220 (competing against the R9 280).

Owners of the 780 Ti and 780 need not upgrade this instant – the GTX 980 looks more like a direct upgrade for GTX 680 users than the most recent Kepler users. That stated, nVidia plans to discontinue the GTX 780 Ti, 780, and 770 upon launch of the GTX 980.

The GTX 980 makes financial sense for high-end gaming configurations with an interest in proper single-GPU 4K gaming (you’d still have to reduce some settings, but it’s close) and 1080p users who’d like to run DSR. The 980 and 970 have impressive architecture behind them and, once everything’s fully rolled-out, we’re hopeful that the tech sees rapid adoption in driver packages and games.

Note that the GTX 780 Ti may have advantages in compute or render applications; we have not yet tested either of these use cases due to time and scope. The focus of this article was entirely the GTX 980 as it pertains to gaming.

The video card will be available for purchase at 12:00am PST on 9/19.

- Steve “Lelldorianx” Burke.