AMD+NVIDIA Multi-GPU DirectX12 Benchmark: R9 390X + GTX 970 in 'SLIFire'


Ashes of the Singularity has become the poster child for early DirectX 12 benchmarking, if only because it was first to market with ground-up DirectX 12 support alongside DirectX 11. Just minutes ago, the game officially updated its early build to include DirectX 12 Benchmark Version 2, making critical changes that include cross-brand multi-GPU support. The update also improves the reliability and reproducibility of results, primarily by giving all units 'god mode' so that inconsistent unit deaths don't alter the workload between runs.

For this benchmark, we tested explicit multi-GPU functionality by using AMD and nVidia cards at the same time, something we're calling “SLIFire” for ease. The benchmark pairs an MSI R9 390X Gaming 8G with an MSI GTX 970 Gaming 4G and compares that pair against 2x GTX 970s, 1x GTX 970, and 1x R9 390X as baselines.

Ashes of the Singularity – DirectX 12 Benchmark with R9 390X & GTX 970

What is “Explicit Multi-GPU” in DirectX 12?

Ashes of the Singularity runs on Oxide Games' 4th-generation “Nitrous” engine, which takes a job-based approach to task management. Most of the industry's ubiquitous engines, CryEngine included, have traditionally assigned tasks to specific threads. For example, a generic CryEngine game might spawn one thread for physics processing (on the CPU), one for rendering, one for game logic or AI, one for audio, and so on. This creates an imbalanced workload distribution, and the imbalance is worst on whichever thread receives the most intensive job, because the rest of the frame's work ends up waiting on it. That's why you'll often see games push the overwhelming majority of their processing to Core 1, which shows the highest utilization in resmon or other monitoring tools.
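To make that imbalance concrete, here is a minimal Python sketch that compares the two scheduling models. The per-subsystem costs and the names `SUBSYSTEM_WORK_MS`, `thread_per_subsystem_ms`, and `job_based_ms` are hypothetical, and nothing here is taken from Nitrous or CryEngine internals. In the thread-per-subsystem model, the frame is bound by the busiest dedicated thread. In the job-based model, the same work is cut into small jobs, and each job goes to whichever worker currently has the least load.

```python
import heapq

# Hypothetical per-frame CPU work, in milliseconds, for each engine subsystem.
SUBSYSTEM_WORK_MS = {"render": 12.0, "physics": 6.0, "ai": 3.0, "audio": 1.0}


def thread_per_subsystem_ms(work):
    """Each subsystem owns one thread, so the busiest thread sets the frame time."""
    return max(work.values())


def job_based_ms(work, workers, job_size_ms=1.0):
    """Split every subsystem's work into small jobs and give each job to the
    least-loaded worker; the most-loaded worker then sets the frame time."""
    jobs = []
    for total in work.values():
        remaining = total
        while remaining > 0:
            chunk = min(job_size_ms, remaining)
            jobs.append(chunk)
            remaining -= chunk
    # Min-heap of (current load, worker id): the idlest worker is always on top.
    loads = [(0.0, worker) for worker in range(workers)]
    heapq.heapify(loads)
    for job in sorted(jobs, reverse=True):
        load, worker = heapq.heappop(loads)
        heapq.heappush(loads, (load + job, worker))
    return max(load for load, _ in loads)


if __name__ == "__main__":
    print("thread per subsystem:", thread_per_subsystem_ms(SUBSYSTEM_WORK_MS), "ms")
    print("job-based, 4 workers:", job_based_ms(SUBSYSTEM_WORK_MS, workers=4), "ms")
```

With these made-up numbers, the dedicated-thread model is stuck behind the 12ms render thread, while four workers sharing 1ms jobs finish in 6ms. The model deliberately ignores dependencies between jobs, such as rendering work that has to wait on physics results. A real job system has to respect those dependencies.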

The Nitrous engine takes this job-based approach, something we've previously discussed with Chris Roberts. These newer approaches to task management are what ultimately relieve the CPU's burden, allowing a more even distribution of processing and a less 'clogged' task pipeline. In our interview, Roberts, the CIG (Cloud Imperium Games) CEO behind Star Citizen, told us about the roadmap to Dx12 and the steps required to properly build a game for the new API:

“Refactoring the low-end of the [Star Citizen] engine [for Dx12] will [also] work for Vulkan. They have similar engines, similar multi-core, multi-threading, multi-job paradigms. It's not just adding more jobs, it's also about refactoring some of the pipeline, how you deal with the data, how you organize your resources so you can be more parallel, which is how you get the real power of Dx12 or Vulkan. You can take a Dx11 renderer and make it Dx12 pretty quickly. You're not going to get the benefit of Dx12 or the Vulkan stuff until you do more fundamental refactoring.”

Leveraging Dx12 and the Nitrous engine, Ashes of the Singularity developer Oxide is also promoting the advantages of asynchronous processing. It's sort of like “parallelizing” an already parallel processor (the GPU). Despite being a parallel processing unit, the GPU often finds itself queue-blocked by some other job in the pipe, or even by the CPU, which translates draw calls on the older APIs. Much like asynchronous patterns in modern web development, the new APIs let work move through the GPU pipeline asynchronously so that fewer tasks 'wait in line,' shortening the overall render interval. On the CPU side, Nitrous's job-based approach sends tasks to as many cores as possible at all times, without regard for any special “render core” or “physics core.”
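The benefit of letting work skip the line can be sketched with an idealized timing model. In the Python sketch below, a hypothetical frame consists of graphics passes and compute passes that are assumed to be independent of the graphics work. The pass names, millisecond costs, and helper functions are all invented for illustration. With a single in-order queue, everything serializes. With a separate asynchronous compute queue, in the best case the compute work fully overlaps the graphics work, so the frame takes only as long as the longer queue.

```python
# Hypothetical per-frame GPU work in milliseconds (illustrative values only).
GRAPHICS_PASSES_MS = {"shadows": 2.0, "geometry": 4.0, "lighting": 3.0}
COMPUTE_PASSES_MS = {"particles": 1.5, "post_fx": 2.0}  # assumed independent of graphics


def serialized_frame_ms(graphics, compute):
    """One in-order queue: every pass waits for the previous one to finish."""
    return sum(graphics.values()) + sum(compute.values())


def overlapped_frame_ms(graphics, compute):
    """Best case for an async compute queue: both queues run fully in
    parallel, so the longer queue sets the frame time."""
    return max(sum(graphics.values()), sum(compute.values()))


if __name__ == "__main__":
    print("serialized:", serialized_frame_ms(GRAPHICS_PASSES_MS, COMPUTE_PASSES_MS), "ms")
    print("overlapped (ideal):", overlapped_frame_ms(GRAPHICS_PASSES_MS, COMPUTE_PASSES_MS), "ms")
```

In this toy model, the frame drops from 12.5ms to 9.0ms. Real GPUs share execution resources between queues, so actual gains fall somewhere between these two bounds.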

Arbitrary AFR & “SLIFire”

Multi-GPU configurations within Ashes of the Singularity's Dx12 environment operate using arbitrary alternate frame rendering (AFR). Ashes supports both LDA (linked display adapter, i.e. traditional SLI/CrossFire) and MDA (multi-display adapter) modes. LDA works with SLI and CrossFire bridges; MDA simply runs two display adapters without bridges and relies on the PCI-e bus for communication. MDA also allows multiple GPUs of different brands or models to work together, with reasonable if imperfect harmony.
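Alternate frame rendering hands whole frames to each GPU in turn. The minimal Python sketch below contrasts strict round-robin AFR with a speed-weighted split. For the weighted case it uses smooth weighted round-robin, a generic load-balancing technique we've swapped in purely for illustration. We don't know exactly how Oxide's 'arbitrary' AFR divides frames between mismatched cards. The weights, the helper names, and the ideal-throughput formulas are therefore assumptions, and they ignore PCI-e transfer and frame-pacing overhead.

```python
def round_robin(num_frames, num_gpus):
    """Strict AFR: frame i goes to GPU i mod n, regardless of GPU speed."""
    return [i % num_gpus for i in range(num_frames)]


def weighted_round_robin(num_frames, weights):
    """Smooth weighted round-robin: each GPU receives frames in proportion
    to its integer weight, with its frames evenly interleaved."""
    total = sum(weights)
    current = [0] * len(weights)
    order = []
    for _ in range(num_frames):
        for gpu, weight in enumerate(weights):
            current[gpu] += weight
        chosen = current.index(max(current))  # first GPU with the highest credit
        current[chosen] -= total
        order.append(chosen)
    return order


def ideal_strict_afr_fps(gpu_fps):
    """Equal frame shares mean the slowest GPU paces the whole chain."""
    return len(gpu_fps) * min(gpu_fps)


def ideal_weighted_fps(gpu_fps):
    """If frames are split by speed with zero overhead, throughputs simply add."""
    return sum(gpu_fps)


if __name__ == "__main__":
    print(round_robin(6, 2))
    print(weighted_round_robin(5, [3, 2]))
    print(ideal_strict_afr_fps([60, 40]), ideal_weighted_fps([60, 40]))
```

Take two hypothetical cards rated at 60FPS and 40FPS. Under strict alternation, the slower card caps the pair at an ideal 80FPS. A 3:2 weighted split could, in theory, approach 100FPS. This is why how frames are distributed matters more when the cards aren't matched.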

For our tests, we used the MDA approach to combine an R9 390X and a GTX 970 (more out of curiosity than anything else) and LDA for “standard” SLI testing of 2x GTX 970s. A single R9 390X and a single GTX 970 were also tested for baseline performance.

Use Case Limitations & the 'Why?'

A few housekeeping notes: the use cases for multiple cross-brand GPUs are exceedingly limited, as we discuss in the video. If you're building a new system, there's absolutely no reason you'd want to buy, for example, an R9 Fury X and a GTX 980 Ti and run them together. Almost no games presently support Dx12, fewer still support this type of multi-GPU configuration, and even then, the developer still has to support and test that configuration. It's not all just about drivers and APIs; if the game's QA team hasn't run the configuration, there's a good chance bugs will surface. We'd never recommend deliberately building a system like the one we're testing, with two mismatched GPUs, but there are some use cases where such a setup could arise more naturally.

One of those use cases is the upgrade path. Say you were running a GTX 970 and wanted to move to a 980 Ti: MDA and Dx12 allow both cards to be used without ditching the weaker one. In that case, you'd want the 980 Ti in your primary slot for the times when mismatched multi-GPU configurations are not supported (read: almost 100% of the time, currently).

This isn't a test of anything especially practical today, but the industry is moving toward officially supporting these haphazard hardware configurations, so it's worth validating and observing them now.

Test Methodology

We tested using our 2015 multi-GPU test bench. Our thanks to supporting hardware vendors for supplying some of the test components.

Press-access drivers from AMD were used for testing. NVidia's 361.91 drivers were used in this test, per nVidia's recommendation. Game settings were manually controlled for the DUT (device under test). Ashes of the Singularity was run at several settings presets, but today's test looks primarily at 1080p with the “High” preset and 2x MSAA. We also ran Dx11 vs. Dx12 benchmarks, including 4K and “Crazy” settings tests, but those will be published in an upcoming article.

We used the built-in benchmark course and its data dump (which felt more like data dredging than analysis, at times) and ran the full 3-minute course. The course was run multiple times for consistency, and the results were averaged.
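For readers who want to reproduce this kind of test, averaging repeated passes could look like the Python sketch below. The per-run values are placeholders, not our results, and the `summarize` helper is hypothetical. Reporting the coefficient of variation alongside the mean is our own addition, meant to flag an outlier run. Because every pass covers the same fixed-length course, the mean of the per-run FPS values equals total frames divided by total time.

```python
from statistics import mean, stdev

# Placeholder average-FPS results from repeated passes of one course (not real data).
RUNS_FPS = [60.0, 62.0, 61.0]


def summarize(runs):
    """Return the mean FPS and the run-to-run coefficient of variation
    (sample standard deviation divided by the mean, in percent)."""
    avg = mean(runs)
    cv_percent = stdev(runs) / avg * 100 if len(runs) > 1 else 0.0
    return avg, cv_percent


if __name__ == "__main__":
    avg, cv = summarize(RUNS_FPS)
    print(f"mean {avg:.2f} FPS, run-to-run variation {cv:.2f}%")
```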

| GN Test Bench 2015 | Name | Courtesy Of | Cost |
|---|---|---|---|
| Video Card | This is what we're testing! | - | - |
| CPU | Intel i7-5930K CPU | iBUYPOWER | $580 |
| Memory | Kingston 16GB DDR4 Predator | Kingston Tech. | $245 |
| Motherboard | EVGA X99 Classified | GamersNexus | $365 |
| Power Supply | NZXT 1200W HALE90 V2 | NZXT | $300 |
| SSD | HyperX Savage SSD | Kingston Tech. | $130 |
| Case | Top Deck Tech Station | GamersNexus | $250 |
| CPU Cooler | NZXT Kraken X41 CLC | NZXT | $110 |

NVidia Limitations with Dx12 in Ashes of the Singularity

NVidia cards currently show almost no difference between Dx11 and Dx12 in Ashes of the Singularity, which we currently suspect is attributable to a lack of driver support. Again, this will be analyzed further in the next article (Dx11 vs. Dx12). For now, know that nVidia's Dx12 performance in Ashes of the Singularity is nearly identical to its Dx11 performance; at this time, there is no perceptible user advantage to using Dx12 with nVidia cards in this game.

Ashes of the Singularity Benchmark – DirectX 12 R9 390X + GTX 970 vs. SLI 970s

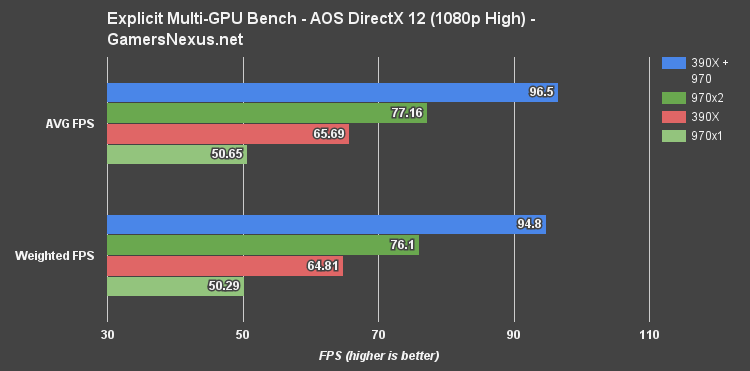

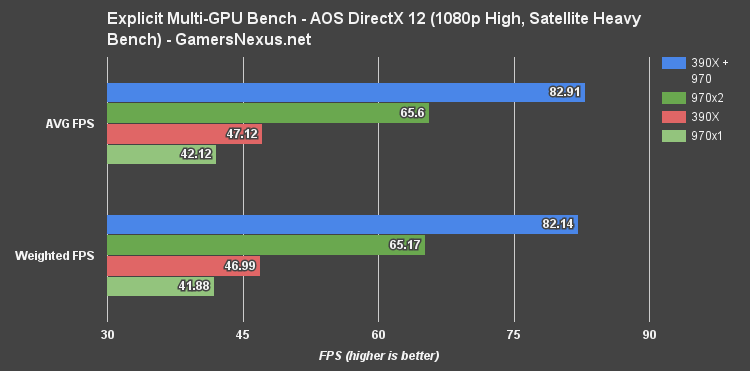

The first chart (above) shows average FPS and weighted FPS performance at 1080p/High.

Looking strictly at Dx12 explicit multi-GPU, running a GTX 970 and R9 390X as a pair yields a significant gain over a standalone 390X or a standalone GTX 970. This is because the game can split its rendering work across both cards via alternate frame rendering, creating a Frankenstein's monster version of the more familiar SLI or CrossFire setups.

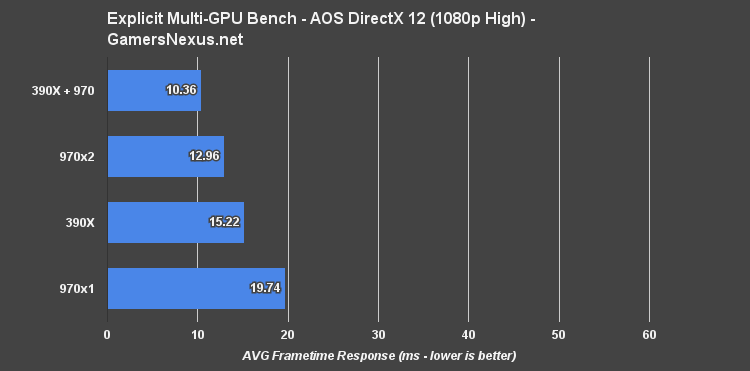

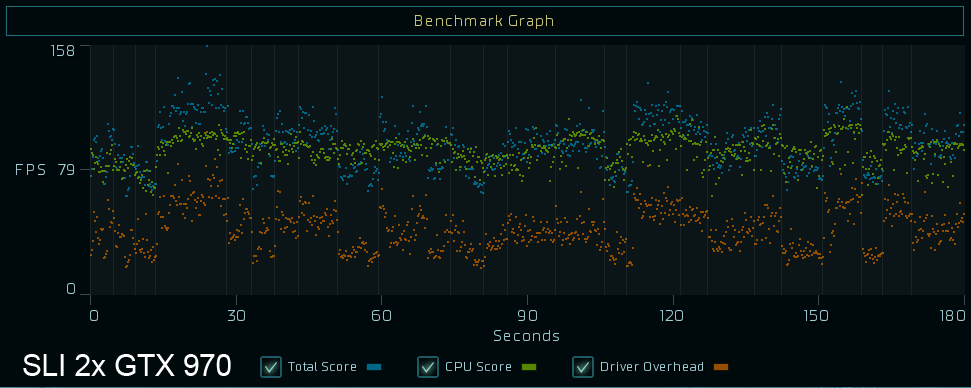

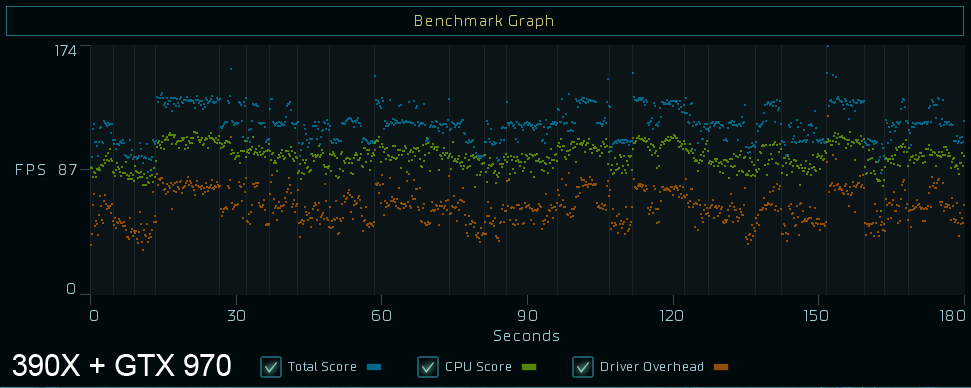

Our 390X + 970 configuration averaged 96.5FPS at 1080p with High settings and 2x MSAA, which works out to an impressive average frametime of 10.36ms (1000 / 96.5). We saw only a little visible tearing. The standalone 390X pushed 65.7FPS average, a 38% difference against the weird combo configuration we ran. A single GTX 970 presently runs at 50.65FPS, a 26% difference from the 390X and a 62% difference from the combo config. (These are percent differences: the gap divided by the mean of the two results. Expressed as a speedup, the combo config runs about 47% faster than the 390X and about 91% faster than the GTX 970.) SLI GTX 970s were unimpressive at this time, running only 41.5% faster than the single GTX 970 and markedly slower than our unlikely AMD-nVidia combo.
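The comparisons in the paragraph above can be re-derived from the reported averages with a few lines of Python. The helper names are ours. `percent_difference` is the symmetric metric (the gap divided by the mean of the two values) that reproduces the 26% and 62% figures. `percent_faster` is the conventional relative speedup, included for comparison.

```python
# Average FPS values reported above for 1080p, High, 2x MSAA.
AVG_FPS = {"390X + 970": 96.5, "R9 390X": 65.7, "GTX 970": 50.65}


def frametime_ms(fps):
    """Average frametime implied by an average framerate."""
    return 1000.0 / fps


def percent_difference(a, b):
    """Symmetric percent difference: the gap relative to the mean of both values."""
    return abs(a - b) / ((a + b) / 2) * 100


def percent_faster(fast, slow):
    """Conventional relative speedup of `fast` over `slow`, in percent."""
    return (fast - slow) / slow * 100


if __name__ == "__main__":
    combo, r9, gtx = AVG_FPS["390X + 970"], AVG_FPS["R9 390X"], AVG_FPS["GTX 970"]
    print(f"combo frametime: {frametime_ms(combo):.2f} ms")
    for name, a, b in [("combo vs 390X", combo, r9),
                       ("390X vs 970", r9, gtx),
                       ("combo vs 970", combo, gtx)]:
        print(f"{name}: {percent_difference(a, b):.1f}% difference, "
              f"{percent_faster(a, b):.1f}% faster")
```

The symmetric metric gives the same answer whichever card is listed first, which makes it convenient for tables. `percent_faster` is the better fit when the question is how much faster one configuration runs than another.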

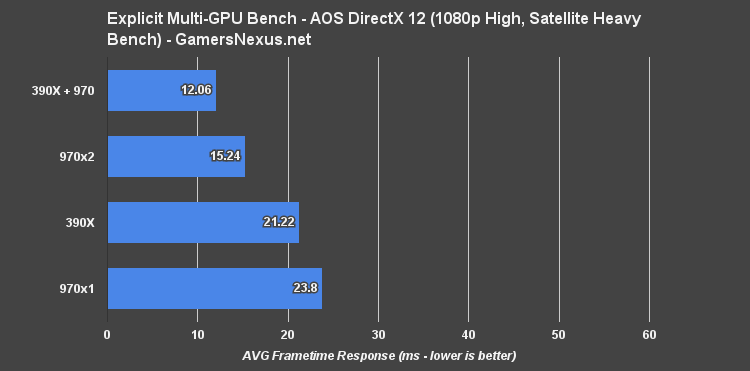

1080p, High - Satellite Shot (Heavy GPU Load)

Here are a few more charts from our 1080p/High benchmarking course, splitting out the most heavily loaded portion of the bench ("Satellite Shot"):

Conclusion: Cross-Brand NVIDIA + AMD “SLIFire” Works

That's the primary conclusion here: it works. Cards from different manufacturers can be paired with one another, at least in Ashes of the Singularity under Dx12. Performance also improved meaningfully over a standalone GTX 970 or standalone R9 390X. Why you'd willingly do this as a user, rather than as a benchmarker, is still somewhat nebulous.

The use cases are thin. Building a system from the ground up with cross-brand GPUs would hamstring performance in nearly every game currently on the market, since you'd end up removing or disabling one card for all of those games. Reasonable gains appear only in Dx12 games that support explicit multi-GPU. The most likely use case would be an upgrade path, e.g. running a GTX 970 or 390X today, then upgrading to a 980 Ti or Fury X. That would let you keep the 'old' card, but game support is still limited.

Regardless, the unlikely pairing worked, and worked well, and it seems the rumors of memory pooling and cross-brand utilization are starting to solidify. AMD presently holds a clear advantage in Ashes of the Singularity's Dx12 benchmark, likely due to a mix of driver and game optimization, while nVidia shows effectively no performance gain from Dx11 to Dx12 in the Ashes benchmark specifically. That's just one game, though, and it doesn't mean the same holds true for all games.

Check back soon for direct Dx11 vs. Dx12 benchmarks and a clearer picture of how AMD and nVidia performance scales between the two APIs.

Editorial, Testing: Steve “Lelldorianx” Burke

Video Editing: Andrew “ColossalCake” Coleman